Post scriptum (2022, week 19) — When history is weaponised for war, how to think about free will, and rethinking the definition of reality

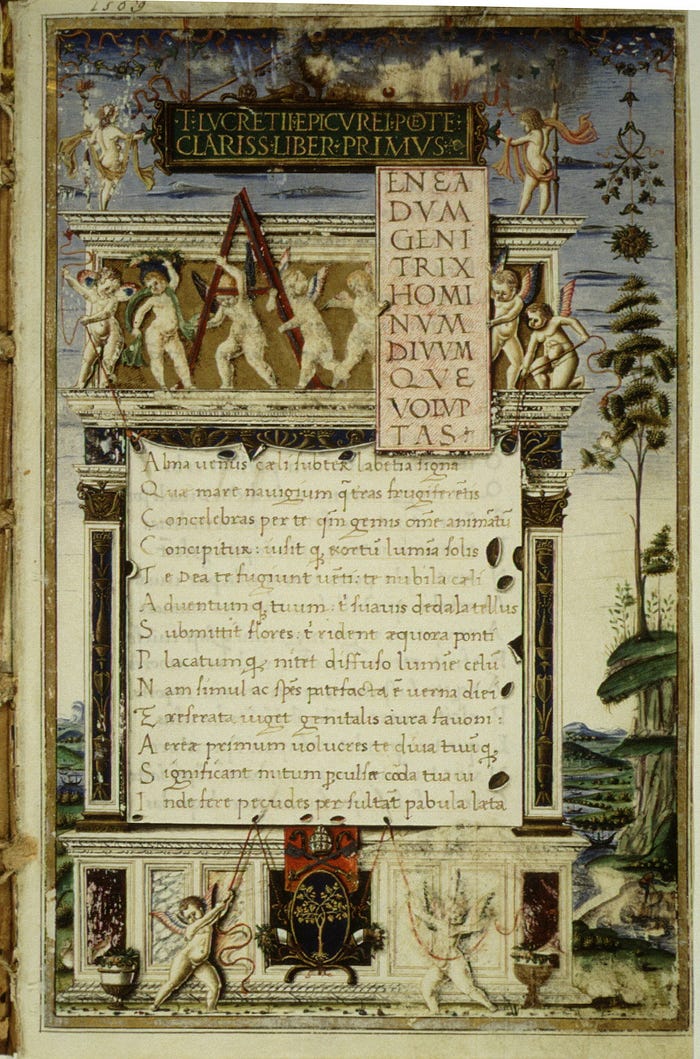

Post scriptum is a weekly curation of my tweets. It is, in the words of the 16th-century French essayist and philosopher, Michel de Montaigne, “a posy of other men’s flowers and nothing but the thread that binds them is mine own.”

In this week’s Post scriptum: Bad history can kill; rethinking the concept of free will; “Space is big. But reality is bigger”; the forgotten stage of human progress; what’s wrong with management education; Carlo Rovelli explores beyond physics; Massimo Vignelli at 90; and, finally, Matisse’s most “musical” painting.

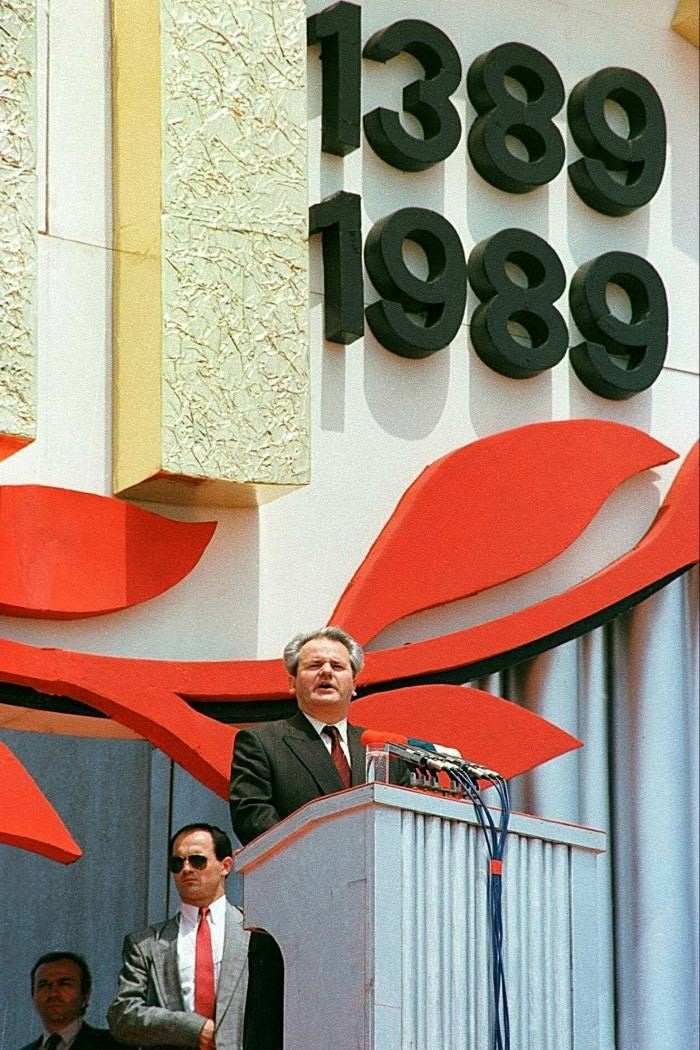

When history is weaponised for war

“[S]omewhere within the mind of tyrants lies the strange urge to be a professor; to cloak Machiavellian brutality with the gravitas of scholarly authority,” Simon Schama writes in When history is weaponised for war.

“Posing thus, autocrats can persuade themselves — and those to whom they feed their deluded claptrap — that their belligerence is at the service of some higher mission: the recovery of national self-respect, the righting of grievous wrongs and humiliations inflicted by wicked foreigners. Invariably it’s history, or rather, their mangled version of it, that gets wheeled out to vindicate those obsessions. Should actual, factual history, with all its complexity and nuances, resist being nailed to the Procrustean bed of grievance, then the inconveniences of truth can always be trimmed away.

So it was in July of 2021 that Vladimir Putin, in his own wannabe professor mode, published a lengthy screed, On the Historical Unity of Russians and Ukrainians ‡, which manages to be stupefyingly dull while also exhaustively untrue.”

“[H]istory has never been just an escapist exercise in time travel; it has always been entangled in the toils of power,” Schama writes. “From the outset, its dominant subject was war: the measure of sovereign success or failure. Herodotus began his Histories by stating that his purpose was to prevent the deeds of those who fought in the Greek-Persian wars from slipping into oblivion (though much of the attractiveness of his work lies in its insatiable curiosity about the manners and mores of non-Greeks). But when an actual general, Thucydides, turned historian of the Peloponnesian Wars, his writing, perhaps sobered by direct experience, banished triumphalism and turned history into a critical discipline.

“Herodotus, from Halicarnassus, here displays his enquiries, that human achievement may be spared the ravages of time, and that everything great and astounding, and all the glory of those exploits which served to display Greeks and barbarians alike to such effect, be kept alive — and additionally, and most importantly, to give the reason they went to war.”

— The opening lines from The Histories by Herodotus (in a translation by Tom Holland)

The principal protagonist of Thucydides’ book is not a mythic hero but a classical ideal: Athenian democracy, which, while most honoured in the breach, was nonetheless deemed worthy of ultimate sacrifice. Read History of the Peloponnesian War and you will find things forbidden by the likes of Vladimir Putin: debates about the ethics of killing prisoners and civilians; disputes over whether expeditionary warfare was the instrument of opportunistic self-promotion; and, most shocking of all, the graphically unsparing report of disastrous Athenian defeat in Sicily that is the monumental narrative climax of Thucydides’ masterwork.

Openness to self-criticism, the mark of strong, honest history, is not, as is sometimes said by flag-waggers and drumbeaters, a sign of national self-hatred. On the contrary, it represents an optimistic patriotic faith that, in free societies, the cohesion of national community is better served by the examination of truth than by otiose flattery.

Our postwar generation had inspiring models of citizen-historians prepared to make sacrifices of their own lives for the sake of history’s integrity: Marc Bloch, the great medieval historian who wrote Strange Defeat, about the roots of French collapse in 1940, joined the Resistance and was shot by the Gestapo in 1944; or Benedetto Croce, who went from flirting with fascism to becoming one of its most adamant and ethically uncompromising enemies.

They were our heroes and paragons. In their memory, we thought our job was to be gadflies for truth; the discomfiters of the powerful, not the service industry of their feel-good fables. We also believed — and most of us still do — that history fashioned as self-admiration will always yield to the hard force of fact. But maybe we have been kidding ourselves; maybe when the loss of something or other — territory, empire, a fantasy past of unclouded grandeur — triggers paroxysms of indignation, seeing red will always blind populists to the clarity of truth.

Booster histories are pumped with grievance: the ressentiment that Nietzsche identified as a condition of impotent but unassuageable rage at some sort of imagined, unjust loss; and the projection of that anger towards those cast as the agents of humiliation. Putin mourns for the empire lost by the collapse of the Soviet Union and attributes the debacle to the designers of what he calls an ‘anti-Russia project,’ salivating to inflict yet another humiliation. In his view, post-Maidan Ukraine after 2014 allowed itself to be co-opted into that nefarious Euro-American strategy and so must now be punished for presuming to control its own destiny.”

“It would be better for the world if Thucydides’ fearlessly self-scrutinising history were also to be the most popular form of its literature. Unhappily, this is not the case and perhaps never has been. It is bad, cheerleading history that stirs the blood, makes the pulse race and fogs the brain with sentimental consolations. That bad history sells books and, as politicians wanting to ride the populist wave well know, has the rally crowds upstanding in hooting delirium. Why ditch it merely because it’s cheaply tendentious or even transparently untrue?”

“Is it possible, then, for a modern nation to free itself from the relentless revisiting of ancient tribal grievance and still feel part of a patriotic community? Two examples, at least, tell us that it can be. From the denial and conspiracy of silence that lay heavily on postwar Germany, the late 20th century saw an extraordinary national accounting and an unflinching education in the horrors of the Third Reich. And Ireland, which for so long seemed doomed to be trapped in bad history, has over the past few decades become liberated from the blood sacrifices demanded by remembrance. Modernity, in its most inescapable form — the need to make a life, day to day, year to year, family to family — has been the redeemer.”

‡ In August 2017, Harvard University’s Ukrainian Research Institute (HURI) published an interview with Serhii Plokhii about his book Lost Kingdom: The Quest for Empire and the Making of the Russian Nation, which addresses many of the themes emerging in discussions in the wake of Putin’s statements.

How to think about free will

“Whenever people think through the implications of living in an entirely naturalistic universe, without any supernatural agency, doubts follow about free will,” Julian Baggini writes in How to think about free will.

“Quantum physics may tell us that some causes are probabilistic and so do not strictly determine their effects. But the randomness of quantum causation is no refuge for the notion of free will. Freedom is not the ability to act randomly, without any control about what effects follow.

The final nail in free will’s coffin seems to come from neuroscience. Various brain studies have claimed to show that actions are initiated in the brain before we have any awareness of having made a decision. In other words, the thought ‘I’ll choose that’ comes after the choice is made. Actions are determined by unconscious, unchosen brain processes, and the feeling of having made a decision comes later. On this view, believing that these thoughts have any role in determining what we do would be like mistaking the noise made by an engine for the force that powers it.

Voluntarist free will therefore appears to be an illusion. No matter how free we feel, our understanding of nature tells us that no choice originates in us but traces its history throughout our histories and our environments. Even leaving aside physics, it seems obvious that, at the moment of any choice, the conditions for that choice have already been set, and to be able to escape them would be no more than the ability to generate random actions. And if all that is true, praise, blame and responsibility look like illusions too.”

But in giving up the voluntarist conception, we don’t have to throw out the notion of free will altogether, Baggini writes.

“Free will isn’t an illusion, it’s just that the voluntarist conception of free will is flawed and untenable. It understands the free/unfree distinction to hinge upon whether our beliefs, desires and choices have causes or not, which is ridiculous, since obviously everything is caused. What we need is a compatibilist conception of free will, one that reconciles human freedom with the causal necessity of the physical world.

Such a conception is hiding in plain sight, in the ways in which we distinguish between free and unfree actions in real life. We rarely, if ever, ground this distinction in a metaphysical thesis about causation. Rather, we distinguish between coerced and uncoerced choices. If no one ‘made me do it,’ I acted freely.

Worries about free will tend to shift these coercive forces to within us, most obviously when people say: ‘My brain made me do it.’ But ‘your brain’ can’t make ‘you’ do anything, unless ‘you’ is something separate from your brain. If your brain is part of you, ‘my brain made me do it’ makes no sense. After all, if your brain wasn’t key to your decision-making, what else could be? Your immaterial soul? It is telling that almost everyone who defends voluntarist free will answers ‘yes’ to this ostensibly rhetorical question and has a religious belief in such souls. For those of us who accept the materiality of human animals, this option is a non-starter.

It is not quite enough, however, to say that, as long as choices are not coerced, they are free. Bees are not forced to spread pollen at gunpoint but their behaviours are too automatic to be classed as free. Similarly, highly automatic or unreflective human behaviours, such as addictive consumption, don’t seem to be genuinely free either. So what elevates some human choices to the genuinely free rather than the merely unforced?

The best answer to this remains Harry Frankfurt’s influential theory about the difference between first- and second-order desires. Our first-order desires are the ones we just have: for a piece of cake, to have sex, to scratch our itching skin. Second-order desires are desires about these desires. I may not want to want to eat cake, because I’m trying to eat more healthily. I may not want to want to have sex because the object of my desire is not the person I am in a monogamous relationship with.

Frankfurt says that we have the kind of free will worth having when our first- and second-order desires are aligned and we act on them. When we choose to do something that, all things considered, we don’t want to do, we have failed to exercise our free will and have behaved compulsively. If we haven’t even thought about whether we desire a desire, we are not exercising our free will if we unthinkingly act on it.

Second-order desires do not escape the chains of cause and effect. At bottom, they are the result of a series of events that we did not choose. But nothing we can do can be freely chosen ‘all the way down’. No one can choose the things that most fundamentally shape them: their genes, society and family. Not even God would be free to change its nature, if it existed.

Recognising the free will we do have requires accepting that complete freedom is an impossibility. To have free will is no more nor less than to be free enough to choose for ourselves on the basis of reasons that we endorse on reflection.”

Adopting a compatibilist view of free will matters, says Baggini, because it will encourage a more humane society.

“If we adopt a compatibilist notion of free will, we have to rid ourselves of delusions and illusions of ‘ultimate’ freedom and control. It can be disturbing to accept that there is a very real sense in which we could not do other than what we do, or be other than who we are. But if we can get over these discomforting thoughts, we can move to a place of humility and compassion that is both more humane and realistic than one rooted in naive beliefs in unfettered human freedom.

[…]

Compatibilism also allows for the undeniable fact that what we think changes how we act. Many assume that if all that we do is ultimately governed by cause and effect, then our actions are caused by brain processes that ‘bypass’ our thoughts and beliefs. But it cannot be as simple as that since, when we change what we believe, we change how we act. If I think that cake is poisoned, I won’t eat it. That is why it is important to think of our actions as being under a degree of control: what we think does change what we do.

One final lesson that is not sufficiently understood is that we should not be afraid of the fact that many of our decisions are made unconsciously. Artists, for example, often report that they have no idea where their ideas come from and that they feel more like conduits than creators. Yet art is one of the highest expressions of human freedom. The role of the conscious mind is to process and hone what arises from the unconscious. The result is creation, which is wholly that of the artist. Without conscious awareness of what we do, freedom is impossible, but conscious control is not something that we always need to be exercising.”

Rethinking the definition of reality

“If you woke up one day and discovered that you were living in a virtual world — that everything you’d ever known was, like the Matrix, a form of hyper-realistic simulation — what would this imply for your hopes, dreams and experiences? Would it reveal them all to be lies: deceptions devoid of authenticity?

For most people, the intuitive answer to all these questions is ‘yes.’ After all, the Matrix movies depict a dystopian nightmare in which humanity has been enslaved by sinister machines. How else to think about the revelation that ‘reality’ is nothing like it seems? For the philosopher David Chalmers, however, none of this necessarily follows. No matter what the status of your reality, he suggests, your thoughts and experiences remain as real as it gets. And the value and purpose of your life are similarly untouched. In fact, as Chalmers bluntly puts it in his new book, Reality+: Virtual Worlds and the Problems of Philosophy: ‘Simulations are not illusions. Virtual worlds are real. Virtual objects really exist.’ And the sooner we get used to these ideas, the sooner we will be able to grasp some of the digital age’s deepest tensions,” Tom Chatfield writes in The man rethinking the definition of reality.

The reality dilemma

“Near the start of the first Matrix movie, the character Neo (Keanu Reeves) faces a dilemma. He has just been told that his world is, in fact, a simulation within a larger reality. Now he has a choice. He can take a blue pill and keep on living forgetfully in the Matrix, as if nothing has happened. Or he can take a red pill and wake up into the ‘base’ reality beyond it. What should he do? What would you do? Neo chooses the red pill — and goes on to save both the external and simulated worlds. But, as Chalmers pointed out in a 2003 article commissioned by the production company behind The Matrix, discovering that you’ve lived your entire life inside a simulation doesn’t actually invalidate the ‘reality’ of that life.

After all, if you were born and grew up in the Matrix, you would by definition never have encountered any non-simulated objects, or had any experiences prompted by non-simulated interactions. What you call ‘trees’ are actually digital simulations. But since you’ve never seen a non-simulated tree, all this means is that everything you know about ‘trees’ can technically be rephrased as being about ‘simulated trees.’ Unless you have suddenly been granted simulation-breaking new powers, this revelation is no different to discovering that what you have been calling ‘trees’ are, technically, ‘accretions of subatomic particles’ or ‘collapsed quantum waveforms’ or ‘temporarily captured energy.’ In other words, Chalmers suggests, if I were to wake up one day and discover I’m living in a simulation, ‘I should not infer that the external world does not exist, or that I have no body, or that there are no tables and chairs… Rather, I should infer that the physical world is constituted by computations beneath the microphysical level. There are still tables, chairs, and bodies: these are made up fundamentally of bits, and of whatever constitutes these bits. This world was created by other beings, but is still perfectly real.’

What follows from this? Among other things, Chalmers argues in Reality+, the question of whether we’re living in a simulation has an unexpectedly theological dimension. A simulation operated by super-powerful entities is, in many ways, equivalent to a Universe created by a divine being. And it begs similar questions — not least if you turn out to be one of the super-powerful entities in question. What kinds of risks and responsibilities accompany the god-like powers associated with operating simulated worlds? Given that Facebook recently changed its name to Meta, in honour of the immersive environments it plans soon to unveil, the question of what it means for corporations to operate realms within which they’re close to omniscient and omnipotent has a startlingly practical dimension.

‘If you think that privacy and manipulation are already a problem on current social media,’ Chalmers told [Chatfield], ‘they’re obviously going to have the potential to be much more so when it comes to virtual worlds controlled and created by the same corporations.’ And this potential is even greater once we recognise that the values, experiences, objects and interactions at play in such worlds are real. In fact, the questions that matter most are not about reality and unreality at all, but rather about the kinds of experience, agency and opportunities afforded by any environment we are responsible for: ‘if these are genuine realities, ones where you can have meaningful experiences… what kind of meaningful experiences are we going to have?’

A sense of virtuality

Plenty of philosophers and ethicists have made the case in recent years for the importance of principles like privacy, transparency, agency and explicability within information environments. Chalmers is unusual, however, in the intensity of his focus upon the technology’s most distant horizons — and his quest for a non-naïve optimism when it comes to humans’ relationships with and through their creations.

To see what such an optimism might look like in practice, consider an inexperienced user of a virtual environment who doesn’t, for instance, know that the avatar they’re chatting to is being controlled by a corporate AI rather than a human. This is a scenario in which an informational asymmetry — the fact that the user is profoundly deceived about the nature of the interaction — may be connected to all kinds of manipulation or exploitation. Contrast this with an experienced user of a virtual environment who is hanging out with some avatars controlled by (human) friends as well as an AI-controlled avatar that’s telling them stories beside a virtual campfire. This is a very different prospect. What’s playing out here is a potentially life-enhancing encounter in an artificial realm — its pleasures derived from a knowing combination of verisimilitude and fictionality.

In Reality+, Chalmers uses the phrase ‘a sense of virtuality’ to describe the ways in which people know that an object or environment is simulated — and the importance of this awareness when it comes to rich, meaningful interactions with virtual environments. ‘I think knowledge is very important,’ he told [Chatfield]. ‘That, when you’re interacting with something virtual, you know it’s virtual; that, when you interact with something digital, you know it’s digital. It wouldn’t surprise me if this becomes part of the ethical regulation of virtual worlds. It’s not to say that these virtual worlds are not real. You just want to know which reality you’re in.’

The knowledge that you bring to a simulated experience is, in other words, a vital component of that experience — something that applies equally to any ‘real’ situation. In each case, to be under-informed or misinformed is to be vulnerable to various kinds of manipulation, while to possess meaningful options, agency and expertise is to be empowered.

This brings us to perhaps the most significant and sobering lesson of all: that, when it comes to consciousness, humans are at once brilliant and profoundly vulnerable. Countless artefacts, systems and environmental nudges are constantly altering and extending our minds. We do not and cannot access even ‘base’ reality directly via our senses. And this means that any and every moment we experience is at once more open and more unknowable than intuition easily lets us believe.

Change blindness

What, [Chatfield] asked Chalmers, are some of the things that have most surprised or excited him within our growing understanding of consciousness? One example that comes to mind, he told [Chatfield], is research into what’s known as ‘change blindness.’ Change blindness describes the ways in which people can effectively be ‘blind’ to even substantial changes in what’s in front of them, unless they are specifically looking out for such changes.

In one 1998 experiment, for example, experimenters initiated a conversation with a pedestrian and then, halfway through the conversation, surreptitiously replaced the first experimenter with a different person who carried on the conversation. Only half of the pedestrians even noticed the change, a remarkable finding reinforced by a growing body of research that suggests people may be conscious of — as Chalmers puts it — ‘much less than we thought.’ It seems that our everyday awareness of the world is detailed, smooth and constantly updated. But this is little more than a useful illusion. ‘We thought we were conscious of everything, all the details of a picture; but it turns out that maybe we’re just conscious of seven blobs that we attend to. Whenever we attend to it, it’s there. But [at least so far as consciousness is concerned] it’s not always there.’

Our minds and perceptions, in other words, are fundamentally non-literal in their readings of reality — while perception itself is a kind of evolved illusion, useful and accurate enough to safeguard our survival, but nothing like as comprehensive as it seems. Virtual worlds and technological mediation are, in this sense, already a kind of second nature so far as humanity is concerned: environments and encounters neither more nor less inherently meaningful than anything else we experience. In the end, information itself is the reality that matters.

What is Chalmers’ own take when it comes to the status of his reality? Would he like to live in a simulation — or to know if he were already living in one? ‘I haven’t quite made up my mind,’ he says. ‘On the one hand, there’s something very cool about the idea of being in the base reality. There are all these simulations, but getting to be in base reality is a very interesting and special place to be. On the other hand, if we are in a simulation, then the Universe is much bigger and grander than we had thought.’

It’s a line of thought that feels, somehow, autobiographical — a version of the restless curiosity that took him halfway across the world, and that Reality+ maps across a succession of philosophical vignettes, provocations and parables. As he put it at the end of our conversation: ‘I grew up in Australia, and I discovered that at some point, oh my God, there’s a whole world out there that I get to explore beyond this. I think that knowing there’s a world outside our own Universe, perhaps even one that we could in principle explore, would open up horizons and possibilities that are exciting and interesting.’ And his ultimate verdict on that slippery word ‘reality’ — and why it needs to be followed by ‘plus’ to encompass everything he’s trying to say? ‘I guess I would like to say… that reality is capacious. Space is big. But reality is bigger.’”

In the margins

“The option to work from anywhere will be most attractive to people who have well-paid jobs and fewer obligations: childless tech workers, say. For many other people, the ‘anywhere’ in working from anywhere will still boil down to a simple choice between their home and their office. That might be a recipe for resentment within teams. Imagine dialling into a Zoom call covered in baby drool, and hearing Greg from product wax lyrical about how amazing Chamonix is at this time of year.

Resentment may even run the other way. Hybrid work has already smudged the boundary between professional and personal lives. Making everywhere a place of work smears them further. Countries that used to be places to get away from it all will become places to bring it all with you. Turning down meetings when you are on a proper vacation is wholly reasonable; it is not an option when you are plorking on a jobliday. Antigua and Barbuda’s tourism slogan, ‘The beach is just the beginning,’ sounds a lot more idyllic if the punchline in your head isn’t, ‘There’s also the weekly sales review.’

Adding to the menu of working options for sought-after employees makes sense. [Brian Chesky’s] new policies will probably help him attract better people to Airbnb. They are certainly aligned with the service he is selling. But for the foreseeable future, working from anywhere will be a perk for a lucky few rather than a blueprint for things to come.”

From: Why working from anywhere isn’t realistic, by Bartleby (The Economist)

“Progress is a puzzle whose answer requires science and technology. But believing that material progress is only a question of science and technology is a profound mistake.

- In confronting some challenges — for example, curing complex diseases, such as multiple sclerosis and schizophrenia — we don’t know enough to solve the problem. In these cases, what we need is more science.

- In other challenges — for example, building carbon-removal plants that vacuum emissions out of the sky — we have the basic science, but we need a revolution in cost efficiency. We need more technology.

- In yet other challenges — for example, nuclear power — we have the technology, but we don’t have the political will to deploy it. We need better politics.

- Finally, in certain challenges — for example, COVID — we’ve solved most of the science, technology, and policy problems. We need a cultural shift.”

From: The Forgotten Stage of Human Progress, by Derek Thompson (The Atlantic)

“Plenty of critics inside business schools have noted [a] reluctance to ask big-picture questions, despite the fad for genuflecting to environmental, social and governance concerns. Some note that schools are adept at defanging detractors, cordoning them off in their own professional journals and conferences and keeping them on payroll.

‘I’ve been rewarded for being as cheeky as possible,’ Martin Parker [a professor of organisation studies in the School of Management at the University of Bristol] told Molly Worthen. When his current employers hired him, they knew he was about to publish a book called Shut Down the Business School ‡, but they didn’t mind. ‘That doesn’t say they were particularly brave, but that my critique doesn’t matter very much,’ Dr. Parker told [Worthen]. ‘It’s not particularly threatening. I’m being petted by the emperor.’

Diversity initiatives and attention to environmental and social impact, he said, ‘amount to a green-washing, or ethics-washing, and conceal the major epistemological and structural issues that business schools assume, and glosses them with a particular kind of website fluff. It’s liberal fairy dust. Others don’t see it that way. They think capitalism just needs to become quite a bit nicer, that we need to orient corporations toward more benign investment strategies and less toxic relations with workers. That would be good — I’m not against small steps — but that diagnosis doesn’t reflect the nature of the problem we have.’”

From: This Is Not Your Grandfather’s M.B.A., by Molly Worthen (The New York Times)

‡ The following passage comes from Shut Down the Business School: What’s Wrong with Management Education, by Martin Parker (pages 70–71).

Liberalism, and art

The answer, Sumantra Ghoshal suggests [in his essay Bad Management Theories are Destroying Good Management Practices], is that the curriculum needs to be rebalanced and become ‘pluralist,’ it needs to be returned to a time before finance became quite so dominant. He calls this ‘pluralism,’ and seems to be referring to a liberal arts model of character formation, pretty much a reinvention of what the early US schools said about themselves. This is a call which has been taken up enthusiastically by quite a few business school academics. It trades on the idea of the liberal arts, the humanities, and suggests that the business school curriculum needs to widen to include more about the practice of management. The 2011 US Carnegie report into management education made much of this, suggesting that the business school needed to connect with other parts of the university, and see its task as including character formation.

There are two parts to this suggestion I think. One is to say that the sort of research and teaching that happens within the business school can be enriched by the inclusion of methodologies and topics not commonly seen to be part of its remit. So this could be an encouragement for qualitative research into what it is like to manage or to be managed, for the use of literary texts or films as teaching aids or resources for research, for the teaching of philosophy and history. This would certainly enlarge the imagination of the business school, and (within the context of the university) provide incentives for its inhabitants to visit other parts of campus.

Behind this, however, is a more radical suggestion: that the purpose of this sort of education is to do with character formation, the shaping of the minds of those who are passing through. Rather than just teaching techniques, the business school should be concerned with Plato’s education of the guardians — providing self-knowledge, wisdom, empathy. If the problem is that the current products of the school are pointy heads with ideas above their station, then make them read Emile Zola, study what Emmanuel Levinas says about ethics, and watch films about the tragic consequences of getting rich too quickly. That way, when they graduate, their eyes will have been raised from the spreadsheet, and their imaginations concerning the lives of others will have been enlarged.

“Maybe the best way to think of Carlo Rovelli’s worldview is through the work of Nāgārjuna, a second-century Indian Buddhist philosopher he admires. Author of The Fundamental Wisdom of the Middle Way, Nāgārjuna taught that there is no unchanging, underlying, stable reality — that nothing is self-contained, that all is variable, interdependent. Reality, in short, is always something other than what it just was, or seemed to be, he argues. To define it is to misunderstand it.

In Emptiness is Empty: Nāgārjuna, another piece from his new book [There Are Places in the World Where Rules Are Less Important Than Kindness], Rovelli writes about how the philosopher’s conception of reality provokes a sense of awe, a sense of serenity, but without consolation: ‘To understand that we do not exist is something that may free us from attachments and from suffering; it is precisely on account of life’s impermanence, the absence from it of every absolute, that life has meaning.’

Before leaving Rovelli’s home that day, I took another look at the concealing snow outside. Reality seemed at once more compelling and more mysterious. Hesitating, I asked him if he thought there was any grand, capital ‘T’ truth.

He indulged me, then paused for a moment.

‘Capital “T,” the Truth … I don’t think it’s interesting,’ he said. ‘The interesting thing is the small t. That’s my take on it.’”

From: Searching for What Connects Us, Carlo Rovelli Explores Beyond Physics, by Nicholas Cannariato (The New York Times)

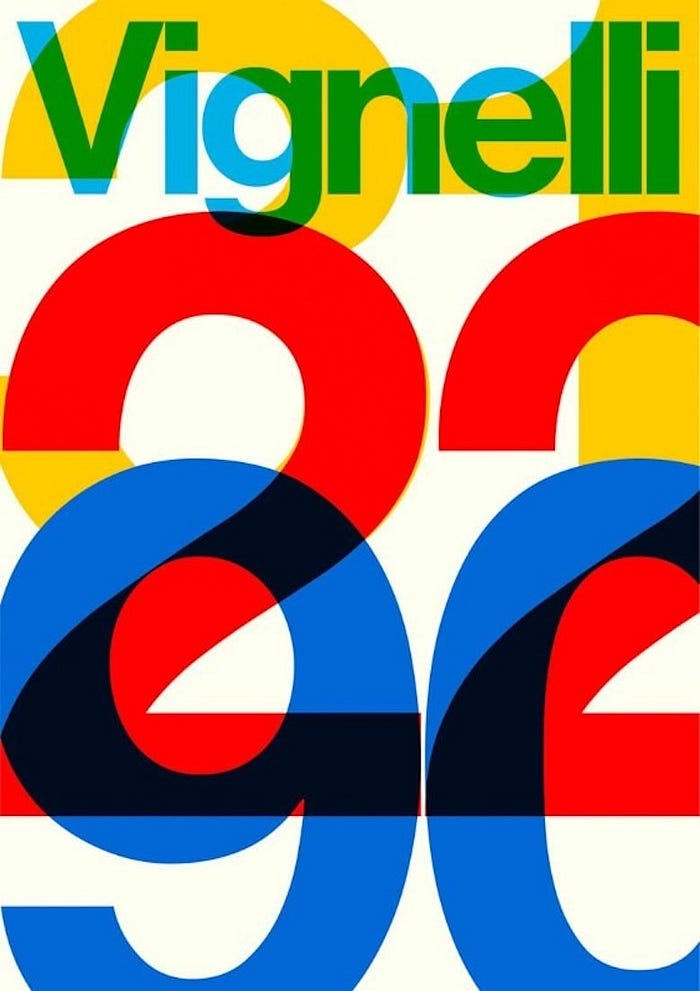

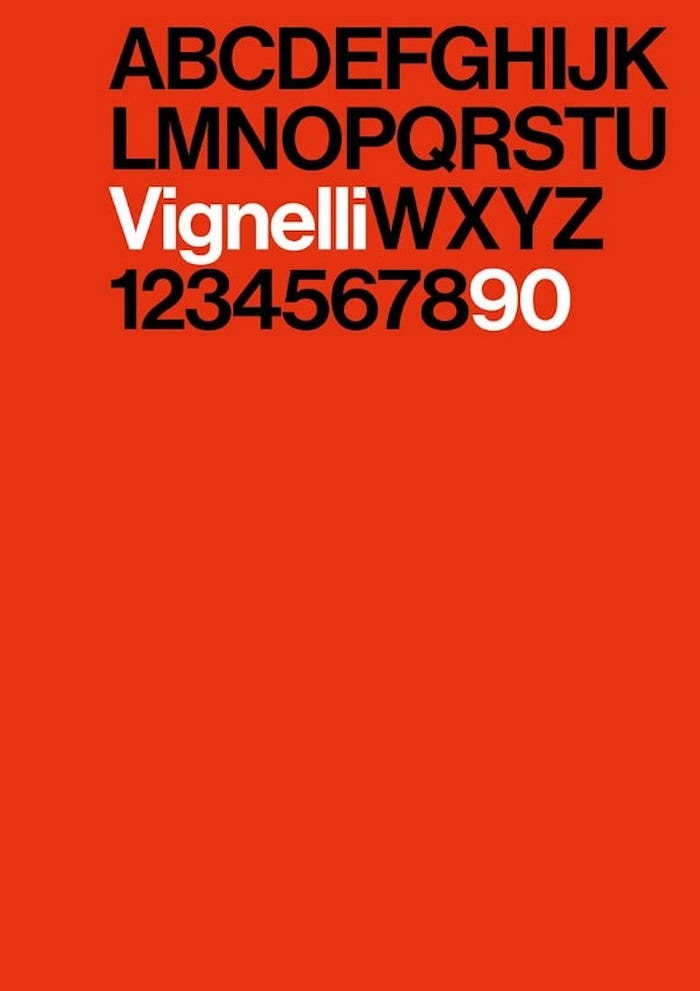

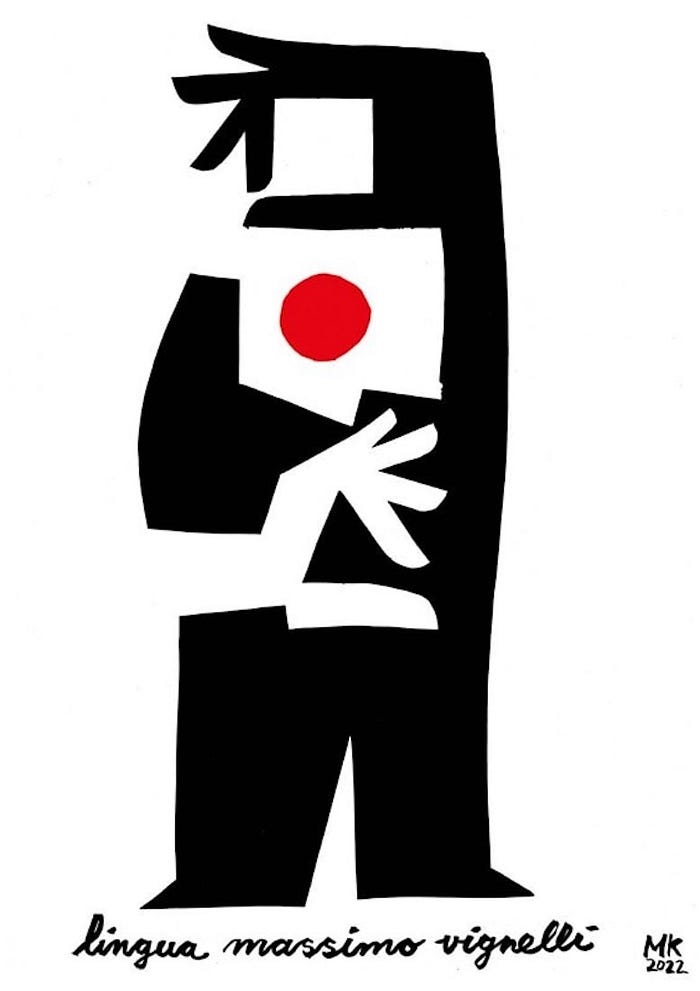

“Design is one,” Massimo Vignelli used to say; “knowing how to design is a mental process, regardless of the medium and destination.”

“Vignelli90 is an initiative to celebrate the 90th anniversary of the great Italian graphic designer, who died in 2014. […]

Among the [250] posters, Vignelli’s favourite typeface, Helvetica, is frequently used. He enjoyed reproducing it by hand with the utmost precision during his train journeys with Bob Noorda. Therefore, there are flat colours and very accurate grids. And, of course, there are several new interpretations of the much disputed (and legendary) New York Subway Map, with its unmistakable 45 and 90 degree slanted lines.”

Via: 250 online posters to celebrate the 90th anniversary of Massimo Vignelli (Domus)

“If anybody had the capacity to understand The Red Studio, it was Gertrude Stein, the avant-garde author who was also an avid collector of Matisse’s art. As Matisse noted in a letter to [Sergei Shchukin ‡] — after understatedly describing The Red Studio as ‘surprising at first sight’ — ‘Mme. Stein finds it the most musical of my paintings.’ Perhaps following this lead, Matisse explained that the Venetian red ‘serves as a harmonic link between the green of a nasturtium branch [,] the warm blacks of a border of a Persian tapestry placed above the chest of drawers, the yellow ocher of a statuette around which the nasturtium has grown, enveloping it, the lemon yellow of a rattan chair placed at the right of the painting between a table and a wooden chair, and the blues, pinks, yellows and other greens representing the paintings and other objects placed in my studio.’” — Jonathon Keats in MoMA Shows The Backstory Of A Matisse Masterpiece So Radical It Befuddled The Artist Himself

‡ The Collector: The Story of Sergei Shchukin and His Lost Masterpieces “is a resumé of three Russian studies of Shchukin by Natalya Semenova, who salvaged what she could from the mutilations of Communist censors. André Delocque is Shchukin’s grandson and he has been responsible for the adaptation. And the text has been translated. The book therefore is sometimes jerky and stilted, but the story it tells is magnificent. The heroic relationship between Shchukin and Matisse especially is a revelation in its tender detail: first quizzical meetings, hiatus, first serious purchases, bold commissions, Shchukin getting cold feet, Matisse heartbroken, Shchukin relenting. The poignant aftermath: Shchukin escaping with his family from the Red Terror, exiled in Nice and Paris, but ashamed to renew the relationship now that he was no longer able to buy from the Master,” Duncan Fallowell writes in his book review for The Spectator.