Post scriptum (2022, week 27) — Ideology has poisoned the West, the comeback of Leibnizian optimism, and why do we obey rules?

Post scriptum is a weekly curation of my tweets. It is, in the words of the 16th-century French essayist and philosopher, Michel de Montaigne, “a posy of other men’s flowers and nothing but the thread that binds them is mine own.”

In this week’s Post scriptum: We are living through a dictatorship of ineptitude; how Voltaire’s ‘Candide’ can counter Leibnizian optimism; some rules last and some don’t, yet we cling to them in times of change; critical theory is not synonymous with critical thinking; how smartphones hijack our attention; toleration is an impressive virtue that’s worth reviving; the mementos Ancient Romans bought to commemorate their travels; a refreshing look at Egypt’s ancient pyramids; and, finally, Nora Bateson’s self-portrait.

Ideology has poisoned the West

“The word ‘ideology’ is often used as a synonym for political ideas, a corruption of language that conceals its fundamentally anti-political character,” Jacob Howland writes in Ideology has poisoned the West.

“In the ancient republics of Greece and Rome, primary models for English republicanism and the American Founders, politics was understood to be the collective determination of matters of common concern through public debate. As Aristotle taught, politics consists in the citizenly exercise of logos, the uniquely human power of intelligent speech. While voice registers private feelings — think of animal purrs and yelps — speech reveals what is good and bad, just and unjust, binding us together in the imperfect apprehension of realities greater than our individual selves.”

But according to Howland, “ideology is incapable of treating human beings as participants in a shared life, much less as individuals made in the image of God.” This “became fully apparent in the West only with the onset of Covid. It is now widely understood that the subordination of public life to ostensibly scientific guidance and the effective transfer of sovereignty from the body of citizens to an unelected overclass are fundamentally inconsistent with liberty and individual dignity.”

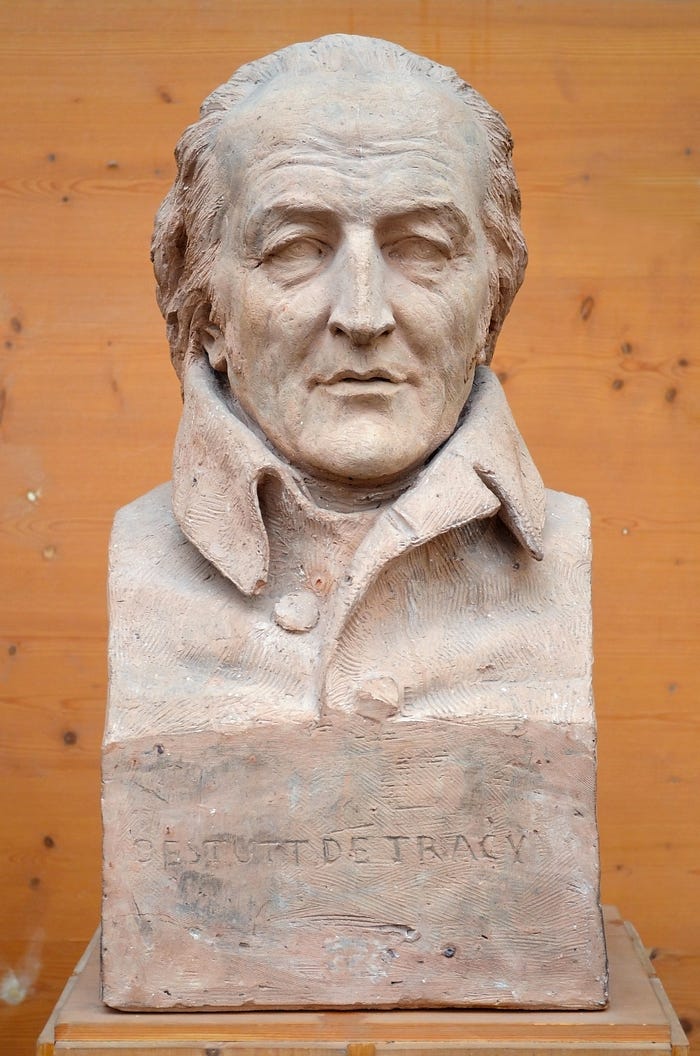

“History is littered with examples of malicious ideological experiments, which in good Baconian form observe nature — in this case, human nature — not ‘free and large,’ but ‘under constraint and vexed… forced out of her natural state, and squeezed and moulded.’ What is to my knowledge the first such experiment occurred after the Athenians were starved into submission at the end of the Peloponnesian War in 404 BCE, when the Spartans installed an oligarchy known as the Thirty. The regime was led by Plato’s aristocratic cousin Critias, who flattered himself with the thought that he was a greater philosopher, statesman, and poet than his illustrious ancestor Solon. In Plato’s dialogue Charmides, Critias advances a vacuous conception of rule by a ‘science of sciences’ — an ancient prototype of idéologie, which Tracy considered to be a ‘theory of theories.’ According to Lysias, an eyewitness, the Thirty proposed ‘to purge the city of unjust men, and to turn the rest of the citizens toward virtue and justice’ by restoring what they claimed was the ancestral Athenian constitution. The oligarchs proceeded to disenfranchise, disarm, and expel large segments of the population and finally to rob and murder their political opponents, putting to death roughly 1,500 Athenians — perhaps 3% of the citizen body.

The ideological tyranny of the Thirty left no lasting mark outside of Athens. This was not the case with Communism and Nazism, which also disenfranchised, robbed, deported, and murdered large numbers of people, but did so with modern managerial and industrial efficiency,” Howland writes.

“Ideology’s most horrific social experiments illustrate several points that apply also to the Totalitarianism Lite of contemporary American life. First, while human beings naturally form social groups for common purposes, ideology assumes that organic associations cannot support a good society, which must be engineered from the top down. This assumption, which no ideological experimentation has ever sustained, makes up in arrogance what it lacks in humility.

Second, ideology abjures persuasion, preferring what Hannah Arendt called ‘mute coercion.’ We see this today in the insistence that certain widely shared opinions that were uncontroversial only a few years ago are so morally illegitimate that they do not deserve a hearing. We see it in the fact that those who publicly voice such opinions are commonly smeared, hounded, denied financial services, investigated, and fired, even by institutions that are publicly committed to diversity of opinion and freedom of speech.

Third, ideology always involves the scapegoating and purging of opponents. Today these primitive religious rituals, enacted within the framework of a secularised and apocalyptic Christianity, include the sanctification of ‘victims’ and the (for now metaphorical) public crucifixion of ‘oppressors.’ Those who are targeted by, or resist, the ideological programme — denounced variously as kulaks, capitalist roaders, vermin, or white supremacists — must, with the exception of a few penitents who are mercifully spared, be decisively defeated in battle with the forces of good. For only then will the earthly salvation of a just and harmonious society be achievable.

In modern times, the template for the use of violence in the name of the highest political and moral ideals was established in the French Revolution. Marching under the banner of liberty, equality, and fraternity, the Revolution took less than five years to move from the 1789 Declaration of the Rights of Man and of the Citizen to the Terror of Robespierre and the genocidal destruction of the Vendée, a French Department where the Revolutionaries responded to a peasant rebellion by slaughtering roughly 15% of the population. The trajectory from utopian fervour to nihilistic bloodshed, traversed over the past century in countries scattered across Europe, Asia, Africa, and Latin America, is unsurprising. One could hardly expect a programme of radical social transformation that demonises its opponents to be free of bloodshed.

Anyone who thinks that the United States could not descend into similarly horrifying violence is deluded. Ideology is a highly communicable social contagion that infects people who are morally immunocompromised, and today it poses a far greater threat to human beings than any merely biological virus. It always attracts thugs, sadists, and those who lust for power, groups that, once revolutionary fervour gives way to dictatorship, always outnumber true believers. But it also exploits the universal human longing for social validation and fear of being cast out. These risk factors are exponentially amplified by the tribalising social and news-media feedback loops that now fill the vacuum left by a permanent moral order, inherent in nature or revealed by God — notions that, owing to the seductions of technology, were arguably doomed at modernity’s inception.”

The comeback of Leibnizian optimism

“It is demonstrable that things cannot be otherwise than as they are; for as all things have been created for some end, they must necessarily be created for the best end. Observe, for instance, the nose is formed for spectacles, therefore we wear spectacles.” — Pangloss in Voltaire’s novella Candide, or The Optimist

“The simplest form of Leibnizian optimism – the belief that despite not feeling like it, we live in the best of the possible worlds – is what allows us to keep endorsing the same structures and justifying the evil that results from them as ‘collateral damage,’” Arianna Marchetti writes in The Comeback Of Leibnizian Optimism.

“In the 21st century, Leibnizian optimism has found its finest expression in Western secular democracies — the quintessential best possible world. It doesn’t matter what output we achieve through our systems and institutions or whether they deliver on their promises or not; what we’ve got is still the best that we could possibly have.

On the macro level, the main arguments sustaining the thesis that we live in the best of the possible worlds go like this:

- Democracy might be imperfect but is still the best form of government we have.

- Life in the West is not perfect, but is the best among all other options.

- Our financial system is not perfect but it still works better than any other system.

- We have challenges in our time but it is still the best time to be alive.

By repeating these ‘truths’ like a mantra we are able to feel comforted, pacified, and above all passive in the endless chain of catastrophes that are unfolding right in front of our faces. These, after all, are seen as an unavoidable evil, or rather, the best worst option. Structural evil simply became a metaphysical imperative, a rule of nature, that can only be accepted and embraced,” Marchetti argues.

“So how can we lift these blinders of Leibnizian optimism and bring back some healthy doses of critical reflection and negativity to our lives? How can we claim back our right of real self-determination and free ourselves from the oppressive frameworks that limit our capacity to create something new?”

Marchetti believes that Voltaire, probably the fiercest [critic of Gottfried Leibniz], has some interesting insights to offer when it comes to countering Leibnizian optimism. His novella Candide is “a masterpiece of a novel entirely dedicated to ridiculing Leibniz’s best of the possible worlds argument,” she writes.

“The book ends with Candide’s famous remark: ‘All is well said but we must cultivate our garden.’ This simple sentence has found many different interpretations, from an invitation to focus on immediate actions to an invitation to shield ourselves from the influences of the world. In my understanding, the invitation to cultivate one’s own garden is not an invitation to withdraw from the world and focus on one’s own immediate business, quite the opposite!

A garden requires dedication, effort, care, and constant attention. It demands that we prepare the soil, fertilize it and be responsive to its needs. Just like a garden, our lives require constant attention, without which they will stop bearing fruit. As a garden needs to be cleared of weeds and sod before it can become ready for fertilization, our minds need to be cleared of the debris of years of indoctrination before they can bear fruit. By undertaking a painful process of introspection and self-discovery we can initiate a process of disillusionment and detachment from ideas and worldviews that have become sterile and prevent us from bringing new thoughts to maturity. Clearing our minds of bad weeds and pests is an essential step to welcome new life and thoughts that can in turn propagate and permeate their surroundings.

After clearing our garden we also need to fertilize it and provide the necessary water to maintain its health. Likewise, after we create more space in our minds, we need to nourish them through concrete actions and new experiences that will lead to new thoughts and knowledge as well as a more balanced life experience. Maintaining a healthy garden requires full attention and so do our minds. In a heartbeat a pest could destroy all our work, just as sustained negligence and lack of care can turn our minds dull. It is hard work cultivating our garden, but it is our duty towards ourselves and others.

We can’t have good crops if we don’t cultivate our gardens properly, just as we can’t have a good society if individuals don’t take care of their minds. What kind of solutions can we expect from a society composed of unhealthy individuals? If our decisions have brought us to the brink of environmental collapse, we cannot trust our ability to solve it with the same approaches and old worldviews. We need to go back to cultivating our neglected gardens if we want to find real solutions; nothing good can grow on a wasteland.”

Why do we obey rules?

“Cultures notoriously differ as to the content of their rules, but there is no culture without rules. […] A book about all of these rules would be little short of a history of humanity.” — Lorraine Daston in Rules: A Short History of What We Live By

In Rules: A Short History of What We Live By, the director emerita at the Max Planck Institute for the History of Science, Lorraine Daston, “analyzes rules as diverse as those for making pudding, those for regulating traffic, and those governing the movement of matter in the universe. In considering a series of historic anecdotes and texts, Daston helps us see rules (and their neighbors, such as laws and regulations) through the concepts of thickness and thinness, paradigms and algorithms, failures, and states of exception,” Rivka Galchen writes in Why Do We Obey Rules?

Daston uses the Rule of St. Benedict to “argue that, for much of late antiquity and the Latin Middle Ages, rules were derived from models ‡: the abbot of the monastery, considered to be endowed by God, was to be emulated. But he also enforced the rules, using his godly discretion.

These rules are considered ‘thick’ rules. This is not because there are so many of them, but because they require interpretation, and because examples are given, and because they make room for all sorts of exceptions. One might even say that thick rules are like a thicket — with tendrils and tangles of special cases, some specified, others deduced. A sick monk might be granted more than the daily allotted amount of bread. A thick rule need not be long. In [the Victorian world of Lewis Carroll’s Alice’s Adventures in Wonderland], for example, a thick rule might be ‘Young ladies should always be polite’ — and Alice does her best to interpret and enact this dictum in the ever-changing circumstances of Wonderland.

Thin rules, ideally, apply to all cases uniformly. As Daston puts it, thin rules ‘aspire to be self-sufficient.’ A computer algorithm is an example of a thin rule — long, perhaps, but intended to be deployed without the need of any human thought or intervention. In Wonderland, a thin rule, arguably, would be the Cheshire Cat’s declaration: ‘We’re all mad here.’ The Ten Commandments also tend to be understood as thin — they are to be obeyed always, by everyone — which is part of why the story of Abraham being asked to sacrifice his son Isaac is so opaque, engaging, and sublime.”

It is a mystery, though, why some rules last and others don’t. Daston suggests that rules tend to succeed when they are also norms.

“One needs a good abbot for the Benedictine rules to work. If you let go of the idea that the abbot is endowed by God, then you can ask, Is the abbot using wise discretion, or is his judgment deranged by favoritism or bigotry or self-interest? Daston tells how, in the Western world, ‘willfulness had by the seventeenth century come to be tarred by the same brush as arbitrary caprice.’ This shift contributed to an ideal of different kinds of rules — thinner, even algebraic, rules. Algorithms, which were closely associated with reason, came to be valued as more ideal than error-prone human judgment. As Daston puts it, the ‘dream of unambiguous rules flawlessly followed has always fed upon the special case of calculation, the thinnest rules of all.’

[…]

By the end of Daston’s book, one feels a sense of clarity about how to think about rules, alongside a gentle sense of despair concerning what kinds of rules to hope for. Rules that leave a ruler, or a judge, in charge of interpreting them feel at once humanized and corruptible. Rules that allow no exception seem free of human frailty but alien, and unable to admit properly of complexity. Despair as a response to the ever-present weakness of laws seems intuitively honest, the abbot inside of us might say, and it also scans as accurate to our inner computing algorithm; algorithms are biased toward the quantifiable, and the Tin Man is right to worry that he has no heart.

In the final chapter, Daston discusses the political theorist Carl Schmitt’s definition of sovereignty as ‘the power to decide on the exception,’ and his conviction that the exception — the event or situation that legitimatizes the suspension of the rule of law — could not be represented in any existing legal order. Schmitt, who was eventually disgraced by his enthusiastic participation in the Nazi regime, ‘detested’ the rule of law, and saw the reforms across centuries that narrowed the powers of the sovereign as catastrophic. Daston describes how, historically, sovereignty in Europe has been derived from a mix of ‘divine authority, the patriarchal power of the male head of household over his wife and children, and the power of the conqueror over the vanquished in war.’ She also talks about the rapid collapse of rules that can follow a cataclysm, such as a pandemic or a revolution. These rapid changes can be positive, as envisioned in Boccaccio’s Decameron, or they can be the start of authoritarianism. Unfortunately, Daston’s book feels relevant today, even essential.

Alice’s Wonderland is a place where the only rule is that the rules will keep changing. One offering makes you larger, another makes you small; it’s always teatime because there’s no time; and the rabbit, with his broken watch, is always late. The rules of Wonderland fail to offer what is so beloved about rules, which is the increase of what Daston terms the ‘radius of predictability.’”

This, says Galchen, may not be equivalent to the good. But the predictable is as much a human need as are ruptures from the predictable.

‡ “In ancient Greek, kanon, the word for rule, was connected to the usefully straight and tall giant cane plant, which was used to make measurements. It’s because of this connection that the word became associated both with laws and with the idea of a model — that with which something is compared, but to which it is not meant to be identical. […] Similarly, the Latin term regula connects both to straight planks used for measuring and building and to a model by which others are measured more metaphorically — the ruler of a nation, say. In that more metaphorical case, the ruler may be the source of rules, and possibly exempt from them; alternatively, the ruler can be exemplary, the ideal by which one determines how one ought to be.”

In the margins

“Critical theory is not synonymous with critical thinking. To understand what it is exactly, one must go back to Karl Marx, and his observation that ‘philosophers have merely interpreted the world, and the point is to change it.’ Marx pointed out that philosophy and science had heretofore been descriptive, and what he wanted was a prescriptive approach to scholarship.

Critical theorists of the Frankfurt School argued that traditional ‘theories’ or ways of looking at the world had thus far served the interests of the powerful. Because traditional forms of inquiry were uncritical towards power, they therefore served the powerful, while critical theory, in unmasking powerful interests, helped serve the powerless. All scholarship is political, they said, and by choosing critical theory over traditional forms of scholarship, one chooses to challenge the status quo. When the Frankfurt School were developing their theory, this approach was new and fresh, and was no doubt very useful in mobilising emancipatory civil rights movements across the world. But these liberationist impulses have since ossified into rigid orthodoxies.

On campus, at least in the humanities, critical theory is the new dogma. In critical legal theory, academics focus first on politicising the law, and then prescribing the correct political values that the law should reflect, i.e. the correct attitudes around gender, sexuality, race, the environment and economics. Scholars cited within critical legal theory include Marx, Gramsci, Foucault and Derrida. Critical theory also dominates the study of literature, and other humanities subjects such as international relations are also increasingly influenced by the spectre of the methodology. New fields of study have also opened up such as critical plant studies, which claims that humans occupy a ‘privileged place’ in relation to plant life.

Critical theory is not without its uses. It has proven that as a methodology it is capable of critiquing the dominant power structures of the mid-twentieth century. Arguably, however, the method has dated. Now critical theorists are in a position of dominance. In many humanities departments around the world, theirs is the dominant ideology. In the humanities, feminist, queer and post-colonial approaches of interpretation have become the status quo. Uri Harris, writing for the online magazine Quillette, […] has argued that this predominance of critical theory in the academy opens up challenging and paradoxical situations because, as critical theory becomes more widespread and its adherents more powerful, critical theory must then be turned on itself.

Both the far Right and the far Left are re-emerging across the Western world. It would be reductive and simplistic to place the blame for this development on either conflict theory or critical theory. Likewise, media which draw on erroneous and black-and-white narratives for explanations of complex social phenomena are not solely to blame. Of course the resurgence of left-wing and right-wing populism is influenced by a multiplicity of causes. Unfortunately, however, one of those causes is currently dominant within our institutions of higher education.”

From: What is mistake theory and can it save the humanities?, by Claire Lehmann (Engelsberg Ideas)

“Smartphones are, of course, made to hijack our attention. ‘The apps that make money by taking our attention are designed to interrupt us,’ says [Catherine Price, a science writer and the author of How to Break Up With Your Phone]. ‘I think of notifications as interruptions because that’s what they’re doing.’

For [Oliver Hardt, a professor who studies the neurobiology of memory and forgetting at McGill University in Montreal], phones exploit our biology. ‘A human is a very vulnerable animal and the only reason we are not extinct is that we have a superior brain: to avoid predation and find food, we have had to be really good at being attentive to our environment. Our attention can shift rapidly around and when it does, everything else that was being attended to stops, which is why we can’t multitask. When we focus on something, it’s a survival mechanism: you’re in the savannah or the jungle and you hear a branch cracking, you give your total attention to that — which is useful, it causes a short stress reaction, a slight arousal, and activates the sympathetic nervous system. It optimises your cognitive abilities and sets the body up for fighting or flighting.’ But it’s much less useful now. ‘Now, 30,000 years later, we’re here with that exact brain’ and every phone notification we hear is a twig snapping in the forest, ‘simulating what was important to what we were: a frightened little animal.’”

From: Is your smartphone ruining your memory? A special report on the rise of ‘digital amnesia,’ by Rebecca Seal (The Guardian)

“[The British philosopher Bernard Williams’s] version of toleration is pragmatic rather than principled. What is needed for a tolerant society, he felt, is not only — or even primarily — a single liberal virtue but rather ‘all the resources we can put together’. These include positive elements such as ‘the desire to co-operate and to get on peacefully with one’s fellow citizens and a capacity for seeing how things look to them.’ But most of all, for Williams, it involves a healthy sense of what life looks like when mutual toleration breaks down.

His perspective owes much here to the influence of the 17th-century English philosopher Thomas Hobbes and the 20th-century non-utopian thought of the American philosopher Judith Shklar. What we should focus on, for these thinkers and for Williams, is not achieving the best outcomes but avoiding the worst. What we need to avert is a world of escalating cruelty and tit-for-tat violence. What can inspire toleration, quite sensibly, is fear.

Williams was, as usual, shrewd here. But this pragmatic stance is not without its own risks — risks of a fundamentally moral kind. How much evil can we tolerate in the name of avoiding greater evil? Williams did not think there was any formula or list of moral rules that you could consult for an answer.

The question of whether to tolerate and when to intervene relies inescapably on wise perception and judgment. Williams the realist recognised that force also had to be taken into account. We can desire peace, and we can warn against fanaticism, but sometimes, unavoidably, what is needed is ‘power’ to provide ‘reminders to the more extreme groups that they will have to settle for coexistence.’”

From: Toleration is an impressive virtue that’s worth reviving, by Daniel Callcut (Psyche Ideas)

“We tend to think of souvenirs today as mass-produced and globalised, flattening them through a vocabulary of plastics and ‘Made in China’ labels that can elide their emotional appeal. Yet souvenirs can acquire great sentimental value, despite their mass-produced status. […]

Archaeological evidence from antiquity confirms that souvenirs could hold extraordinary personal significance for Romans, who similarly invested themselves emotionally in their souvenirs. The Puteoli and Baiae flasks have been found in graves, homes, sanctuaries and public bathhouses. They could function as useful vessels and conversation pieces in domestic contexts, yet they also accrued enough affective value to be offered to the gods or taken to the afterlife.

Souvenirs of the Tyche of Antioch similarly could fulfil multiple practical and emotional roles. The bronze figurines, produced far from Syria, likely were not acquired as pilgrimage souvenirs from a visit to Antioch. While they could have been set up in lararia, or household shrines, they could as easily have been displayed as modest artistic replicas, the ancient equivalent of a poster of the Mona Lisa. The glass bottles in the shape of the Tyche probably contained perfume, but their fine preservation suggests they ultimately ended up in burials. In all instances, the Tyche souvenirs offered a vicarious experience of Eutychides’ statue and the city it personified, and allowed people to grasp, in a manner literal as well as figurative, the image of the goddess and her incorporation into spheres of leisure, travel and ritual.

Integrated into people’s lives through these practical affordances and emotional attachments, souvenirs did not simply reflect widely held perceptions of places and monuments. They constructed those perceptions. They broadcast that certain statues and cities were worthier of commemoration than others, that certain parts of a statue or buildings within a city were the appropriate recipients of special attention and fame, and that these landmarks could stand as shared cultural property around the Roman Empire.”

“Consider the Statue of Liberty today. In the absence of reproductions, Frédéric Auguste Bartholdi’s copper statue would, of course, stand materially in New York Harbor — but the Statue of Liberty as an idea, a symbol and an internationally recognisable landmark would not. The monument’s reproduction on posters, postcards, snow globes, figurines and T-shirts renders it iconic of New York and even the US. Eutychides’ bronze statue of Tyche would have existed in the absence of reproductions, but it would have been neither an internationally recognisable personification of Antioch nor a prestigious, sought-after artwork. The ‘original’ statues mean so much, and so widely, because of their souvenir reproductions.

It is not inevitable that we have come to visualise the Statue of Liberty when we think of New York (and vice versa) or that ancient Romans linked Antioch and Eutychides’ statue of Tyche. It is not even inevitable that people around the Roman Empire perceived Rome as the ultimate big city, rather than any number of the empire’s other large, cosmopolitan cities. We often take for granted that, as the post-antique saying goes, all roads lead to Rome, but it was objects such as the London stylus that fashioned Rome as the centre for people who likely would never voyage there and experience it in person. Souvenirs performed the work of transforming these stationary cities, buildings and statues into portable, widely recognisable landmarks.

Roman souvenirs were anything but marginal in the lives of their owners and in the imperial system in which those owners lived. They mediated the meanings of the artworks and monuments that still inform the grand histories of Rome. They force us to confront how the reproduction of places and monuments and the circulation of objects shape how we know the parts of our world that we never see in person — processes still in play today, albeit via different media and on different scales. One cannot look at the Roman material and still think that souvenirs only commemorate travel. They also prime expectations of sites that fuel tourism economies, direct tourist behaviour, and obscure the complex histories and present circumstances of many tourist sites. Souvenirs, whether a magnet of the Statue of Liberty or a bronze figurine of the Tyche of Antioch, may be small and inexpensive, but banal they are not. In our quest to understand how people, ancient and modern, imagine, know and make meaning of places, we cannot afford to overlook them.”

From: Our trip to Antioch, by Maggie Popkin (Aeon Magazine)

“My last visit to the pyramids was almost exactly 10 years ago, right before the Arab Spring revolution began. While Egypt has gone through a torrent of changes over the last decade, political and otherwise, these ancient wonders have remained as majestic and otherworldly as they ever were — though, as Dr. Lehner’s own work regularly demonstrates, there’s still plenty to learn about the structures and the people who made and used them. With his wide-ranging expertise, constant commentary and insider status (I lost track of the sheer number of government officials, other Egyptologists and guides who greeted him throughout the tour), my experience this time around, this past November, was undoubtedly richer.

Seeing the pyramids of Giza again — iconic monuments that thousands of visitors snap photos of every day — was a richer experience for me as a photographer, too. And that was largely because of one unexpected wild card: It rained.

In this part of the world, rainfall is a true rarity; the area generally sees less than an inch each year. And yet ‘bad’ weather often allows for good photography. Streaks of light or interesting cloud cover can allow you to see things in a different way. That can be especially useful when trying to capture locations that are so heavily photographed.

So I considered it a stroke of luck when Mother Nature provided a rarefied dramatic backdrop just as we neared the Bent Pyramid in Dahshur, some 25 miles south of Cairo. This notable pyramid, I learned, is the second built by Sneferu, the founding pharaoh of the Fourth Dynasty of Egypt. (His successor, Khufu, went on to build Giza’s famous Great Pyramid.) Egyptologists now see the Bent Pyramid as a critical step toward the building of a strictly pyramidal tomb.

Mother Nature wasn’t finished with her show yet, either. A heavy dust storm swirled around the Step Pyramid of Djoser, part of the Saqqara necropolis that lies some 19 miles south of Cairo. Masks and scarves were whipped out as we arrived, with some people ducking away to shelter from the opaque wall of airborne sand.

The season of sandstorms, and the winds that cause them, are known as the khamsin, the Arabic word for ‘50,’ referring to the 50 days of potential storms that arrive in late winter or early spring. From my perspective though, seeing Egypt’s most famous ancient treasures under such drama-filled circumstances only made these inimitable structures more otherworldly.”

From: A Refreshing Look at Egypt’s Ancient Pyramids, photographs and text by Tanveer Badal (The New York Times)

“If I were a mathematical formula I would be a chalkboard full of symbols and arrows. No. I would be many such chalkboards.

Or maybe just a crayon writing 1+1=

With no answer. Wondering.” — Nora Bateson, in Self Portrait