Random finds (2017, week 10) — On the cult of the ‘Great White Innovator,’ AI’s PR problem, and the revenge of analog

“I have gathered a posy of other men’s flowers, and nothing but the thread that binds them is mine own.” — Michel de Montaigne

Random finds is a weekly curation of my tweets and a reflection of my curiosity.

On the cult of the ‘Great White Innovator’

“Innovation has become a defining ideology of our time. Be disruptive, move fast, break things! And everyone knows — right? — what innovation looks like,” writes W Patrick McCray in It’s not all lightbulbs.

Innovation, as an infinite progression of advertisements, political campaigns and university incubators tells us, is ‘Always A Very Good Thing.’ But because this isn’t always true, says McCray, “it’s essential to understand how science and technology advances actually happen and affect the world.”

“First, forget all those images that a web search gives. The driving forces of innovation are not mythic isolated geniuses, almost always represented as men, be it Edison or Steve Jobs. That view is at best misleading, the history of technology and science’s version of the ‘Great (White) Man’ approach to history. For instance, Edison almost never worked alone.”

Second, “if we leave the shadow of the cult of the ‘Great White Innovator’ theory of historical change, we can see farther, and deeper.” Seen from Asia or Africa rather than from the West, for example, the modern industrial revolutions look very different. A more global view lets us glimpse a more familiar world in which maintenance, repair, use and re-use, obsolescence and disappearance still dominate, along with the people whose lives and contributions have been marginalised by the narrative of the ‘Great White Innovator.’

“Our prevailing focus on the shock of the technological new often obscures or distorts how we see the old and the preexisting. It’s common to hear how the 19th-century telegraph was the equivalent of today’s internet. In fact, there’s a bestseller about it, The Victorian Internet (1998) by Tom Standage. Except this isn’t true. Sending telegrams 100 years ago was too expensive for most people. For decades, the telegraph was a pricey, elite technology. However, what was innovative for the majority of people c1900 was cheap postage. […] Although hard to imagine today, bureaucrats and business leaders alike spoke about cheap postage in laudatory terms that resemble what we hear for many emerging technologies today. By not seeing these older technologies in the past, we stand in danger of ignoring the value and potential of technologies that exist now in favour of those about to be. We get, for instance, breathless stories about Elon Musk’s Hyperloop and neglect building public transport systems based on existing, proven technologies or even maintaining the ones we have.”

If we maintain a narrow and shallow view of innovation, notions of making (new) stuff too easily predominate, McCray writes. “As Ian Bogost recently noted, the ‘technology’ in the tech sector is typically restricted to computer-related companies such as Apple and Alphabet while the likes of GE, Ford or Chevron are overlooked. This is absurd. Surely Boeing — which makes things — is a ‘tech company,’ as is Amazon, which delivers things using Boeing’s things. Revising our sense of what technology is — or who does innovation — reshapes and improves our understanding of what a technology company is.”

Just as ‘computer’ is a synecdoche for ‘technology,’ Silicon Valley has come to reflect a certain monoculture of thought and expression about technology.

McCray ends his essay for Aeon by saying, “It’s unrealistic to imagine that the international obsession with innovation will change any time soon. Even histories of nation-states are linked to narratives, rightly or wrongly, of political and technological innovation and progress. To be sure, technology and innovation have been central drivers of the US’s economic prosperity, national security and social advancement. The very centrality of innovation, which one could argue has taken on the position of a national mantra, makes a better understanding of how it actually works, and its limitations, vital. Then we can see that continuity and incrementalism are a much more realistic representation of technological change.

At the same time, when we step out of the shadow of innovation, we get new insights about the nature of technological change. By taking this broader perspective, we start to see the complexity of that change in new ways. It’s then we notice the persistent layering of older technologies. We appreciate the essential role of users and maintainers as well as traditional innovators such as Bill Gates, Steve Jobs, and, yes, Bill and Lizzie Ott. We start to see the intangibles — the standards and ideologies that help to create and order technology systems, making them work at least most of the time. We start to see that technological change does not demand that we move fast and break things.”

On AI’s PR problem

Artificial intelligence is often just a fancy name for a computer program, says Ian Bogost in ‘Artificial Intelligence’ Has Become Meaningless.

The AI designation might be warranted in some cases. Autonomous vehicles, for example, don’t quite measure up to R2D2 (or Hal), but they do deploy a combination of sensors, data, and computation to perform the complex work of driving. However, in most cases, the systems making claims to AI aren’t sentient, self-aware, volitional, or even surprising. They’re just software.

Deflationary examples of AI are everywhere. “Coca-Cola reportedly wants to use ‘AI bots’ to ‘crank out ads’ instead of humans. What that means remains mysterious. […] AI has also become a fashion for corporate strategy. […] The 2017 Deloitte Global Human Capital Trends report claims that AI has ‘revolutionized’ the way people work and live, but never cites specifics. Nevertheless, coverage of the report concludes that artificial intelligence is forcing corporate leaders to ‘reconsider some of their core structures.’ And both press and popular discourse sometimes inflate simple features into AI miracles. Last month, for example, Twitter announced service updates to help protect users from low-quality and abusive tweets.” And although these changes amount to little more than additional clauses in database queries, some conclude that Twitter is “constantly working on making its AI smarter.”

Charles Isbell, an AI researcher at Georgia Institute of Technology, suggests two features necessary before a system deserves the name AI. “First, it must learn over time in response to changes in its environment. Fictional robots and cyborgs do this invisibly, by the magic of narrative abstraction. But even a simple machine-learning system like Netflix’s dynamic optimizer, which attempts to improve the quality of compressed video, takes data gathered initially from human viewers and uses it to train an algorithm to make future choices about video transmission.

Isbell’s second feature of true AI: what it learns to do should be interesting enough that it takes humans some effort to learn. It’s a distinction that separates artificial intelligence from mere computational automation. A robot that replaces human workers to assemble automobiles isn’t an artificial intelligence, so much as a machine programmed to automate repetitive work. For Isbell, ‘true’ AI requires that the computer program or machine exhibit self-governance, surprise, and novelty.”

Just like the word ‘algorithm,’ which has become a cultural fetish, the indiscriminate use of the term AI exalts ordinary — and flawed — software services as false idols. Most of the time, when people talk about AI, they are referring to a computer program someone wrote.

“If algorithms aren’t gods, what are they instead? Like metaphors, algorithms are simplifications, or distortions. They are caricatures. They take a complex system from the world and abstract it into processes that capture some of that system’s logic and discard others. And they couple to other processes, machines, and materials that carry out the extra-computational part of their work.” — Ian Bogost in The Cathedral of Computation

Given the incoherence of AI in practice, Stanford computer scientist Jerry Kaplan suggests ‘anthropic computing’ as an alternative — programs meant to behave like or interact with human beings. “For Kaplan,” Bogost writes, “the mythical nature of AI, including the baggage of its adoption in novels, film, and television, makes the term a bogeyman to abandon more than a future to desire.”

Computers trick people all the time. “Not by successfully posing as humans, but by convincing them that they are sufficient alternatives to other tools of human effort. Twitter and Facebook and Google aren’t ‘better’ neighborhood centers, town halls, libraries, or newspapers — they are different ones, run by computers, for better and for worse.” We should address the implications of these services not as totems of otherworldly AI, but “by understanding them as particular implementations of software in corporations.”

“By protecting the exalted status of its science-fictional orthodoxy,” Bogost believes, “AI can remind creators and users of an essential truth: today’s computer systems are nothing special. They are apparatuses made by people, running software made by people, full of the feats and flaws of both.”

“Public discourse about AI has become untethered from reality in part because the field doesn’t have a coherent theory. Without such a theory, people can’t gauge progress in the field, and characterizing advances becomes anyone’s guess. As a result the people we hear from the most are those with the loudest voices rather than those with something substantive to say, and press reports about killer robots go largely unchallenged.” — Jerry Kaplan in AI’s PR Problem

A bit more …

“Analogue demands that you make a decision — to read this one book, write this sentence, take this photo — while digital keeps luring us on with the promise of perfection and infinite choice,” Oliver Burkeman argues in Get real: why analogue refuses to die.

“So, it’s not just that Apple hasn’t got fingerprint recognition (or whatever) right just yet; it’s that the trajectory it’s on — toward a device that does everything, perfectly — is unattainable, and thus doomed never to satisfy.”

“Lurking behind this is the fact that while we tend to believe we live in a ‘materialistic’ society, we are, in the words of sociologist Juliet Schor, ‘not at all material enough.’ In fact, writes David Cain at raptitude.com, ‘We have very low standards for what physical objects we trade our money for, and for the quality of the sensory experiences they provide.’ We live more and more in the world of the abstract — thanks to the internet and the rise of branding, which sells promises of happiness and attractiveness, rather than things themselves. The things themselves are often crap. Cain’s friends mocked him for buying a well-made £50 stapler; but now, he reports, ‘I enjoy every single act of stapling.’ And wouldn’t it be great to be able to say, on your deathbed, that you even savoured the stapling?”

“In an increasingly digital world where physical objects and experiences are being replaced by virtual ones, Mr. Sax concludes, ‘analog gives us the joy of creating and possessing real, tangible things’: the hectic scratch of a fountain pen on the smooth, lined pages of a notebook; the slow magic of a Polaroid photo developing in front of our eyes; the satisfying snap of a newspaper page being turned and folded back; the moment of silence as the arm of an old turntable descends toward a shiny new vinyl disk and the music begins to play.” — Michiko Kakutani in her review of The Revenge of Analog for The New York Times

The rise of algorithms has been relentless, but we need human input in our world of technological innovations.

“By any measure, we have an astounding surplus of reading matter. The more we have, the more we rely on algorithms and automated recommendation systems. Hence the unstoppable march of algorithmic recommendations, machine learning, artificial intelligence and big data into the cultural sphere. Yet this isn’t the end of the story. Search, for example, tells us what we want to know, but can’t help if we don’t already know what we want. Far from disappearing, human curation and sensibilities have a new value in the age of algorithms. Yes, the more we have the more we need automation. But we also increasingly want informed and idiosyncratic selections. Humans are back,” writes Michael Bhaskar, author of Curation: The Power of Selection in a World of Excess, in his article In the age of the algorithm, the human gatekeeper is back.

“Curation can be a clumsy, sometimes maligned word, but with its Latin root curare (to take care of), it captures this irreplaceable human touch. We want to be surprised. We want expertise, distinctive aesthetic judgments, clear expenditure of time and effort. We relish the messy reality of another’s taste and a trusted personal connection. We don’t just want correlations — we want a why, a narrative, which machines can’t provide. Even if we define curation as selecting and arranging, this won’t be left solely to algorithms. Unlike so many sectors experiencing technological disruption, from self-driving cars to automated accountancy, the cultural sphere will always value human choice, the unique perspective.”

“This is where the arts and humanities strike back in a world of machine learning. Here is a new generation of jobs. Information overload and its technology-driven response are one of the great transformations of our time. But amid today’s saturation (and those teetering piles of new books), knowledge and subjective judgment are more valuable than ever. In the words of one Silicon Valley investor, ‘software eats the world.’ Well, software can’t eat human curation. Contrary to myth, traditional gatekeeping roles are here to stay.

What we will see are hybrids: rich blends of human and machine curation that handle huge datasets while going far beyond narrow confines. We now have so much — whether it’s books, songs, films or artworks (let alone data) — that we can’t manage it all alone. We need an ‘algorithmic culture.’ Yet we also need something more than ever: human taste.”

Sam Mowe recently spoke with journalist Elizabeth Kolbert and Buddhist monk Matthieu Ricard about The Urgent Need to Slow Down.

Kolbert’s The Sixth Extinction: An Unnatural History — winner of the Pulitzer Prize for General Nonfiction 2015 — takes an unflinching look at the history of extinction and the different ways that human beings are negatively impacting life on the planet. In Altruism: The Power of Compassion to Change Yourself and the World, Ricard explores global challenges, such as climate change, and argues that compassion and altruism are the keys to creating a better future. Together these books — filled with grief and hope — feel like two sides of a coin, each necessary for understanding what it means to be alive during humanity’s greatest crisis.

[Elizabeth Kolbert] “I think that the idea about slowing down very much gets to the heart of the matter. To the extent that we are a world-altering species — and I do think it’s pretty clear that we’ve been at this project for a very long time — what makes us very destructive, unfortunately, is our capacity to change things on a time scale that is orders of magnitude faster than other creatures can evolve to deal with.

But there is a difference between what we were doing when we were hunting some mastodons and what we’re doing today. Our impact on the planet has been called ‘the great acceleration.’ Becoming aware of our capacity to change the planet could be a good thing and could potentially lead us to reassess a lot of the things we do. However, I try to never say, ‘Things are going to change,’ because I don’t see any evidence of that. But I certainly think that there’s a possibility for change.”

[Matthieu Ricard] “It’s not contradictory to speak of an emergency to slow down. It’s not like you are frantically nervous while slowing down. It’s just that it is time to slow down. All of those terms — slowing down, simplicity, doing more with less — people respond to them by saying, ‘Oh, I’m not going to be able to eat strawberry ice cream anymore.’ They feel bad about that. But, actually, what they miss is that voluntary simplicity that turns out to be a very happy way of life. There have been very many good studies showing that again and again. Tim Kasser studied people with a highly materialistic consumerism mindset. He studied 10,000 people over 20 years and compared them with those who more put value on intrinsic things — quality of relationships, relationship to nature — and he found the high consumer-minded people are less happy. They look for outside pleasures and don’t find relationship satisfaction. Their health is not as good. They have less good friends. They are less concerned about global issues like the environment. They are less empathic. They are more obsessed with debt. So I think we have to realize that we can find joy and happiness and fulfillment without buying a big iPad, then a mini iPad and then a middle-sized iPad.”

In The Black Swan, Nassim Nicholas Taleb writes, “The writer Umberto Eco belongs to that small class of scholars who are encyclopedic, insightful, and nondull. He is the owner of a large personal library (containing thirty thousand books), and separates visitors into two categories: those who react with ‘Wow! Signore professore dottore Eco, what a library you have! How many of these books have you read?’ and the others — a very small minority — who get the point that a private library is not an ego-boosting appendage but a research tool. Read books are far less valuable than unread ones.

The library should contain as much of what you do not know as your financial means, mortgage rates, and the currently tight real-estate market allows you to put there. You will accumulate more knowledge and more books as you grow older, and the growing number of unread books on the shelves will look at you menacingly. Indeed, the more you know, the larger the rows of unread books. Let us call this collection of unread books an antilibrary.

We tend to treat our knowledge as personal property to be protected and defended. It is an ornament that allows us to rise in the pecking order. So this tendency to offend Eco’s library sensibility by focusing on the known is a human bias that extends to our mental operations. People don’t walk around with anti-resumes telling you what they have not studied or experienced (it’s the job of their competitors to do that), but it would be nice if they did.

Just as we need to stand library logic on its head, we will work on standing knowledge itself on its head. Note that the Black Swan comes from our misunderstanding of the likelihood of surprises, those unread books, because we take what we know a little too seriously. Let us call an antischolar — someone who focuses on the unread books, and makes an attempt not to treat his knowledge as a treasure, or even a possession, or even a self-esteem enhancement device — a skeptical empiricist.”

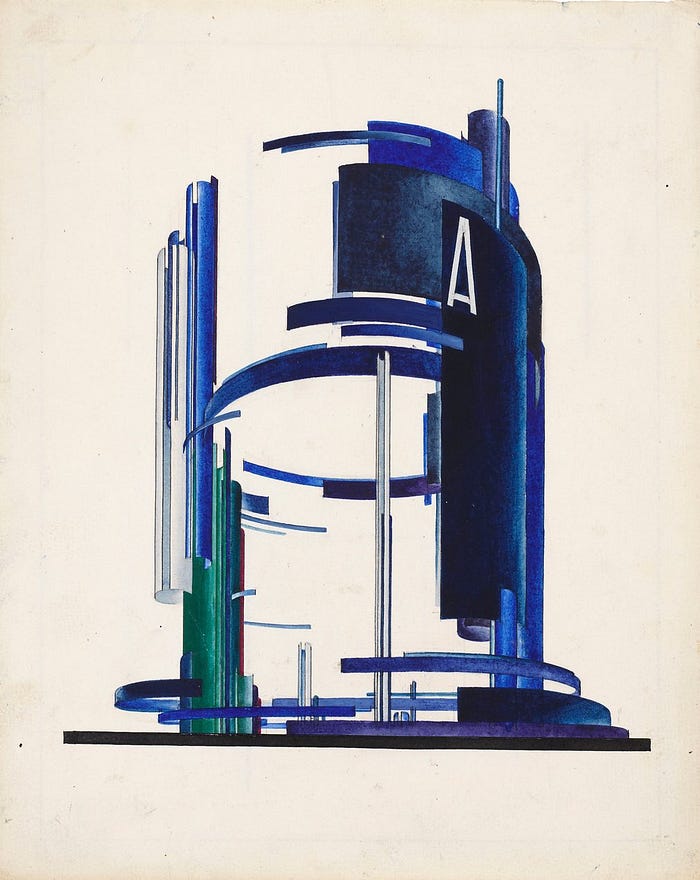

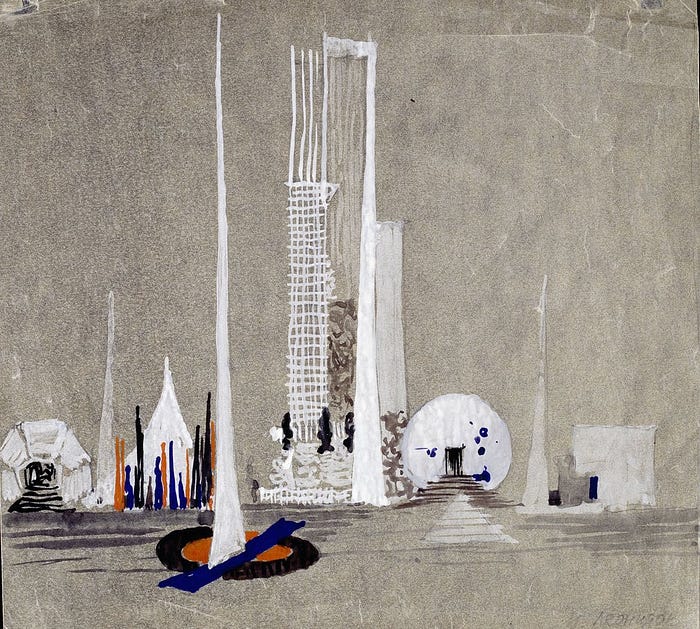

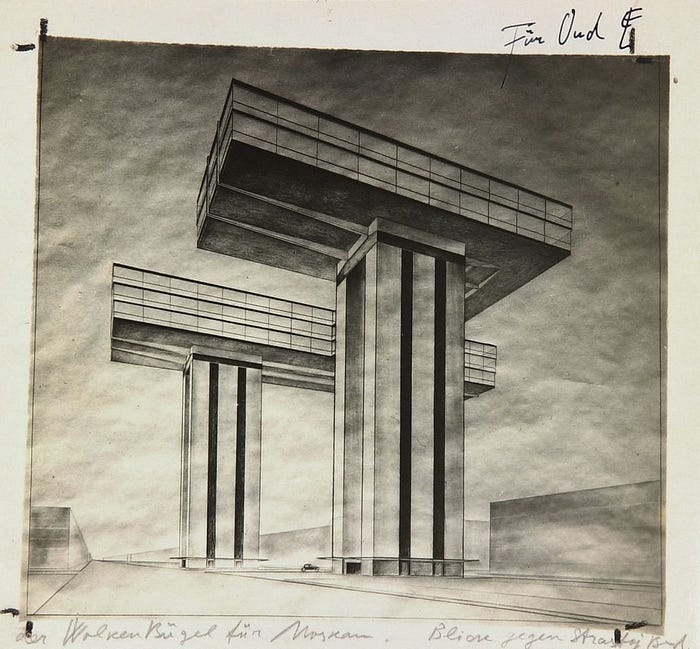

As the centenary of the Russian revolution approaches, the designs of top Soviet architects for the capital’s future reveal their visionary aspirations: Unbuilt Moscow: the ‘new Soviet’ city that never was. The exhibition Imagine Moscow is at the Design Museum in London, until 4 June.

“Maybe we have become so inclined to celebrate the authenticity of all personal evidence that it is now elitist to believe in reason, expertise, and the lessons of history.” — Tom Nichols in The Death of Expertise: The Campaign Against Established Knowledge and Why It Matters