Random finds (2019, week 10) — On creativity and the purpose of humanity, where smart cities proponents go astray, and the ethical consequences of immortality

“I have gathered a posy of other men’s flowers, and nothing but the thread that binds them is mine own.” — Michel de Montaigne

Random finds is a weekly curation of my tweets and, as such, a reflection of my fluid and boundless curiosity.

If you want to know more about my work and how I help leaders and their teams find their way through complexity, ambiguity, paradox & doubt, go to Leadership Confidant — A new and more focused way of thriving on my ‘multitudes’ or visit my ‘uncluttered’ website.

This week: What is the purpose of humanity if machines can learn ingenuity?; the smart enough city; the allure of immortality; Uber and the servant economy; lessons from the fall of a great republic; Zadie Smith and the subtle difference between pleasure and joy; the intangible architecture of Pritzker Prize laureate Arata Isozaki; an architectural space of rest and contemplation; and, finally, why we can’t go on with capitalism’s exploitative mindset.

Creativity and the purpose of humanity

Flashes of inspiration are considered a human gift that drives innovation — but the monopoly is over. A.I. can be programmed to invent and refine ideas and connections. So what’s next?, wonders Marcus Du Sautoy in What’s the purpose of humanity if machines can learn ingenuity?, an edited extract from his new book, The Creativity Code: How AI Is Learning to Write, Paint and Think (HarperCollins, March 2019).

“The value placed on creativity in modern times has led to a range of writers and thinkers trying to articulate what it is, how to stimulate it, and why it is important,” Du Sautoy writes. One of those thinkers is the research professor of cognitive science, Margaret Boden, who has identified three different types of human creativity.

The first is exploratory creativity, which involves taking what is there and exploring its outer edges, extending the limits of what is possible while remaining bound by the rules. “Boden believes that exploration accounts for 97 per cent of human creativity. This is the sort of creativity that is perfect for a computational mechanism that can perform many more calculations than the human brain. But is it enough? When we think of truly original creative acts, we generally imagine something utterly unexpected.”

The second type involves combination. “Think of how an artist might take two completely different constructs and seek to combine them. Often the rules governing one world will suggest an interesting framework for the other.” Not only have the arts benefited greatly from this form of cross-fertilisation; mathematics also revels in this type of creativity, just as it does in exploratory creativity.

There are interesting hints that this sort of creativity might also be perfect for the world of AI. Take an algorithm that plays the blues and combine it with the music of Boulez and you will end up with a strange hybrid composition that might just create a new sound world. Of course, it could also be a dismal cacophony. The coder needs to find two genres that can [algorithmically be fused] in an interesting way.

Margaret Boden’s third form of creativity, transformational creativity, is more mysterious and elusive. “This describes those rare moments that are complete gamechangers. Every art form has these gear shifts. Think of Picasso and Cubism, Schoenberg and atonality, Joyce and modernism. These moments are like phase changes, as when water suddenly goes from a liquid to a solid.”

Transformational moments like these often hinge on changing the rules of the game, or dropping an assumption that previous generations had been working under. “Can a computer initiate this kind of phase change and move us into a new musical or mathematical state?,” Du Sautoy wonders. Algorithms learn how to act based on the data that they interact with. But does this mean that they will always be condemned to producing more of the same?

“Margaret Boden recognises that creativity isn’t just about being Shakespeare or Einstein. She distinguishes between what she calls ‘psychological creativity’ and ‘historical creativity.’ Many of us achieve acts of personal creativity that may be novel to us but historically old news. These are what Boden calls moments of psychological creativity. It is by repeated acts of personal creativity that ultimately one hopes to produce something that is recognised by others as new and of value. While historical creativity is rare, it emerges from encouraging psychological creativity,” Du Sautoy writes.

His own recipe for eliciting creativity in students follows Boden’s three modes of creativity. “Exploration is perhaps the most obvious path. First, understand how we’ve come to the place we are now and then try to push the boundaries just a little bit further. This involves deep immersion in what we have created to date. Out of that deep understanding might emerge something never seen before. It is often important to impress on students that there isn’t very often some big bang that resounds with the act of creation. It is gradual.

As Van Gogh wrote, ‘Great things are not done by impulse but by a series of small things brought together.’

Boden’s second strategy, combinational creativity, is a powerful weapon, I find, in stimulating new ideas. I often encourage students to attend seminars and read papers in subjects that don’t appear to connect with the problem they are tackling. A line of thought from a disparate bit of the mathematical universe might resonate with the problem at hand and stimulate a new idea. Some of the most creative bits of science are happening today at the junctions between the disciplines. The more we can come out of our silos and share our ideas and problems, the more creative we are likely to be. This is where a lot of the low-hanging fruit is to be found.

At first sight transformational creativity seems hard to harness as a strategy. But again the goal is to test the status quo by dropping some of the constraints that have been put in place. Try seeing what happens if we change one of the basic rules we have accepted as part of the fabric of our subject. These are dangerous moments because you can collapse the system, but this brings me to one of the most important ingredients needed to foster creativity — and that is embracing failure.”

Are these strategies that can be written into code?

According to Du Sautoy, they are, especially since algorithms can be built on code that learns from its failures. If he is right, the entire human spectrum of creativity, as defined by Margaret Boden, could fall within the realm of A.I. This, indeed, raises the question: what is the purpose of humanity if machines can learn ingenuity? No doubt, A.I. will have an answer to that one as well…

“Machines can already write music and beat us at games like chess and Go. But the rise of artificial intelligence should inspire hope as well as fear,” says Marcus Du Sautoy in Could robots make us better humans, an interview with John Harris for the Guardian.

“‘These things are already better at empathy than we are,’ he says. ‘AI is able to recognise a false smile as opposed to a genuine smile better than a human can. And if we’re worried about a dystopian future, we should want an empathic AI — an AI that understands what it’s like to be human. And, conversely, we need to understand how it’s working — because it’s making decisions about our lives.’

This is one of his arguments for listening to AI-generated music, studying how computers do maths and, even if they are awful travesties, gazing at digitally produced paintings: to understand how advanced machines work at the deepest level, in order to make sure we know everything about the technology that is now built into our lives. It’s also, he says, the best case for reading his book: ‘We think we’re in control, and at the moment, we’re not. And unless we learn the ways that we’re being pushed and pulled around by algorithms, we’re going to be at their mercy.’

Once that happens, he suggests, perhaps humans and machines can move smoothly into a shared future as bright as his shoes. ‘There’s actually a lot to be hopeful about,’ says Du Sautoy, before he picks up his yellow pad, briefly stares at all those shapes and equations, and prepares to get back to work.”

In The AI-Art Gold Rush Is Here, Ian Bogost wonders whether A.I. will reinvent art or destroy it.

“The results are striking and strange, although calling them a new artistic style might be a stretch. They’re more like credible takes on visual abstraction. The images in the show, which were produced based on training sets of Renaissance portraits and skulls, are more figurative, and fairly disturbing. Their gallery placards name them dukes, earls, queens, and the like, although they depict no actual people — instead, human-like figures, their features smeared and contorted yet still legible as portraiture. Faceless Portrait of a Merchant, for example, depicts a torso that might also read as the front legs and rear haunches of a hound. Atop it, a fleshy orb comes across as a head. The whole scene is rippled by the machine-learning algorithm, in the way of so many computer-generated artworks,” Bogost writes.

Where smart cities proponents go astray

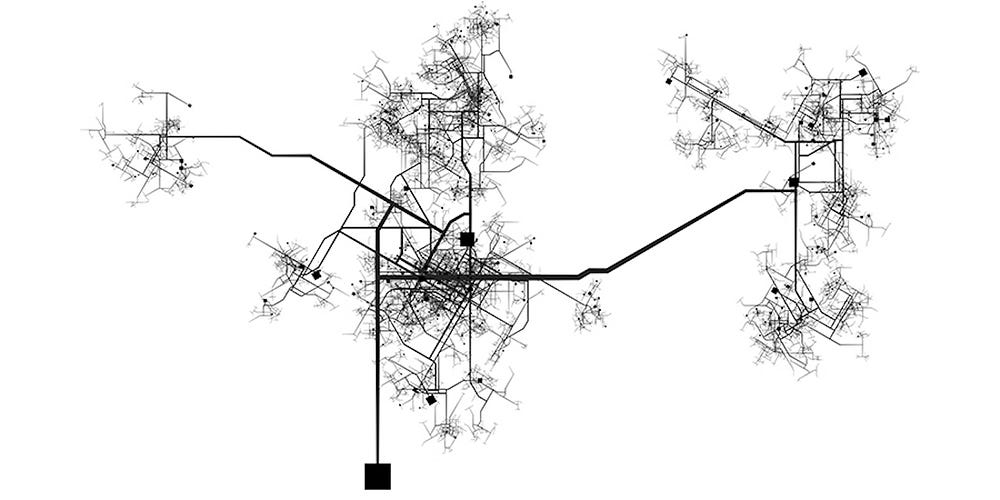

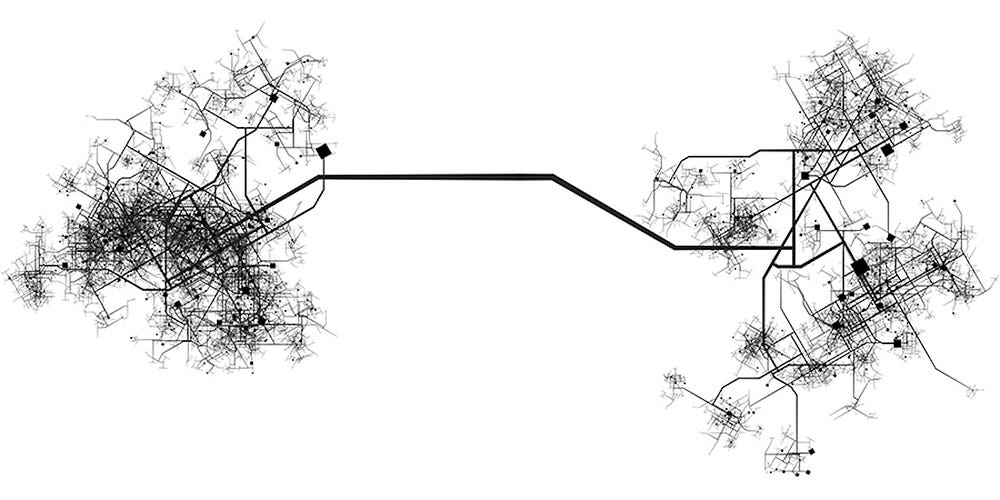

One of the smart city’s most alluring features is its promise of innovation; its use of cutting-edge technology to transform municipal operations, writes Ben Green in Cities Are Not Technology Problems: What Smart Cities Companies Get Wrong, an excerpt of his forthcoming book The Smart Enough City (MIT Press, 2019).

“Like efficiency, innovation possesses the nebulous appeal of being both neutral and optimal, which is difficult to oppose. After all, who would want her city to stagnate rather than innovate?

There is little doubt that cities can benefit from new ideas, policies, practices, and tools that make data easier to interpret and access. Where smart-city proponents such as Alphabet’s Sidewalk Labs go astray, however, is when they equate innovation with technology.”

This perspective is deeply misguided, says Green. Technological innovation in cities is “a matter of deploying technology in conjunction with non-technical change and expertise. This requires data scientists to reach out beyond the realm of databases and analytics to access as much contextual knowledge as possible.”

“Technology also cannot provide answers — or even questions — on its own: Cities must first determine what to prioritize (a clearly political task) and then deploy data and algorithms to assess and improve their performance. Wired famously promised that “the data deluge makes the scientific method obsolete” and represents “the end of theory,” but in today’s age of seemingly endless data, theory matters more than ever. In the past, when they collected minimal data and had little capacity for analytics, cities had few choices about how to utilize data. Now, however, cities collect extensive data and can deploy cutting-edge analytics to make sense of it all. The magnitude of possible analyses and applications facing them is overwhelming. Without a thorough grounding in urban policy and program evaluation, cities will be bogged down by asking the wrong questions and chasing the wrong answers,” Green argues.

According to Joy Bonaguro, San Francisco’s former chief data officer, “The key barrier to data science is good questions. When we hire data scientists, I really want someone who does not want to just be a machine learning jockey. We need someone who is comfortable and happy to use a range of techniques. A lot of our problems aren’t machine learning problems.”

This is also the core message of Green’s forthcoming book, The Smart Enough City. Cities are not technology problems, and technology cannot solve many of today’s most pressing urban challenges, he argues. “Cities don’t need fancy new technology — they need us to ask the right questions, understand the issues that residents face, and think creatively about how to address those problems. Sometimes technology can aid these efforts, but technology cannot provide solutions on its own.”

According to Bruce Sterling in Stop Saying ‘Smart Cities,’ there is no such thing as a ‘smart city.’ “The term ‘smart city’ is interesting yet not important, because nobody defines it. ‘Smart’ is a snazzy political label used by a modern alliance of leftist urbanites and tech industrialists,” he writes. “[The] cities of the future won’t be ‘smart,’ or well-engineered, cleverly designed, just, clean, fair, green, sustainable, safe, healthy, affordable, or resilient. They won’t have any particularly higher ethical values of liberty, equality, or fraternity, either. The future smart city will be the internet, the mobile cloud, and a lot of weird paste-on gadgetry, deployed by City Hall, mostly for the sake of making towns more attractive to capital.”

“If you look at where the money goes (always a good idea), it’s not clear that the “smart city” is really about digitizing cities. Smart cities are a generational civil war within an urban world that’s already digitized. It’s the process of the new big-money, post-internet crowd, Google, Apple, Facebook, Amazon, Microsoft et al, disrupting your uncle’s industrial computer companies, the old-school machinery guys who ran the city infrastructures, Honeywell, IBM, General Electric. It’s a land grab for the command and control systems that were mostly already there.

[…]

‘Smart cities’ merely want to be perceived as smart, when what they actually need is quite different. Cities need to be rich, powerful, and culturally persuasive, with the means, motive, and opportunity to manage their own affairs. That’s not at all a novel situation for a city. ‘Smartness’ is just today’s means to this well-established end.

The future prospects of city life may seem strange or dreadful, but they’re surely not so dreadful as traditional rural life. All over the planet, villagers and farmers are rushing headlong into cities. Even nations so placid, calm, and prosperous as the old Axis allies of Germany, Japan, and Italy have strange, depopulated rural landscapes now. People outside the cities vote with their feet; they check in, and they don’t leave. The lure of cities is that powerful. They may be dumb, blind, thorny, crooked, congested, filthy, and seething with social injustice, but boy are they strong.”

The ethical consequences of immortality

“I welcomed him generously and fed him, and promised to make him immortal and un-aging. But since no god can escape or deny the will of Zeus the aegis bearer, let him go, if Zeus so orders and commands it, let him sail the restless sea. But I will not convey him, having no oared ship, and no crew, to send him off over the wide sea’s back. Yet I’ll cheerfully advise him, and openly, so he may get back safe to his native land.” — Calypso to Hermes when he delivers Zeus’ request to send Odysseus swiftly on his way (Homer, The Odyssey, Book V:92–147, Hermes explains his mission)

“Immortality has gone secular. Unhooked from the realm of gods and angels, it’s now the subject of serious investment — both intellectual and financial — by philosophers, scientists and the Silicon Valley set. Several hundred people have already chosen to be ‘cryopreserved’ in preference to simply dying, as they wait for science to catch up and give them a second shot at life,” Adrian Rorheim and Francesca Minerva write in What are the ethical consequences of immortality technology?

But if we treat death as a problem, what are the ethical implications of the highly speculative ‘solutions’ being mooted?

We don’t yet have the means of achieving human immortality, nor is it clear that we ever will. But two hypothetical options have so far attracted the most interest and attention: rejuvenation technology and mind uploading.

“Like a futuristic fountain of youth, rejuvenation promises to remove and reverse the damage of ageing at the cellular level. Gerontologists such as Aubrey de Grey argue that growing old is a disease that we can circumvent by having our cells replaced or repaired at regular intervals. Practically speaking, this might mean that every few years, you would visit a rejuvenation clinic. Doctors would not only remove infected, cancerous or otherwise unhealthy cells, but also induce healthy ones to regenerate more effectively and remove accumulated waste products. This deep makeover would ‘turn back the clock’ on your body, leaving you physiologically younger than your actual age. You would, however, remain just as vulnerable to death from acute trauma — that is from injury and poisoning, whether accidental or not — as you were before,” Rorheim and Minerva write.

“The other option would be mind uploading, in which your brain is digitally scanned and copied onto a computer. This method presupposes that consciousness is akin to software running on some kind of organic hard-disk — that what makes you you is the sum total of the information stored in the brain’s operations, and therefore it should be possible to migrate the self onto a different physical substrate or platform. This remains a highly controversial stance. However, let’s leave aside for now the question of where ‘you’ really reside, and play with the idea that it might be possible to replicate the brain in digital form one day.”

Rejuvenation seems like a fairly low-risk solution. It essentially extends and improves your body’s inherent ability to take care of itself. However, to have your one shot at eternity, you will need to avoid any risk of physical harm, making you among the most anxious people in history.

Mind uploading, by contrast, resembles the backup of files on external drives or in the cloud. “[Your] uploaded mind could be copied innumerable times and backed up in secure locations, making it unlikely that any natural or man-made disaster could destroy all of your copies.” This comes tantalisingly close to true immortality.

“Despite this advantage, mind uploading presents some difficult ethical issues. Some philosophers, such as David Chalmers, think there is a possibility that your upload would appear functionally identical to your old self without having any conscious experience of the world. You’d be more of a zombie than a person, let alone you. Others, such as Daniel Dennett, have argued that this would not be a problem. Since you are reducible to the processes and content of your brain, a functionally identical copy of it — no matter the substrate on which it runs — could not possibly yield anything other than you.

What’s more, we cannot predict what the actual upload would feel like to the mind being transferred. Would you experience some sort of intermediate break after the transfer, or something else altogether? What if the whole process, including your very existence as a digital being, is so qualitatively different from biological existence as to make you utterly terrified or even catatonic? If so, what if you can’t communicate to outsiders or switch yourself off? In this case, your immortality would amount to more of a curse than a blessing. Death might not be so bad after all, but unfortunately it might no longer be an option.

Another problem arises with the prospect of copying your uploaded mind and running the copy simultaneously with the original. One popular position in philosophy is that the youness of you depends on remaining a singular person — meaning that a ‘fission’ of your identity would be equivalent to death. That is to say: if you were to branch into you1 and you2, then you’d cease to exist as you, leaving you dead to all intents and purposes. Some thinkers, such as the late Derek Parfit, have argued that while you might not survive fission, as long as each new version of you has an unbroken connection to the original, this is just as good as ordinary survival.”

Which option is more ethically fraught?

In the authors’ view, rejuvenation is probably a less problematic choice. “Yes, vanquishing death for the entire human species would greatly exacerbate our existing problems of overpopulation and inequality — but the problems would at least be reasonably familiar,” they argue. Mind uploading, however, would open up a plethora of completely new and unfamiliar ethical quandaries.

“Uploaded minds might constitute a radically new sphere of moral agency. For example, we often consider cognitive capacities to be relevant to an agent’s moral status (one reason that we attribute a higher moral status to humans than to mosquitoes). But it would be difficult to grasp the cognitive capacities of minds that can be enhanced by faster computers and communicate with each other at the speed of light, since this would make them incomparably smarter than the smartest biological human. As the economist Robin Hanson argued in The Age of Em (2016), we would therefore need to find fair ways of regulating the interactions between and within the old and new domains — that is, between humans and brain uploads, and between the uploads themselves. What’s more, the astonishingly rapid development of digital systems means that we might have very little time to decide how to implement even minimal regulations,” Minerva and Rorheim write.

As for the personal, practical consequences of your choice of immortality, “Assuming you somehow make it to a future in which rejuvenation and brain uploading are available, your decision seems to depend on how much risk — and what kinds of risks — you’re willing to assume. Rejuvenation seems like the most business-as-usual option, although it threatens to make you even more protective of your fragile physical body. Uploading would make it much more difficult for your mind to be destroyed, at least in practical terms, but it’s not clear whether you would survive in any meaningful sense if you were copied several times over. This is entirely uncharted territory with risks far worse than what you’d face with rejuvenation. Nevertheless, the prospect of being freed from our mortal shackles is undeniably alluring — and if it’s ever an option, one way or another, many people will probably conclude that it outweighs the dangers.”

Alluring or not, the British philosopher Julian Baggini believes that the dream of immortality would betray what it means to be human. Besides, “eternal life would be deathly dull,” he warns those who are seeking to live for ever.

“To even imagine eternal life,” Baggini writes, “we have to assume that we are the kinds of creatures who could persist indefinitely. But contemporary philosophers, neuroscientists, psychologists and the early Buddhists all agree that the self is in constant flux, lacking a permanent, unchanging essence. Put simply, there is no thing that could survive indefinitely. Take this seriously and you can see how the idea of living for ever is incoherent. If your body could be kept going for a thousand years, in what sense would ‘the you’ that exists now still be around then? It would be more like a descendant than it would a continuation of you. I sometimes find it hard to identify with my teenage self, and that was less than 40 years ago. If I change, I eventually become someone else. If I don’t, life becomes stagnant and loses its direction. Even if we could survive for hundreds of years, focusing too much on the future always risks neglecting the present. There is a very real sense in which we only ever exist in the here and now. Being fully alive requires being in that present as fully as possible. Dreams of eternal life interfere with making the most of the reality of temporal life.”

According to Baggini, we need to accept that our mortality is a necessary part of being embodied beings who live in time. But this doesn’t mean we have to romanticise death or, like Plato and the Stoics, consider it to be no bad thing at all. “On this, Aristotle was characteristically sensible […]. The more we live life well, the more we ‘will be distressed at the thought of death.’ When you appreciate that ‘life is supremely worth living’ you know what a grievous loss it is when that life comes to an end. Living for ever may be a terrible fate but living a lot longer in good health sounds like a wonderful one.”

Baggini’s attitude to death is therefore similar to Augustine’s attitude towards chastity. “Yes, I want to be mortal, but please — not yet.”

From gene therapy and fad diets to cryonically frozen corpses, many still hope to find a way to live forever. Some scientists are starting to think death might be reversible. But Heidegger famously thought life’s transience gave it meaning. Does our fear of death prevent us from living fully? Could we enhance experience by embracing its end? Or can science one day remove death’s shadow, without compromising the meaning and quality of life?

In Living Forever, recorded at the Institute of Art and Ideas’ annual philosophy and music festival HowTheLightGetsIn, philosopher and author of Post-human Ethics Patricia MacCormack, Oxford transhumanist Anders Sandberg, and author Janne Teller debate cryonics, death and where life gets its meaning.

And also this …

Since Uber was born in March 2009, “its name became a shorthand for [a] new kind of business: Uber for laundry; Uber for groceries; Uber for dog walking; Uber for (checks notes) cookies. Larger transformations swirled around — the gig economy, the on-demand economy — but the trend was most easily summed up by the way so many starry-eyed founders pitched their company: Uber for X,” writes Alexis Madrigal in The Servant Economy.

“As a group, all of these companies have brought hundreds of thousands of people into new work arrangements that are more than a gig but less than a job. They’ve rearranged the way people get basic tasks done, and they’ve wired those in local industries — handymen, house cleaners, dog walkers, dry cleaners — into the tech- and capital-rich global economy. These people are now submitting to a new middleman, who they know controls the customer relationship and will eventually have to take a big cut, as Uber drivers would be happy to tell them. And because the ideas themselves are not rocket science, the competition has been fierce. […] To drive faster growth, they have to charge customers less (increasing demand) and pay workers more (increasing supply), then fill the gap with venture-capital funding.”

Because these ‘Uber for X’ companies have done so much (upended labor markets, changed industries, rewritten the definition of a job), it is worth asking: for what, exactly?

“It’s not hard to look around the world and see all those zeroes of capital going into dog-walking companies and wonder: Is this really the best and highest use of the Silicon Valley innovation ecosystem? In the 10 years since Uber launched, phones haven’t changed all that much. The world’s most dominant social network became Facebook in 2009, and in 2019, it is still Facebook. Google is still Google, even if it is called Alphabet,” Madrigal writes.

Politically, however, the world is night and day compared with ten years ago, and “[in] that context, these apps take on a strange pall. The haves and the have-nots might be given new names: the demanding and the on-demand. These apps concretize the wild differences that the global economy currently assigns to the value of different kinds of labor. Some people’s time and effort are worth hundreds of times less than other people’s. The widening gap between the new American aristocracy and everyone else is what drives both the supply and demand of Uber-for-X companies.”

Although the inequalities of capitalist economies have been around for centuries, “[what] the combined efforts of the Uber-for-X companies created is a new form of servant, one distributed through complex markets to thousands of different people. It was Uber, after all, that launched with the idea of becoming ‘everyone’s private driver,’ a chauffeur for all.

An unkind summary, then, of the past half decade of the consumer internet: Venture capitalists have subsidized the creation of platforms for low-paying work that deliver on-demand servant services to rich people, while subjecting all parties to increased surveillance.

These platforms may unlock new potentials within our cities and lives. They’ve definitely generated huge fortunes for a very small number of people. But mostly, they’ve served to make our lives marginally more convenient than they were before. Like so many other parts of the world tech has built, the societal trade-off, when fully calculated, seems as likely to fall in the red as in the black.”

The Economist published a review of Mortal Republic: How Rome Fell into Tyranny by Edward Watts, entitled Lessons from the fall of a great republic.

“Shakespeare missed a trick. His version of Julius Caesar’s funeral does, admittedly, have its moments. But he might have done even better had he read his Appian. For while the Bard’s version musters oratorical verve, the historian’s offers a coup de théâtre, complete with the astute use of props, sightlines and stagecraft.

Before the funeral, well aware that Caesar’s corpse would be obscured by the throng, a wax cast of the body was prepared, with each of the assassins’ blows marked on it clearly. This was then erected above Caesar’s bier. As the Roman people filled the Forum, a mechanical device rotated the model slowly, revealing the 23 ‘gaping’ wounds to everyone. The crowd, as they say, went wild.

Caesar’s reign — and its bloody end and bloodier aftermath — would later come to be seen as a turning point in the history of Rome. And indeed it was, but as Edward Watts points out in Mortal Republic, the Republic had been in its death throes for decades. The decline was caused less by gaping wounds than gaping inequality, and by leaders unable or unwilling to remedy it. If that syndrome sounds familiar, it is meant to. Mr Watts says inquiries about how antiquity can illuminate the ‘occasionally alarming political realities of our world’ prompted his reflections.”

“This ominously titled book is his response, furnished with such nudgingly apposite chapters as The Politics of Frustration and The Republic of the Mediocre. His gambit isn’t new: the classical world is often used as a lens through which to examine modernity. Want to understand China? Read Thucydides. Need to know about Afghanistan? Best study Plutarch. This trick can easily go awry: the past was not just the present in togas, as some historians would do well to remember. But Mr Watts pulls it off deftly.

He begins by taking the reader on a brisk march through Roman history. The Republic’s early citizens were legendarily hardy. In the late third century BC, faced with multiple threats, Rome entered a state Mr Watts describes as ‘an ancient version of total war.’ Two-thirds of the male citizen population between 17 and 30 years old were enrolled in the army, ready to die for Rome. And die they did, cut down in battle after battle like fields of wheat. During one engagement with Hannibal, tens of thousands were killed. The Roman response was to regroup — and win.

Such sturdiness didn’t last. By the second century BC, the formerly united Republic had been split into two factions — not by war but by wealth. On one side was a class of ‘superwealthy Romans,’ enriched by military conquest and growing financial sophistication. They dined off silver plate, ate imported fish, drank vintage wine and holidayed in extravagant Mediterranean villas. One of the most powerful was Crassus, a man who made his fortune in unscrupulous property deals, then used that money to buy political influence.

Yet while some Romans swilled from ornate goblets, the majority drank a more bitter draught. They endured a life of backbreaking work and the knowledge that they would almost certainly end up poorer than their parents. Such a situation could hardly last — and didn’t.

What remains one of the world’s longest-lasting republics fell by the end of the first century BC, to be replaced by autocracy. Rome had defeated its enemies abroad but, argues Mr Watts, it was undone from within by greed and inequality — and by the sort of politicians ‘who breach a republic’s political norms,’ plus ‘citizens who choose not to punish them.’”

In Feel Free, a wide-ranging collection of essays written between 2010 and 2017, Zadie Smith tackles subjects from JG Ballard to Justin Bieber. The essays cover politics, philosophy, art and culture; most have been published before, but reading them together is nonetheless richly rewarding. Smith discusses the process and anxieties of writing and conveys her enjoyment of culture alongside razor-sharp analysis. It is a dazzlingly intelligent collection, but far from intellectually alienating: Smith weaves her ideas around personal memories and pop culture references, creating an intimacy between herself and the reader.

One of the essays in Feel Free is Joy (The New York Review of Books, 2013), in which Smith describes the subtle difference between pleasure and joy. Pleasure, she writes, is relatively easy to find, instant and replicable. For Smith, a pleasurable experience is embodied in a pineapple popsicle from a stand on Washington Square, or the ecstasy she experiences people-watching on the streets of New York City. Joy, on the other hand, is a more tangled experience, one in which, Smith says, we may find no pleasure at all but which we still need in order to live. To make the point, she writes as a final thought:

“… sometimes joy multiplies itself dangerously. Children are the infamous example. Isn’t it bad enough that the beloved, with whom you have experienced genuine joy, will eventually be lost to you? Why add to this nightmare the child, whose loss, if it ever happened, would mean nothing less than your total annihilation? It should be noted that an equally dangerous joy, for many people, is the dog or the cat, relationships with animals being in some sense intensified by guaranteed finitude. You hope to leave this world before your child. You are quite certain your dog will leave before you do. Joy is such a human madness.

The writer Julian Barnes, considering mourning, once said, ‘It hurts just as much as it is worth.’ In fact, it was a friend of his who wrote the line in a letter of condolence, and Julian told it to my husband, who told it to me. For months afterward these words stuck with both of us, so clear and so brutal. It hurts just as much as it is worth. What an arrangement. Why would anyone accept such a crazy deal? Surely if we were sane and reasonable we would every time choose a pleasure over a joy, as animals themselves sensibly do. The end of a pleasure brings no great harm to anyone, after all, and can always be replaced with another of more or less equal worth.”

Joy is something you can lose and never regain. It is painful, vulnerable and more often than not completely lacking in pleasure.

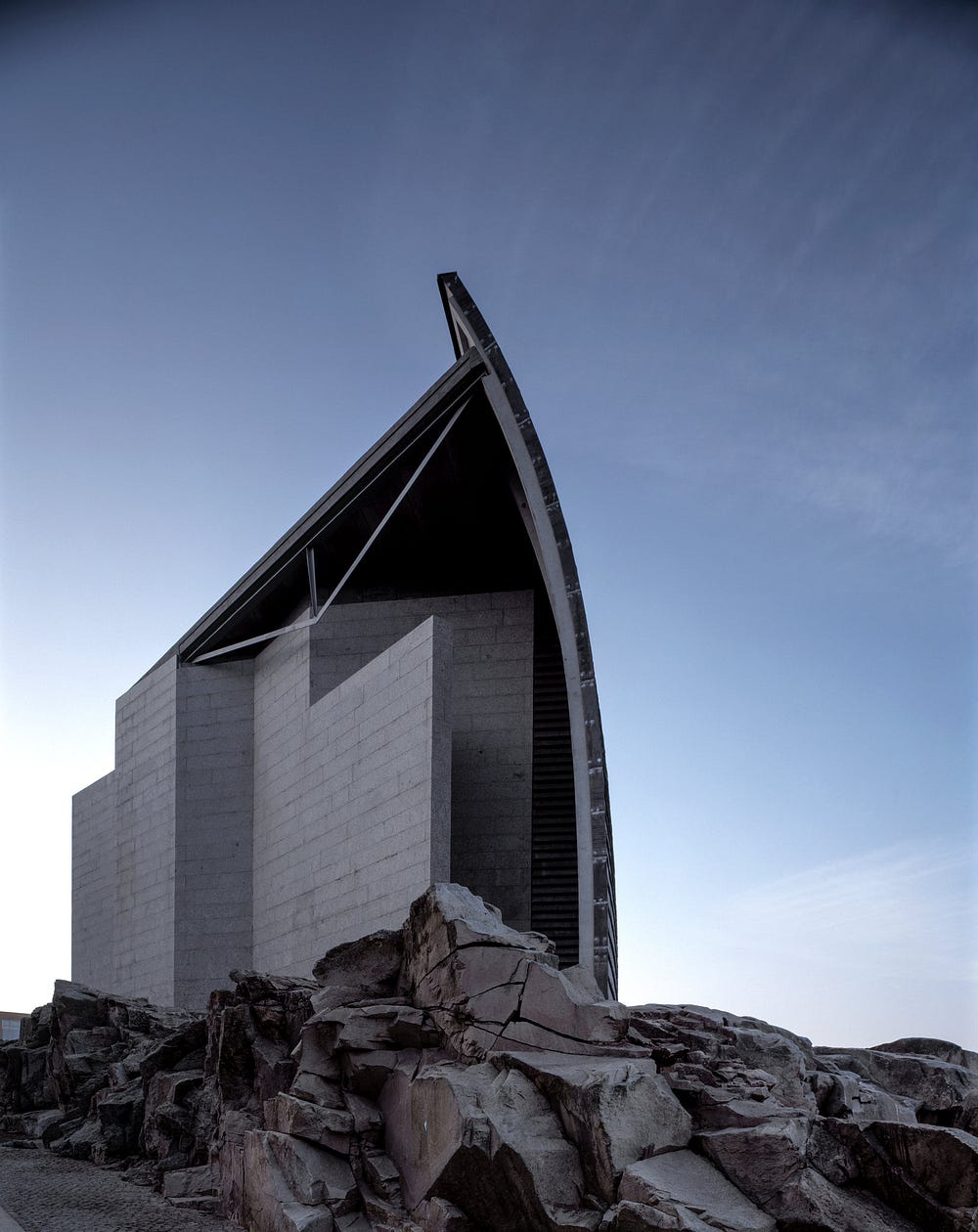

“He has been called the ‘emperor of Japanese architecture’ by his peers and ‘visionary’ by critics. Now, the internationally renowned Japanese architect Arata Isozaki can add yet another tribute: the 2019 Pritzker Architecture Prize,” writes Amy Qin in Pritzker Prize Goes to Arata Isozaki, Designer for a Postwar World.

“Dressed in a mud-dyed silk kimono with a folding fan tucked into his obi and his unruly white shoulder-length hair combed back behind his ears, Mr. Isozaki reflected on a six-decade-long career blending architecture with visual art, poetry, philosophy, theater, writing and design.

‘My concept of architecture is that it is invisible,’ he said. ‘It’s intangible. But I believe it can be felt through the five senses.’

Crucial to Mr. Isozaki’s avant-garde approach to architecture is the Japanese concept of ‘ma,’ signifying the merging of time and space, which was the subject of a traveling exhibition in 1978. [In an interview with PLANE — SITE, Isozaki explores the topics of time, space and existence in architecture.]

‘Like the universe, architecture comes out of nothing, becomes something, and eventually becomes nothing again,’ said Mr. Isozaki. ‘That life cycle from birth to death is a process that I want to showcase.’

His commitment to the ‘art of space’ was in part what led the Pritzker jury to select Mr. Isozaki as its 46th laureate, and the eighth from Japan. He will receive the award at a ceremony in France in May.”

“While his best known projects are in cities, Mr. Isozaki said last week that he was ‘more nostalgic about the rural projects.’ Asked to pick a favorite, he named the Domus Museum (1993–1995) in A Coruña, Spain. Built atop a rocky outcrop by the Bay of Riazor, the museum features a curved slate-clad facade that resembles a sail billowing in the wind.

‘Mr. Isozaki’s is an architecture that thrusts aside the shopworn debate between modernism and postmodernism, for it is both modern and postmodern,’ the critic Paul Goldberger wrote in The Times in 1986. ‘Modern in its reliance on strong, self-assured abstraction, postmodern in the degree to which it feels connected to the larger stream of history.’

In 2017, Mr. Isozaki donated his vast collection of books and quietly moved with his partner, Misa Shin, from Tokyo to Okinawa in search of warmer climes. The couple rented a nondescript apartment with a view of the sea in a peaceful residential neighborhood. The neighbors have no idea that living in the peach-colored walk-up is a bona fide starchitect.”

Contemplation spaces are a regular feature in the work of the London-based architectural designer John Pawson. Nový Dvůr monastery and the St Moritz Church are among his best-known projects.

For Wooden Chapel, Pawson has stacked up 144 tree trunks to create a space of rest and contemplation alongside a new cycle route in southwest Germany. It is considerably simpler than the two projects mentioned above, containing just a single room where passing cyclists can find rest and shelter. But it is still designed to create opportunities for spiritual reflection.

“For me a special commission is one that brings the chance truly to test the thinking, in terms of space and materials, in the purest and most intense ways,” Pawson said. “I felt sure when I first received the invitation to design a chapel on the edge of the forest that here was such a project and so it has proved to be.”

Via Dezeen and designboom

“This century, this age of collapse, urgently calls for us to expand our moral horizons to include all of us. That’s the only way we care about the planet, about inequality, about greed, about everything going wrong — by caring about everyone on it. Too often, being children of the 20th century, we want to have our cake and eat it too. We want to call ourselves good and moral people — but not really care about anyone outside the family, tribe, or nation. That mistake will not work anymore. In this century, if we cannot care for everyone — no one will be cared for. We will stand together, or we will perish apart.” — Umair Haque in (Why) the 21st Century is When Humanity Matures — or Falls Apart