Random finds (2019, week 4) — On the elite charade of changing the world, the moment when we become slaves to the machines, and ‘surveillance capitalism’

“I have gathered a posy of other men’s flowers, and nothing but the thread that binds them is mine own.” — Michel de Montaigne

Random finds is a weekly curation of my tweets and, as such, a reflection of my fluid and boundless curiosity. I am also creating #TheInfiniteDaily, an ∞ of little pieces of wisdom, art, music, books and other things that have made me stop and think.

If you want to know more about my work and how I help leaders and their teams find their way through complexity, ambiguity, paradox & doubt, go to Leadership Confidant — A new and more focused way of thriving on my ‘multitudes’ or visit my new ‘uncluttered’ website.

This week: The elite’s phoney crusade to save the world — without changing anything; will we, as Marvin Minsky predicted, become “pets” to the computers?; tech’s goal to automate us; have we reached the limit of individualism?; why you shouldn’t throw in the day job to follow your dream; technology’s moral implications; how John Ruskin shapes our world; beautiful architecture; we change together; and, finally, curiosity and seeking out new ‘first loves.’

The elite charade of changing the world

“If we want things to stay as they are, things will have to change.” — Tancredi Falconeri, the fictional 19th-century Italian aristocrat from Giuseppe Tomasi di Lampedusa’s novel The Leopard

“Today’s titans of tech and finance want to solve the world’s problems, as long as the solutions never, ever threaten their own wealth and power,” says Anand Giridharadas, the author of Winners Take All: The Elite Charade of Changing the World.

“When the fruits of change have fallen on the US in recent decades, the very fortunate have basketed almost all of them,” Giridharadas writes in The new elite’s phoney crusade to save the world — without changing anything. “That vast numbers of Americans and others in the west have scarcely benefited is not because of a lack of innovation, but because of social arrangements that fail to turn new stuff into better lives.”

“Thus many millions of Americans, on the left and right, feel one thing in common: that the game is rigged against people like them. Perhaps this is why we hear constant condemnation of ‘the system,’ for it is the system that people expect to turn fortuitous developments into societal progress. Instead, the system — in America and across much of the world — has been organised to siphon the gains from innovation upward, such that the fortunes of the world’s billionaires now grow at more than double the pace of everyone else’s, and the top 10% of humanity have come to hold 85% of the planet’s wealth. New data published this week by Oxfam showed that the world’s 2,200 billionaires grew 12% wealthier in 2018, while the bottom half of humanity got 11% poorer. It is no wonder, given these facts, that the voting public in the US (and elsewhere) seems to have turned more resentful and suspicious in recent years, embracing populist movements on the left and right, bringing socialism and nationalism into the centre of political life in a way that once seemed unthinkable, and succumbing to all manner of conspiracy theory and fake news. There is a spreading recognition, on both sides of the ideological divide, that the system is broken, that the system has to change.”

“[In] recent years a great many fortunate Americans have also tried something else, something both laudable and self-serving: they have tried to help by taking ownership of the problem. All around us, the winners in our highly inequitable status quo declare themselves partisans of change. They know the problem, and they want to be part of the solution. Actually, they want to lead the search for solutions. They believe their solutions deserve to be at the forefront of social change. They may join or support movements initiated by ordinary people looking to fix aspects of their society. More often, though, these elites start initiatives of their own, taking on social change as though it were just another stock in their portfolio or corporation to restructure. Because they are in charge of these attempts at social change, the attempts naturally reflect their biases,” Giridharadas argues.

“For the most part, these initiatives are not democratic, nor do they reflect collective problem-solving or universal solutions. Rather, they favour the use of the private sector and its charitable spoils, the market way of looking at things, and the bypassing of government. They reflect a highly influential view that the winners of an unjust status quo — and the tools and mentalities and values that helped them win — are the secret to redressing the injustices. Those at greatest risk of being resented in an age of inequality are thereby recast as our saviours from an age of inequality. Socially minded financiers at Goldman Sachs seek to change the world through ‘win-win’ initiatives such as ‘green bonds’ and ‘impact investing.’ Tech companies such as Uber and Airbnb cast themselves as empowering the poor by allowing them to chauffeur people around or rent out spare rooms. Management consultants and Wall Street brains seek to convince the social sector that they should guide its pursuit of greater equality by assuming board seats and leadership positions.”

“This genre of elites believes and promotes the idea that social change should be pursued principally through the free market and voluntary action, not public life and the law and the reform of the systems that people share in common; that it should be supervised by the winners of capitalism and their allies, and not be antagonistic to their needs; and that the biggest beneficiaries of the status quo should play a leading role in the status quo’s reform.”

Giridharadas calls this ‘MarketWorld’ — “an ascendant power elite defined by the concurrent drives to do well and do good, to change the world while also profiting from the status quo. It consists of enlightened businesspeople and their collaborators in the worlds of charity, academia, media, government and thinktanks. It has its own thinkers, whom it calls ‘thought leaders,’ its own language [they often speak in a language of ‘changing the world’ and ‘making the world a better place’], and even its own territory — including a constantly shifting archipelago of conferences at which its values are reinforced and disseminated and translated into action. MarketWorld is a network and community, but it is also a culture and state of mind.”

One could argue that the elites are doing the best they can. “The world is what it is, the system is what it is, the forces of the age are bigger than anyone can resist, and the most fortunate are helping,” Giridharadas writes. “But there is a darker way of judging what goes on when the elites put themselves in the vanguard of social change: that doing so not only fails to make things better, but also serves to keep things as they are. After all, it takes the edge off of some of the public’s anger at being excluded from progress. It improves the image of the winners. By using private and voluntary half-measures, it crowds out public solutions that would solve problems for everyone, and do so with or without the elite’s blessing. There is no question that the outpouring of elite-led social change in our era does great good and soothes pain and saves lives.”

But how can there be anything wrong with trying to do good? “The answer may be: when the good is an accomplice to even greater, if more invisible, harm. In our era that harm is the concentration of money and power among a small few, who reap from that concentration a near monopoly on the benefits of change. And do-gooding pursued by elites tends not only to leave this concentration untouched, but actually to shore it up. For when elites assume leadership of social change, they are able to reshape what social change is — above all, to present it as something that should never threaten winners. In an age defined by a chasm between those who have power and those who don’t, elites have spread the idea that people must be helped, but only in market-friendly ways that do not upset fundamental power equations. Society should be changed in ways that do not change the underlying economic system that has allowed the winners to win and fostered many of the problems they seek to solve,” Giridharadas writes.

“What is at stake is whether the reform of our common life is led by governments elected by and accountable to the people, or rather by wealthy elites claiming to know our best interests. We must decide whether, in the name of ascendant values such as efficiency and scale, we are willing to allow democratic purpose to be usurped by private actors who often genuinely aspire to improve things but, first things first, seek to protect themselves. […]

Much of what appears to be reform in our time is in fact the defense of stasis. When we see through the myths that foster this misperception, the path to genuine change will come into view. It will once again be possible to improve the world without permission slips from the powerful.”

The moment when we become slaves to the machines

“Going to Facebook, Twitter, Amazon, or Google may seem harmless and may feel like it’s making our life easier, but in reality every time you ‘like’ that photo or type into that search box or order that next Kindle book, all because it’s easy, it’s akin to feeding ‘Audrey’ in Little Shop of Horrors.”

“Sooner than you think, every facet of your life, from the car in your driveway to the lights in your living room, will be ruled by algorithms. The next version of Amazon won’t just suggest which book you should buy next — it will automatically re-stock your fridge with Amazon products that an algorithm has decided you’ll like or need. Sure, it sounds like a utopia to not have to go to the grocery store, but it will also mark the moment when we truly become slaves to the machines,” writes Nick Bilton in “No one is at the controls”: How Facebook, Amazon, and others are turning life into a horrific Bradbury novel.

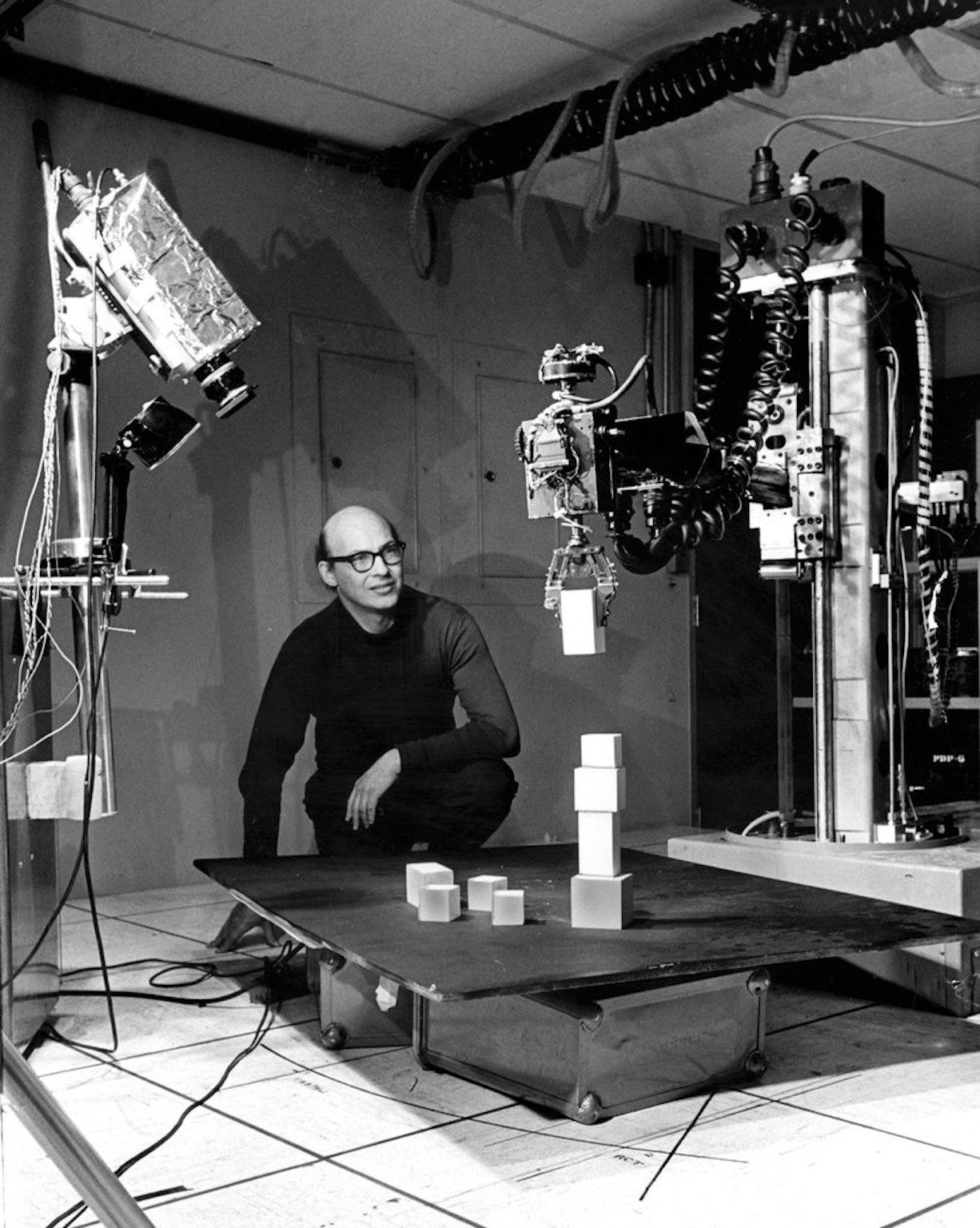

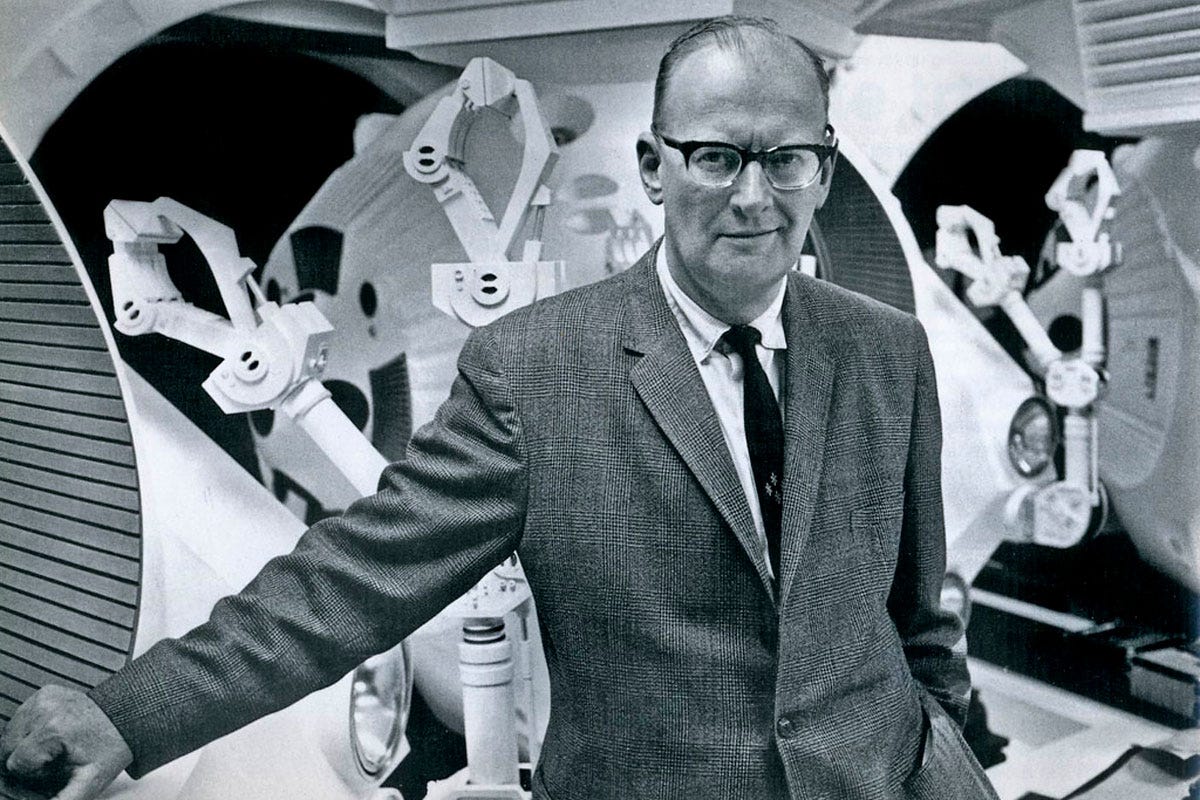

Almost half a century after Marvin Minsky, a mathematician and computer scientist at M.I.T. best known as a founding father of artificial intelligence, posited, “If we’re lucky, the [machines] might decide to keep us as pets,” we are starting to see the outlines of the world Minsky fearfully presaged, one in which the machines really do control us more than we control them, Bilton writes. “Google and Facebook track your every move as you float around the Web, aggregating the most intimate details of your private life for sale to advertisers. Surveillance cameras, some now powered by Amazon, track our wanderings as Alexa, Google Home, and Facebook’s Portal can monitor our conversations. […] At the same time, the randomness of human experience has become constrained. Netflix will recommend a new show you’re guaranteed to love, but at what cost? You’re no longer going to stroll down the wrong aisle in the video store and stumble across an incredible indie film, or pick up a book someone misplaced in the bookstore. Serendipity, once a totem of the tech lingua franca, has now been hacked.”

According to George Dyson, a historian of technology who wrote The 2019 EDGE New Year’s Essay, entitled Childhood’s End, Silicon Valley is “through the looking glass” to the point where tech products take on a life of their own. Take Google’s search engine. It “is no longer a model of human knowledge, it is human knowledge,” Dyson argues. “What began as a mapping of human meaning, now defines human meaning, and has begun to control, rather than simply catalog or index, human thought. No one is at the controls.”

“Each time we mere humans type a query into Google,” Bilton resumes, “we’re altering the results of the algorithm that in turn affects the next person. That might not seem like much of a problem, but when the results control what we do and think, which road we drive down and when, the type of opinions we make based on the news we see, and there is no single person actively defining those results, we’re simply at the mercy of the machine that delivers them to us. The Internet, in other words, has become like a living organism.”

“This, Dyson and countless others argue, is why we should be terrified by these massive companies that currently control the world. Perhaps most prescient is Dyson’s warning about what we have let these massive tech giants become. ‘We imagine that individuals, or individual algorithms, are still behind the curtain somewhere, in control. We are fooling ourselves,’ he writes. ‘The new gatekeepers, by controlling the flow of information, rule a growing sector of the world.’ And yet the companies that do rule the world are no longer in control of the machines they’ve built and the algorithms that have been touched by hundreds of thousands of engineers, none of whom control a single input or output on the platform anymore. The result is a runaway train the size of a continent that has led to things like fake news and Russian bots and Donald J. Trump and Sears going bankrupt and that mom-and-pop store on Main Street going out of business. There is no question that democracy is deteriorating, and technology is hastening that atrophy.”

“In part this is because no single person controls the algorithm anymore — especially the people at the very top of these companies who gain the most financially for how much we use their products and services. There are over a million people working for Google, Amazon, Apple, and Microsoft. While a large number of them are stocking things in warehouses or helping you fix your iPhone, tens of thousands of engineers are re-writing the code that answers all of our questions and desires. It’s almost as if these companies have become too big not to fail society. No single engineer, or even thousands of them, can see every angle of how these platforms are becoming rulers over our own thinking, and what they might be used for. And yet at the same time, they are being used for everything. When I recently asked a former Facebook engineer what it was like working for the social network and if they felt any responsibility for how it was being used nefariously, they replied, ‘You don’t really see it from that perspective. You’re just excited to see two-plus billion people using something you partially coded.’ In other words, I don’t care if the thing I make is bad for the world, just as long as people are using it. Zuckerberg, I’m sure, is more concerned with how many people don’t use his platform, than how he affects the people who do,” Bilton writes.

Can it be stopped, or will we, as Minsky predicted in 1970, become “pets” to the computers?

Bilton believes it can be stopped, but only “[if] we really want it to. The most obvious logic would be to unplug the computers, realize we screwed it all up this time around, and start fresh. But as history has proven over and over, we humans are not big on obvious, or logic. We can, however, take it into our own hands.” Each of us has the ability to stop “feeding the machines.” We could find alternatives for the big tech giants or even “stop using technology as much as we do, and to return to our analog life of getting lost and trying new things, not at the behest of an algorithm. But, we likely won’t. Let’s just hope our new Overlords treat us well and, when they fully take over, they let us sleep curled up at the end of the bed.”

The age of ‘surveillance capitalism’

“‘Surveillance capitalism’ unilaterally claims human experience as free raw material for translation into behavioural data.” – Shoshana Zuboff

Library shelves groan under the weight of books about the impact of digital technology. “Lots of scholars are thinking, researching and writing about this stuff. But they’re like the blind men trying to describe the elephant in the old fable: everyone has only a partial view, and nobody has the whole picture. So our contemporary state of awareness is — as Manuel Castells, the great scholar of cyberspace once put it — one of ‘informed bewilderment,’” Observer tech columnist John Naughton writes. This is why he believes the arrival of Shoshana Zuboff’s new book, The Age of Surveillance Capitalism, a chilling exposé of the business model that underpins the digital world, is such a big event.

In 1988, as one of the first female professors at Harvard Business School to hold an endowed chair, Zuboff published a landmark book, The Age of the Smart Machine: The Future of Work and Power, which “provided the most insightful account up to that time of how digital technology was changing the work of both managers and workers. And then Zuboff appeared to go quiet, though she was clearly incubating something bigger. The first hint of what was to come was a pair of startling essays — one in an academic journal in 2015, the other in a German newspaper in 2016. What these revealed was that she had come up with a new lens through which to view what Google, Facebook et al were doing — nothing less than spawning a new variant of capitalism. Those essays promised a more comprehensive expansion of this Big Idea. And now it has arrived — the most ambitious attempt yet to paint the bigger picture and to explain how the effects of digitisation that we are now experiencing as individuals and citizens have come about,” Naughton writes.

“The headline story is that it’s not so much about the nature of digital technology as about a new mutant form of capitalism that has found a way to use tech for its purposes. The name Zuboff has given to the new variant is ‘surveillance capitalism.’ It works by providing free services that billions of people cheerfully use, enabling the providers of those services to monitor the behaviour of those users in astonishing detail — often without their explicit consent.”

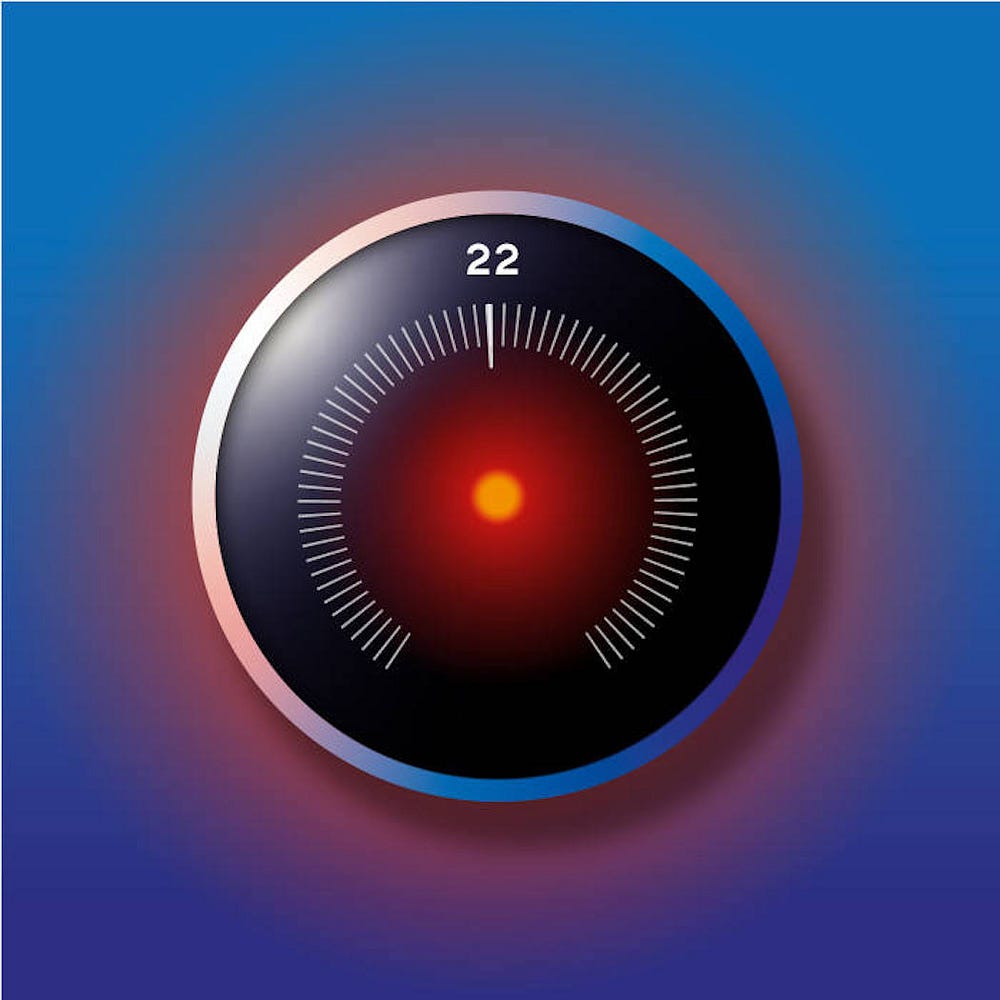

“‘Surveillance capitalism,’ she writes, ‘unilaterally claims human experience as free raw material for translation into behavioural data. Although some of these data are applied to service improvement, the rest are declared as a proprietary behavioural surplus, fed into advanced manufacturing processes known as machine intelligence, and fabricated into prediction products that anticipate what you will do now, soon, and later. Finally, these prediction products are traded in a new kind of marketplace that I call behavioural futures markets. Surveillance capitalists have grown immensely wealthy from these trading operations, for many companies are willing to lay bets on our future behaviour.’

While the general modus operandi of Google, Facebook et al has been known and understood (at least by some people) for a while, what has been missing — and what Zuboff provides — is the insight and scholarship to situate them in a wider context. She points out that while most of us think that we are dealing merely with algorithmic inscrutability, in fact what confronts us is the latest phase in capitalism’s long evolution — from the making of products, to mass production, to managerial capitalism, to services, to financial capitalism, and now to the exploitation of behavioural predictions covertly derived from the surveillance of users. In that sense, her vast (660-page) book is a continuation of a tradition that includes Adam Smith, Max Weber, Karl Polanyi and — dare I say it — Karl Marx,” Naughton notes.

Like Thomas Piketty’s Capital in the Twenty-First Century, Zuboff’s The Age of Surveillance Capitalism “opens one’s eyes to things we ought to have noticed, but hadn’t. And if we fail to tame the new capitalist mutant rampaging through our societies then we will only have ourselves to blame, for we can no longer plead ignorance.”

Naughton also asked Zuboff “10 key questions.” Here are three:

[There’s] the “inevitability” narrative — technological determinism on steroids.

[Shoshana Zuboff] “In my early fieldwork in the computerising offices and factories of the late 1970s and 80s, I discovered the duality of information technology: its capacity to automate but also to ‘informate,’ which I use to mean to translate things, processes, behaviours, and so forth into information. This duality set information technology apart from earlier generations of technology: information technology produces new knowledge territories by virtue of its informating capability, always turning the world into information. The result is that these new knowledge territories become the subject of political conflict. The first conflict is over the distribution of knowledge: ‘Who knows?’ The second is about authority: ‘Who decides who knows?’ The third is about power: ‘Who decides who decides who knows?’

Now the same dilemmas of knowledge, authority and power have surged over the walls of our offices, shops and factories to flood each one of us… and our societies. Surveillance capitalists were the first movers in this new world. They declared their right to know, to decide who knows, and to decide who decides. In this way they have come to dominate what I call ‘the division of learning in society,’ which is now the central organising principle of the 21st-century social order, just as the division of labour was the key organising principle of society in the industrial age.”

So the big story is not really the technology per se but the fact that it has spawned a new variant of capitalism that is enabled by the technology?

[Shoshana Zuboff] “Larry Page grasped that human experience could be Google’s virgin wood, that it could be extracted at no extra cost online and at very low cost out in the real world. For today’s owners of surveillance capital the experiential realities of bodies, thoughts and feelings are as virgin and blameless as nature’s once-plentiful meadows, rivers, oceans and forests before they fell to the market dynamic. We have no formal control over these processes because we are not essential to the new market action. Instead we are exiles from our own behaviour, denied access to or control over knowledge derived from its dispossession by others for others. Knowledge, authority and power rest with surveillance capital, for which we are merely ‘human natural resources.’ We are the native peoples now whose claims to self-determination have vanished from the maps of our own experience.

While it is impossible to imagine surveillance capitalism without the digital, it is easy to imagine the digital without surveillance capitalism. The point cannot be emphasised enough: surveillance capitalism is not technology. Digital technologies can take many forms and have many effects, depending upon the social and economic logics that bring them to life. Surveillance capitalism relies on algorithms and sensors, machine intelligence and platforms, but it is not the same as any of those.”

Doesn’t all this mean that regulation that just focuses on the technology is misguided and doomed to fail? What should we be doing to get a grip on this before it’s too late?

[Shoshana Zuboff] “The tech leaders desperately want us to believe that technology is the inevitable force here, and their hands are tied. But there is a rich history of digital applications before surveillance capitalism that really were empowering and consistent with democratic values. Technology is the puppet, but surveillance capitalism is the puppet master.

Surveillance capitalism is a human-made phenomenon and it is in the realm of politics that it must be confronted. The resources of our democratic institutions must be mobilised, including our elected officials. GDPR [a recent EU law on data protection and privacy for all individuals within the EU] is a good start, and time will tell if we can build on that sufficiently to help found and enforce a new paradigm of information capitalism. Our societies have tamed the dangerous excesses of raw capitalism before, and we must do it again.

While there is no simple five-year action plan, much as we yearn for that, there are some things we know. Despite existing economic, legal and collective-action models such as antitrust, privacy laws and trade unions, surveillance capitalism has had a relatively unimpeded two decades to root and flourish. We need new paradigms born of a close understanding of surveillance capitalism’s economic imperatives and foundational mechanisms.

For example, the idea of ‘data ownership’ is often championed as a solution. But what is the point of owning data that should not exist in the first place? All that does is further institutionalise and legitimate data capture. It’s like negotiating how many hours a day a seven-year-old should be allowed to work, rather than contesting the fundamental legitimacy of child labour. Data ownership also fails to reckon with the realities of behavioural surplus. Surveillance capitalists extract predictive value from the exclamation points in your post, not merely the content of what you write, or from how you walk and not merely where you walk. Users might get ‘ownership’ of the data that they give to surveillance capitalists in the first place, but they will not get ownership of the surplus or the predictions gleaned from it — not without new legal concepts built on an understanding of these operations.

Another example: there may be sound antitrust reasons to break up the largest tech firms, but this alone will not eliminate surveillance capitalism. Instead it will produce smaller surveillance capitalist firms and open the field for more surveillance capitalist competitors.

So what is to be done? In any confrontation with the unprecedented, the first work begins with naming. Speaking for myself, this is why I’ve devoted the past seven years to this work… to move forward the project of naming as the first necessary step toward taming. My hope is that careful naming will give us all a better understanding of the true nature of this rogue mutation of capitalism and contribute to a sea change in public opinion, most of all among the young.”

Shoshana Zuboff also wrote this weekend’s long read in the Financial Times, Facebook, Google and a dark age of surveillance capitalism.

“This is where we live now — a world in which nearly every product or service that begins with the word ‘smart’ or ‘personalised,’ every internet-enabled device or vehicle, every ‘digital assistant’ — each is a supply-chain interface for the unobstructed flow of behavioural data.

It has long been understood that capitalism evolves by claiming things that exist outside of the market dynamic and turning them into market commodities for sale and purchase. Surveillance capitalism extends this pattern by declaring private human experience as free raw material that can be computed and fashioned into behavioural predictions for production and exchange.

In this logic, surveillance capitalism poaches our behaviour for surplus and leaves behind all the meaning lodged in our bodies, our brains and our beating hearts. You are not ‘the product’ but rather the abandoned carcass. The ‘product’ derives from the surplus data ripped from your life.

In these new supply chains we may find signs of the people you share our life with, your tears, the clench of his jaw in anger, the secrets your children share with their dolls, our breakfast conversations and sleep habits, the decibel levels in my living room, the thinning treads on her running shoes, your hesitation as you survey the sweaters laid out in the shop and the exclamation marks that follow a Facebook post, once composed in innocence and hope. Nothing is exempt, from ‘smart’ vodka bottles to internet-enabled rectal thermometers, as products and services from every sector join the competition for surveillance revenues.”

And also this …

“Can we establish a better balance between Gemeinschaft and Gesellschaft?” Manfred F. R. Kets de Vries wonders in Have We Reached the Limit of Individualism?

“How can societies further economic and political development whilst also preserving the qualities that make for a liveable, cohesive community? If we wish to taper the narcissistic orientation of the younger generation, one priority would be to neutralise the faulty premises of the self-esteem movement. To instil genuine self-esteem in children, praise needs to be tied to observable behaviours and successes. There should be strong efforts to increase the amount of actual human (i.e. face-to-face) interaction that children have. Giving them the kinds of experiences needed to develop social skills such as empathy and compassion will encourage the next generation to be more civic-minded and more politically committed than is presently the case.

In organisational life, the challenge is how to make business a force for good. First, we should beware of narcissistic CEOs and senior managers. Under narcissistic leadership, subordinates often opt to tell higher-ups what they want to hear. They soon live in an echo chamber that promotes errant behaviour patterns and decisions, including fraudulent activities. Narcissistic leaders may profess company loyalty but, deep down, are only committed to their own agendas, with often dire consequences for the firm and its various stakeholders.

Second, we need to devise workplaces that are humane and not Darwinian environments where everyone is out for themselves. In such companies, people have a voice, as well as ample opportunities to learn and express their creative capabilities. These kinds of organisations value a coaching culture and see leadership as a team sport. Shareholder value is not their exclusive rallying cry. They adopt a long-term perspective and seek to be part of a sustainable world.”

“Don’t throw in the day job to follow your dream. Join the bifurcators who juggle work-for-pay and their work-for-love,” Thomas Maloney writes in The creed of compromise.

“Today, we have higher expectations of work, and more choices over which we can dither and fret. Tales of bold and triumphant career choices are a media staple. We also have a bigger toolbox in our search for meaning. Technology is a boon for bifurcators, in particular (no need to carry your typewriter on the bus), while the internet has also enabled a growing minority to escape bifurcation by translating their passion directly into a viable income. Circumstances still unfairly constrain opportunities for many but, for plenty of others, whingeing is unwarranted.”

“Advocates of dream-following, of commitment and career leaps of faith, often say: ‘You’ll regret it if you don’t.’ They might be right about that (actually, they almost certainly are). But here’s the rub: regret is not the sole preserve of the cautious compromiser. A failure to compromise can also beget future unhappiness. Some of your sacrifices might come back to haunt you. I wouldn’t dare to advise young idealists either to follow or not to follow their dreams. At the most, I might advise them to be wary of career preachers, whether radical or conservative, and that, while they shouldn’t fear commitment and leaps of faith, they also shouldn’t fear compromise.

The celebrated achievers are usually those who committed to their passion. But often they sacrificed a lot. There is another group of achievers, less celebrated but perhaps happier on average, whose central accomplishment is a balancing act — between work and family, money and meaning, pursuing their passion at the weekend and getting an early night on Sundays. I’m only a novice bifurcator but I know my role models. They’re not famous and never will be, but you probably know some too.”

When we think about technology’s moral implications, we tend to think about what we do with a given technology, L.M. Sacasas writes in Do Artifacts Have Ethics?

“We might call this the ‘guns don’t kill people, people kill people’ approach to the ethics of technology. What matters most about a technology on this view is the use to which it is put. This is, of course, a valid consideration. A hammer may indeed be used to either build a house or bash someone’s head in. On this view, technology is morally neutral and the only morally relevant question is this: What will I do with this tool?

But is this really the only morally relevant question one could ask?

For instance, pursuing the example of the hammer, might I not also ask how having the hammer in hand encourages me to perceive the world around me? Or, what feelings having a hammer in hand arouses?”

Sacasas subsequently gives us a list of “questions that we might ask in order to get at the wide-ranging ‘moral dimension’ of our technologies.”

- What sort of person will the use of this technology make of me?

- What habits will the use of this technology instill?

- How will the use of this technology affect my experience of time?

- How will the use of this technology affect my experience of place?

- How will the use of this technology affect how I relate to other people?

- How will the use of this technology affect how I relate to the world around me?

- What practices will the use of this technology cultivate?

- What practices will the use of this technology displace?

- What will the use of this technology encourage me to notice?

- What will the use of this technology encourage me to ignore?

- What was required of other human beings so that I might be able to use this technology?

- What was required of other creatures so that I might be able to use this technology?

- What was required of the earth so that I might be able to use this technology?

- Does the use of this technology bring me joy?

- Does the use of this technology arouse anxiety?

- How does this technology empower me? At whose expense?

- What feelings does the use of this technology generate in me toward others?

- Can I imagine living without this technology? Why, or why not?

- How does this technology encourage me to allocate my time?

- Could the resources used to acquire and use this technology be better deployed?

- Does this technology automate or outsource labor or responsibilities that are morally essential?

- What desires does the use of this technology generate?

- What desires does the use of this technology dissipate?

- What possibilities for action does this technology present? Is it good that these actions are now possible?

- What possibilities for action does this technology foreclose? Is it good that these actions are no longer possible?

- How does the use of this technology shape my vision of a good life?

- What limits does the use of this technology impose upon me?

- What limits does my use of this technology impose upon others?

- What does my use of this technology require of others who would (or must) interact with me?

- What assumptions about the world does the use of this technology tacitly encourage?

- What knowledge has the use of this technology disclosed to me about myself?

- What knowledge has the use of this technology disclosed to me about others? Is it good to have this knowledge?

- What are the potential harms to myself, others, or the world that might result from my use of this technology?

- Upon what systems, technical or human, does my use of this technology depend? Are these systems just?

- Does my use of this technology encourage me to view others as a means to an end?

- Does using this technology require me to think more or less?

- What would the world be like if everyone used this technology exactly as I use it?

- What risks will my use of this technology entail for others? Have they consented?

- Can the consequences of my use of this technology be undone? Can I live with those consequences?

- Does my use of this technology make it easier to live as if I had no responsibilities toward my neighbor?

- Can I be held responsible for the actions which this technology empowers? Would I feel better if I couldn’t?

Andrew Hill, the Financial Times’s management editor, has written a book about John Ruskin, Ruskinland: How John Ruskin Shapes Our World. In an article in the Financial Times, Hill explores why the radical social thinker’s ideas on work are more relevant than ever today.

Although Ruskin is best known as “the supreme tastemaker in early Victorian art and architecture,” his “later role as social critic and reformer has arguably been more revolutionary and enduring,” Hill writes. “Traces of his once-radical ideas can be detected in the foundations of the British welfare state, free public education and state pensions, not to mention in the modern environmental movement and, of course, in our museums and galleries.

Weaver of a pre-internet world wide web, Ruskin had a polymath’s appreciation of the fragile interconnectedness of disciplines such as science, history and art that was at least a century ahead of its time. He can still teach us how to see more clearly and understand the world around us more fully.

It is, though, his ideas about how to rethink work to make it more meaningful — ideas born from the maelstrom of 19th-century industrialisation — that offer the most interesting lessons for modern leaders, organisations and economies grappling with the latest wave of technological change.”

“Just as the machine age divided 19th-century intellectuals, the latest robot-led industrial revolution has provoked a debate about the human contribution to work. A recent essay published by the Silicon Valley-based Deloitte Center for the Edge, part of the professional services firm, invited readers to think again about the question ‘what is work?’ It urged managers to help workers make the most of their ‘human capabilities’: notably, curiosity, imagination, intuition, creativity and empathy.

Ruskin, too, was convinced work was worthless, even dangerous, unless workers had sufficient education to use these capabilities, and room in which to exercise them. ‘In order that people may be happy at work,’ Ruskin wrote in 1851, ‘these three things are needed: They must be fit for it; They must not do too much of it: and they must have a sense of success in it.’ If that sounds modern, it is no surprise: these are the keys to employees’ self-motivation that management writer Daniel Pink laid out in his 2009 book Drive, calling them mastery, autonomy and purpose,” Hill writes.

VAC Library by Farming Architects is a large wooden climbing frame that uses solar-powered aquaponics to keep vegetables, koi carp and chickens in Hanoi, Vietnam. The architects designed the library and city farm hybrid as a way for children to learn about self-sustaining ecosystems.

“Children will learn that Koi carp are not only pets to watch, but also how their waste can benefit the vegetable planters,” said Farming Architects.

Via Dezeen

“It turns out that if you want to go somewhere new, the map is no use.

We need to get lost.

Together.”

— Steve Marshall, We Change Together

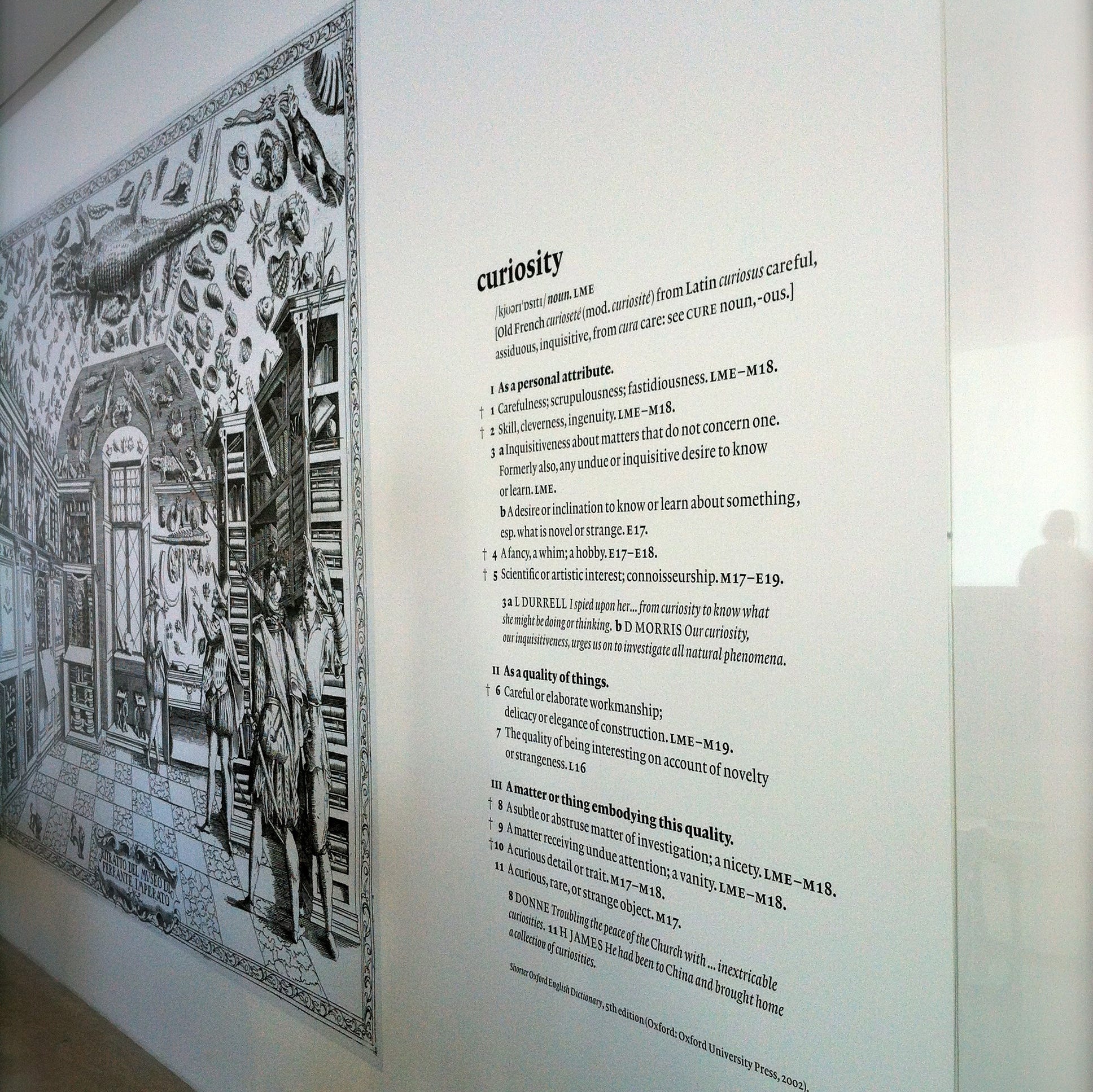

Curiosity and seeking out new ‘first loves’

First-time experiences, such as our first love or the first time we come face to face with the Mona Lisa, are defining moments in our lives. They kindle our curiosity, can give us a sense of purpose and may even lead us in entirely new directions.

But how often do we seek first-time experiences in our work? How often do we allow ourselves, and others, to be curious: to seek new knowledge, try new things, shape new relationships?

Join Eitan Reich and me, and share your thoughts and experiences in a candid conversation about the role of curiosity in management and organisations.

— Wednesday, 30 January 2019 (via Zoom)

— From 20:00 to 21:00 CET

— Only 8 seats available

Save your seat by sending an email to conversations@newaysof.com (subject: ‘I want to join the conversation’). We will send you a Zoom link shortly before the conversation.