Reading notes (2021, week 1-2) — On the joys of being an absolute beginner, learning without thinking, and how self-interest influences our predictions

Reading notes is a weekly curation of my tweets. It is, as Michel de Montaigne so beautifully wrote, “a posy of other men’s flowers and nothing but the thread that binds them is mine own.”

In the first edition of 2021: The phrase ‘adult beginner’ can sound patronising, but learning is not just for the young; as machine learning continues to develop, the intuition that thinking necessarily precedes learning should wane; how to forfeit unrealistic optimism about the future; talking out loud to yourself; how to channel boredom; the massive effect culture has on how we view ourselves and how we are perceived by others; unlocking the key to a mysterious 17th-century painting; and, finally, Lao Tzu and finding the answers at the center of your being.

The joys of being an absolute beginner

“For most of us, the beginner stage is something to be got through as quickly as possible,” Tom Vanderbilt writes in The joys of being an absolute beginner — for life, an edited extract from Beginners: The Joy and Transformative Power of Lifelong Learning (Alfred A. Knopf, 2021).

“Think of a time when you first visited a new, distant place, one with which you were barely familiar. Upon arrival, you were alive to every novelty. The smell of the food in the street! The curious traffic signs! The sound of the call to prayer! Flushed from the comfort of your usual surrounds, forced to learn new rituals and ways to communicate, you gained sensory superpowers. You paid attention to everything because you didn’t even know what you needed to know to get by. After a few days, as you became more expert in the place, what seemed strange began to become familiar. You began noticing less. You became safer in your knowledge. Your behaviour became more automatic.

Even as your skills and knowledge progress, there is a potential value to holding on to that beginner’s mind. In what’s come to be known as the Dunning-Kruger effect, the psychologists David Dunning and Justin Kruger showed that on various cognitive tests the people who did the worst were also the ones who most ‘grossly overestimated’ their actual performance. They were ‘unskilled and unaware of it.’

This can certainly be a stumbling block for beginners. But additional research later showed that the only thing worse than hardly knowing anything was knowing a little bit more. This pattern appears in the real world: doctors learning a spinal surgery technique committed the most errors not on the first or second try, but on the 15th; pilot errors, meanwhile, seem to peak not in the earliest stages but after about 800 hours of flight time.

I’m not suggesting experts have much to fear from beginners. Experts, who tend to be ‘skilled, and aware of it,’ are much more efficient in their problem-solving processes, more efficient in their movement (the best chess players, for example, tend also to be the best speed-chess players). They can draw upon more experience, and more finely honed reflexes. Beginner chess players will waste time considering a huge range of possible moves, while grandmasters zero in on the most relevant options (even if they then spend a lot of time calculating which of those moves are best).

And yet, sometimes, the ‘habits of the expert,’ as the Zen master Suzuki called it, can be an obstacle — particularly when new solutions are demanded. With all their experience, experts can come to see what they expect to see. Chess experts can become so entranced by a move they remember from a previous game that they miss a more optimal move on a different part of the board. This tendency for people to default to the familiar, even in the face of a more optimal novel solution, has been termed the Einstellung effect,” Vanderbilt writes.

This effect is illustrated in the well-known candle problem. People are asked to attach a candle to the wall using nothing more than a box of matches and a box of tacks. Most people struggle because they get hung up on the functional fixedness of the box as a container for tacks, not as a theoretical shelf for the candle. Not five-year-olds, though.

According to researchers, “younger children have a more fluid ‘conception of function’ than older children or adults. They are less hung up on things being for something, and more able to view them simply as things to be used in all sorts of ways. […] Children, in a very real sense, have beginners’ minds, open to wider possibilities. They see the world with fresher eyes, are less burdened with preconception and past experience, and are less guided by what they know to be true.

They are more likely to pick up details that adults might discard as irrelevant. Because they’re less concerned with being wrong or looking foolish, children often ask questions that adults won’t ask.”

But why bother learning a bunch of things that aren’t relevant to your career, Vanderbilt wonders. Why dabble in mere hobbies when all of us are scrambling to keep up with the demands of a rapidly changing workplace?

“First,” he suggests, “it’s not at all clear that learning something like singing or drawing actually won’t help you in your job — even if it’s not immediately obvious how. Learning has been proposed as an effective response to stress in one’s job. By enlarging one’s sense of self, and perhaps equipping us with new capabilities, learning becomes a ‘stress buffer.’

Claude Shannon, the brilliant MIT polymath who helped invent the digital world in which we live today, plunged into all kinds of pursuits, from juggling to poetry to designing the first wearable computer. ‘Time and time again,’ noted his biographer, ‘he pursued projects that might have caused others embarrassment, engaged questions that seemed trivial or minor, then managed to wring breakthroughs out of them.’

Regularly stepping out of our comfort zones, at this historical moment, just feels like life practice. The fast pace of technological change turns us all, in a sense, into ‘perpetual novices,’ always on the upward slope of learning, our knowledge constantly requiring upgrades, like our phones. Few of us can channel our undivided attention into a lifelong craft. Even if we keep the same job, the required skills change. The more willing we are to be brave beginners, the better. As Ravi Kumar, president of the IT giant Infosys, described it: ‘You have to learn to learn, learn to unlearn, and learn to re-learn.’

Second, it’s just good for you. I don’t mean only the things themselves — the singing or the drawing or the surfing — are good for you (although they are, in ways I’ll return to). I mean that skill learning itself is good for you.

It scarcely matters what it is — tying nautical knots or throwing pottery. Learning something new and challenging, particularly with a group, has proven benefits for the ‘novelty-seeking machine’ that is the brain. Because novelty itself seems to trigger learning, learning various new things at once might be even better. A study that had adults aged 58 to 86 simultaneously take multiple classes — ranging from Spanish to music composition to painting — found that after just a few months, the learners had improved not only at Spanish or painting, but on a battery of cognitive tests. They’d rolled back the odometers in their brains by some 30 years, doing better on the tests than a control group who took no classes. They’d changed in other ways, too: they felt more confident, they were pleasantly surprised by their work, and they kept getting together after the study ended.”

“Skill learning seems to be additive; it’s not only about the skill. A study that looked at young children who had taken swimming lessons found benefits beyond swimming. The swimmers were better at a number of other physical tests, such as grasping or hand-eye coordination, than non-swimmers. They also did better on reading and mathematical reasoning tests than non-swimmers, even accounting for factors such as socio-economic status.

[…]

Learning new skills also changes the way you think, or the way you see the world. Learning to sing changes the way you listen to music, while learning to draw is a striking tutorial on the human visual system. Learning to weld is a crash course in physics and metallurgy. You learn to surf and suddenly you find yourself interested in tide tables and storm systems and the hydrodynamics of waves. Your world got bigger because you did.

Last, if humans seem to crave novelty, and novelty helps us learn, one thing that learning does is equip us with how to better handle future novelty. ‘More than any other animal, we human beings depend on our ability to learn,’ the psychologist Alison Gopnik has observed [in her book The Gardener and the Carpenter]. ‘Our large brain and powerful learning abilities evolved, most of all, to deal with change.’

We are always flipping between small moments of incompetence and mastery. Sometimes, we cautiously try to work out how we’re going to do something new.

Sometimes, we read a book or look for an instructional video. Sometimes, we just have to plunge in.”

Learning without thinking

‘Mindless learning.’ The phrase looks incoherent, writes Jacob Browning, a philosopher and Berggruen Fellow, in Learning without thinking. After all, how could there be learning without a learner?

“As it is commonly understood, thinking is a matter of consciously trying to connect the dots between ideas. It’s only a short step for us to assume that thinking must precede learning, that we need to consciously think something through in order to solve a problem, understand a topic, acquire a new skill or design a new tool.” This assumption, which was shared by early AI researchers, suggests that learning depends on reasoning: on our capacity to detect the necessary connections (mathematical, logical and causal) between things.

“Our capacity to reason so impressed Enlightenment philosophers that they took this as the distinctive character of thought — and one exclusive to humans. The Enlightenment approach often simply identified the human by its impressive reasoning capacities — a person understood as synonymous with their mind.

This led to the Enlightenment view that took the mind as the motor of history: Where other species toil blindly, humans decide their own destiny. Each human being strives to learn more than their parents and, over time, the overall species is perfected through the accumulation of knowledge. This picture of ourselves held that our minds made us substantively different and better than mere nature — that our thinking explains all learning, and thus our brilliant minds explain ‘progress.’”

But less anthropocentric thinkers like David Hartley, David Hume and James Mill were skeptical about this idea. Instead, “they argued that human learning is better understood as similar to the stimulus-responsive learning seen in animals, which hinges on creating associations between arbitrary actions that become lifelong patterns of behavior.

[…]

This approach — later popularized by the behaviorists — held that rewarded associations can account not just for trained behavior but for any aspect of animal behavior, even seemingly ‘thoughtful’ behavior. My cat seems to understand how can openers work and that they contain cat food, but there isn’t necessarily any reasoning involved. Anything that sounds like the can opener, or even looks like a can, will result in the same behavior: running into the kitchen, meowing expectantly, scratching at the empty food bowl. And what works for animals works in humans as well. Humans can accomplish many tasks through repetition without understanding what they are doing, as when children learn to multiply by memorizing multiplication tables through tedious practice and recitation.

Many philosophers and scientists have argued that associative learning need not be limited to explaining how an individual animal learns. They contend that arbitrary events in diverse and non-cooperative agents could still lead to problem-solving behavior — a spontaneous organization of things without any organizer.”

In Adam Smith’s invisible hand of the market, for example, ‘progress’ results “not from any grand plan but from countless, undirected interactions over time that adaptively shape groups towards some stable equilibrium amongst themselves and their environment.” And according to Charles Darwin, “the development of species emerges from chance events over time snowballing into increasingly complex capacities and organs.” It doesn’t involve progress or the appearance of ‘better’ species.

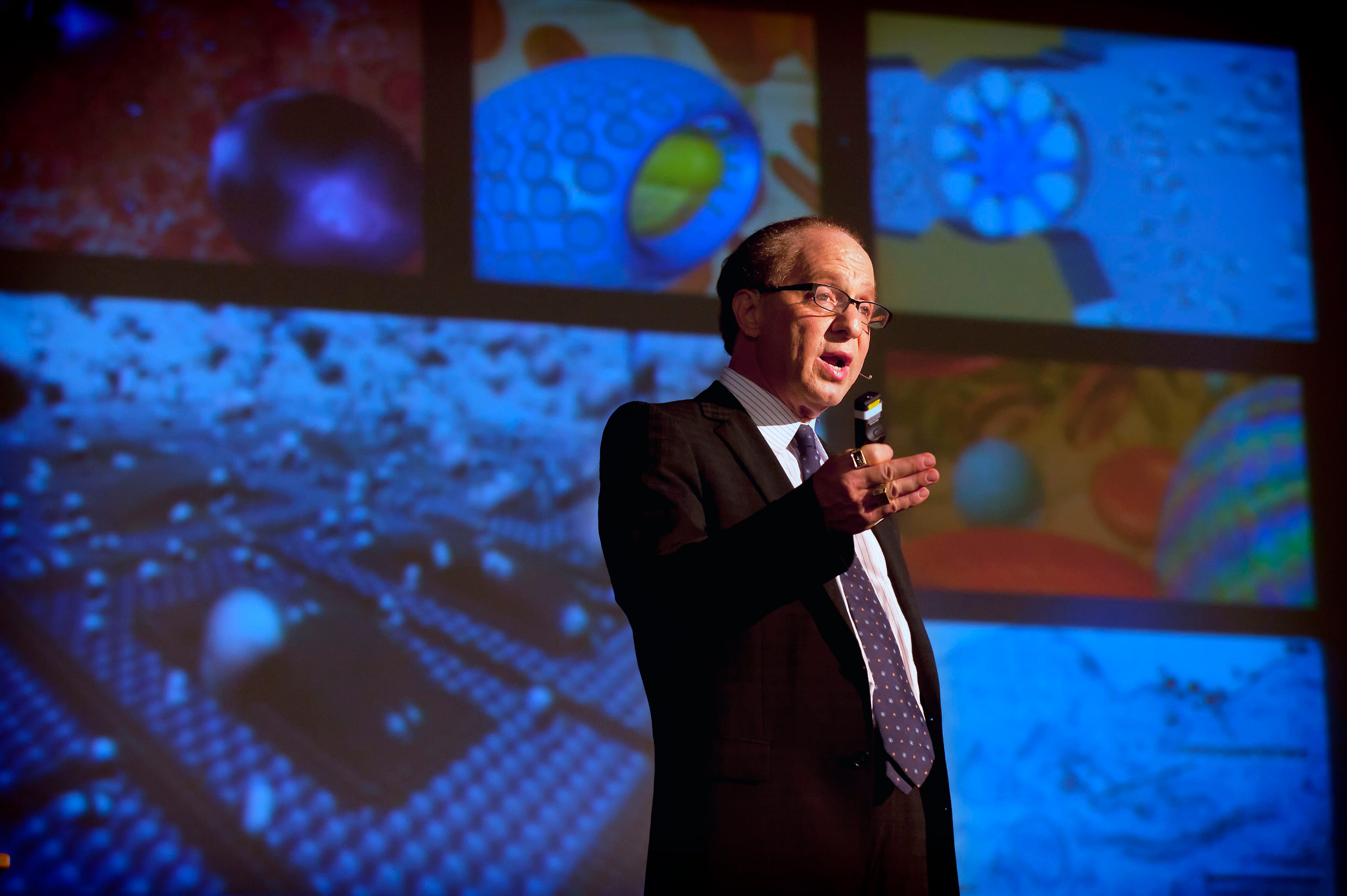

Looking at the mathematical sophistication and sheer computing power of contemporary AI systems, it is easy to believe that the process involves a kind of technological wizardry only possible through human ingenuity. But it is possible for us to think about machine learning otherwise.

“As with evolution, there are far more trials ending in failure than in success. However, these failures are a feature, not a bug. Repeated failure is essential for mindless learning to test out many possible solutions — as in the 30 million trials leading up to AlphaGo’s famous move 37 in its game against the second-best Go player in the world, Lee Sedol. Going through so many trials meant AlphaGo played many moves no human had ever made, principally because these moves usually result in losing. Move 37 was just such a long shot, and it was so counterintuitive that it made Lee visibly uncomfortable,” Browning writes.

“As machine learning continues to develop, the intuition that thinking necessarily precedes learning — much less that humans alone learn — should wane. We will eventually read surprising headlines, such as the recent finding that a machine is a champion poker player or that slime molds learn, without immediately asking: ‘Does it have a mental life? Is it conscious?’ Mindless learning proves that many of our ideas about what a mind is can be broken into distinct mindless capacities. It pushes us to ask more insightful questions: If learning doesn’t need a mind, why are there minds? Why is any creature conscious? How did consciousness evolve in the first place? These questions help clarify both how minded learning works in humans, and why it would be parochial to treat this as the only possible kind of learning AI should aspire to.

We should be clear that much human learning has itself been, and still is, mindless. The history of human tools and technologies — from the prehistoric hammer to the current search for effective medicines — reveals that conscious deliberation plays a much less prominent role than trial and error. And there are plenty of gradations between the mindless learning at work in bacteria and the minded learning seen in a college classroom. It would be needlessly reductive to claim, as some have, that human learning is the only ‘real learning’ or ‘genuine cognition,’ with all other kinds — like association, evolution and machine learning — as mere imitations.

Rather than singling out the human, we need to identify those traits essential for learning to solve problems without exhaustive trial and error. The task is figuring out how minded learning plays an essential role in minimizing failures. Simulations sidestep fatal trials; discovering necessary connections rules out pointless efforts; communicating discards erroneous solutions; and teaching passes on success. Identifying these features helps us come up with machines capable of similar skills, such as those with ‘internal models’ able to simulate trials and grasp necessary connections, or systems capable of being taught by others.

But the second insight is broader. While there are good reasons to make machines that engage in human-like learning, artificial intelligence need not — and should not — be confined to simply imitating human intelligence. Evolution is fundamentally limited because it can only build on solutions it has already found, permitting limited changes in DNA from one individual to the next before a variant is unviable. The result is (somewhat) clear paths in evolutionary space from dinosaurs to birds, but no plausible path from dinosaurs to cephalopods. Too many design choices, and their corresponding trade-offs, are already built in.

If we imagine a map of all possible kinds of learning, the living beings that have popped up on Earth take up only a small territory, and came into being along connected (if erratic) lines. In this scenario, humans occupy only a tiny dot at the end of one of a multitude of strands. Our peculiar mental capacities could only arise in a line from the brains, physical bodies and sense-modalities of primates. Constraining machines to retrace our steps — or the steps of any other organism — would squander AI’s true potential: leaping to strange new regions and exploiting dimensions of intelligence unavailable to other beings. There are even efforts to pull human engineering out of the loop, allowing machines to evolve their own kinds of learning altogether.

The upshot is that mindless learning makes room for learning without a learner, for rational behavior without any ‘reasoner’ directing things. This helps us better understand what is distinctive about the human mind, at the same time that it underscores why [our mind] isn’t the key to understanding the natural universe, as the rationalists believed. The existence of learning without consciousness permits us to cast off the anthropomorphizing of problem-solving and, with it, our assumptions about intelligence.”

How self-interest influences our predictions

Psychology research suggests that the more desirable a future event is, the more likely people think it is. Conversely, the more someone dreads or fears a potential outcome, the less likely they think it is to happen, Caroline Beaton writes in Humans Are Bad at Predicting Futures That Don’t Benefit Them.

Unrealistic optimism, or thinking that good things are more likely to happen to you than to other people whereas bad things are less likely, was discovered by accident in the late 1970s by the psychologist Neil Weinstein. It isn’t outright denial of risk, he says. “People don’t say, ‘It can’t happen to me.’ It’s more like, ‘It could happen to me, but it’s not as likely for me as for other people around me.’”

According to Beaton, “[anxiety] affects people’s predictions subliminally. For example, they may unwittingly only gather and synthesize facts about their prediction that support the outcome they want. This process may even be biologically ingrained: Neuroscience research suggests that facts supporting a desired conclusion are more readily available in people’s memories than other equally relevant but less appealing information. Our predictions are often less imaginative than we think.

They’re also more self-absorbed. Unrealistic optimism occurs in part because people fail to consider others’ experiences, especially when they think a future outcome is controllable.”

Weinstein tried to curb this bias, though with limited success. Other research indicates that people resist revising their estimations of personal risk even when confronted with relevant averages that explicitly contradict their initial predictions. But Weinstein insists it’s not all bad. Thinking things are going to turn out well may actually be adaptive, a way to soothe our fears about the future. “It keeps you from falling apart.”

“Research suggests that far-off events, like death, are particularly vulnerable to overly optimistic predictions. Moreover, predictions appear to be most influenced by whether ‘the event in question is of vital personal importance to the predictor.’ The author, speaker, and global-trends expert Mark Stevenson says that people who predict the future are victim to their own prejudices, wish lists, and life experiences, which are often reflected in their predictions. When I asked Stevenson for an example, he told me to consider at what point in time any futurist approaching 50 predicts life extension will be normal — ‘quite soon!’”

“People aren’t so naïve as to think that just because something is important to them, it will happen. Rather, they tend to think most other people share their beliefs, and thus the future they endorse is likely. What researchers call projection bias explains why individuals so often bungle election predictions. Because they assume that others have political opinions similar to their own, people think their chosen candidate is more popular than she or he actually is. Liberals’ underestimates of the true scale of Trump support during the 2016 election may have at least partially resulted from this bias.”

Although sometimes self-interested predictions pan out — for example, the end of the Cold War and apartheid in South Africa — “on the whole people would make better predictions with more objectivity and awareness.” And how we predict the future is important because it affects what we do in the present. So how do you forfeit your fantasies?

“Faith Popcorn, the CEO of ‘a future-focused strategic consultancy,’ advises shaking up your perspective: ‘Learn how the other side thinks,’ she says. Chat up interesting people; go to readings, talks, and fairs to ‘expand your horizons.’

[University of Pennsylvania’s Philip Tetlock, who studies the art and science of forecasting,] says forecasting tournaments can teach people how to outsmart their shortcomings and instead play ‘a pure accuracy game.’ […] He says that many people ‘have a little voice in the back of their heads saying, Watch out! You might be distorting things a bit here, and forecasting tournaments encourage people to get in touch with those little inner voices.’

But Neil Weinstein has been around long enough to know that extinguishing unrealistic optimism isn’t so simple. ‘It’s hard because it has all these different roots,’ he says. And human nature is so obstinate.

In one study, Weinstein and his collaborators asked people to estimate the probability that their Texas town would be hit by a tornado. Everyone thought that their own town was less at risk than other towns. Even when a town was actually hit, its inhabitants continued to believe that their town was less likely to get hit than average. But then, by chance, one town got hit twice during the study. Finally, these particular townspeople realized that their odds were the same as all the other towns. They woke up, says Weinstein. ‘So you might say it takes two tornadoes.’”

And also this…

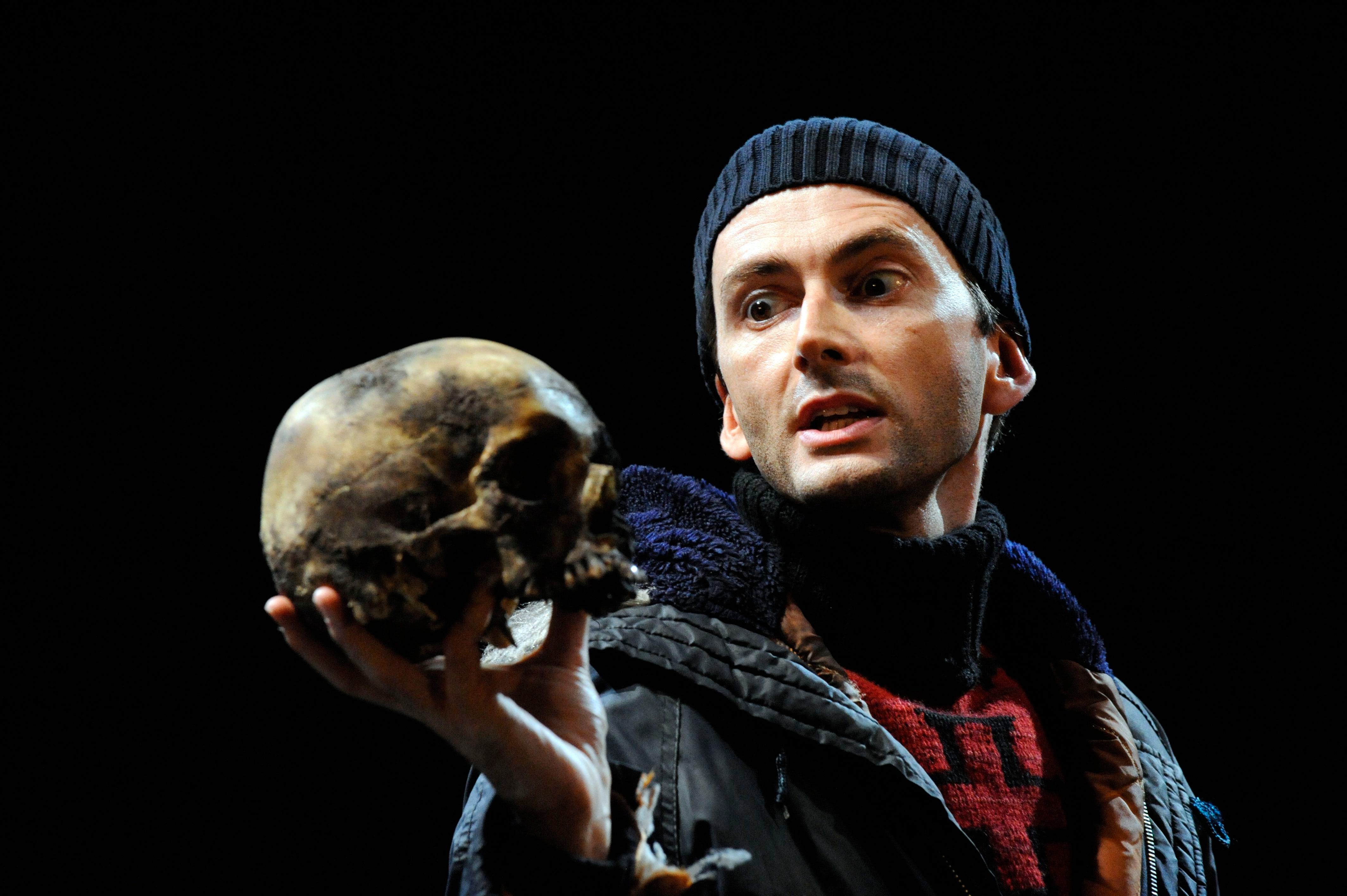

“Self-talk is deemed legitimate only when done in private, by children, by people with intellectual disabilities, or in Shakespearean soliloquies,” Nana Ariel writes in Talking out loud to yourself is a technology for thinking.

“Yet self-talk enjoys certain advantages over inner speech, even in adults. First, silent inner speech often appears in a ‘condensed’ and partial form; as [the psychologist Charles Fernyhough] has shown, we often tend to speak to ourselves silently using single words and condensed sentences. Speaking out loud, by contrast, allows the retrieval of our thoughts in full, using rhythm and intonation that emphasise their pragmatic and argumentative meaning, and encourages the creation of developed, complex ideas.

Not only does speech retrieve pre-existing ideas, it also creates new information in the retrieval process, just as in the process of writing. Speaking out loud is inventive and creative — each uttered word and sentence doesn’t just bring forth an existing thought, but also triggers new mental and linguistic connections. In both cases — speech and writing — the materiality of language undergoes a transformation (to audible sounds or written signs) which in turn produces a mental shift. This transformation isn’t just about the translation of thoughts into another set of signs — rather, it adds new information to the mental process, and generates new mental cascades. That’s why the best solution for creative blocks isn’t to try to think in front of an empty page and simply wait for thoughts to arrive, but actually to continue to speak and write (anything), trusting this generative process.

Speaking out loud to yourself also increases the dialogical quality of our own speech. Although we have no visible addressee, speaking to ourselves encourages us to actively construct an image of an addressee and activate one’s ‘theory of mind’ — the ability to understand other people’s mental states, and to speak and act according to their imagined expectations. Mute inner speech can appear as an inner dialogue as well, but its truncated form encourages us to create a ‘secret’ abbreviated language and deploy mental shortcuts. By forcing us to articulate ourselves more fully, self-talk summons up the image of an imagined listener or interrogator more vividly. In this way, it allows us to question ourselves more critically by adopting an external perspective on our ideas, and so to consider shortcomings in our arguments — all while using our own speech.”

“You might have noticed, too, that self-talk is often intuitively performed while the person is moving or walking around. If you’ve ever paced back and forth in your room while trying to talk something out, you’ve used this technique intuitively. It’s no coincidence that we walk when we need to think: evidence shows that movement enhances thinking and learning, and both are activated in the same centre of motor control in the brain. In the influential subfield of cognitive science concerned with ‘embodied’ cognition, one prominent claim is that actions themselves are constitutive of cognitive processes. That is, activities such as playing a musical instrument, writing, speaking or dancing don’t start in the brain and then emanate out to the body as actions; rather, they entail the mind and body working in concert as a creative, integrated whole, unfolding and influencing each other in turn. It’s therefore a significant problem that many of us are trapped in work and study environments that don’t allow us to activate these intuitive cognitive muscles, and indeed often even encourage us to avoid them.

Technological developments that make speaking seemingly redundant are also an obstacle to embracing our full cognitive potential. Recently, the technology entrepreneur Elon Musk declared that we are marching towards a near future without language, in which we’ll be able to communicate directly mind-to-mind through neural links. ‘Our brain spends a lot of effort compressing a complex concept into words,’ he said in a recent interview, ‘and there’s a lot of loss of information that occurs when compressing a complex concept into words.’ However, what Musk chalks up as ‘effort’, friction and information loss also involves cognitive gain. Speech is not merely a conduit for the transmission of ideas, a replaceable medium for direct communication, but a generative activity that enhances thinking. Neural links might ease intersubjective communication, but they won’t replace the technology of thinking-while-speaking. Just as Kleist realised more than 200 years ago, there are no pre-existing ideas, but rather the heuristic process by which speech and thought co-construct each other.

So, the next time you see someone strolling and speaking to herself in your street, wait before judging her — she might just be in the middle of intensive work. She might be wishing she could say: ‘I’m sorry, I can’t chat right now, I’m busy talking to myself.’ And maybe, just maybe, you might find yourself doing the same one day.”

In How to channel boredom, James Danckert and John Eastwood examine how to use that discomfort to switch up a gear and regain control over your life and your interests.

“When you experience the discomfort of boredom, it is alerting you to the fact that […] you’ve become superfluous and pointless; you need to reclaim authorship of your life. You’re having what psychologists call a crisis of agency. You’ve become passive and are currently letting life happen to you: you’re not forming goals or following through on them. You’re not engaged with the world on your terms, pursuing goals that matter to you, that allow you to deploy your skills and talents in a purposeful way. It’s actually a good thing that boredom feels so uncomfortable because without it, you might fail to notice your plight.

The change that boredom demands is not simply about doing something different […]; rather, what’s required is a change in the way that you connect with the world. Boredom signals a need to look for activities that flow from and give expression to your curiosity, creativity and passion. In short, you need to re-establish your agency. […] Of course, some strategies might be judged more desirable than others. Ultimately, how you resolve boredom is up to you.

Boredom is not a place to linger. You’re the better for having passed through boredom because, in doing so, you stop doing whatever it was that denied you a sense of agency and, instead, start doing things that promote your agency — a critical transition we all need to make in small and large ways each and every day. It’s when you get stuck and struggle to move on that boredom can become a prison, the precise opposite of a passageway to something new. This happens to some of us more often and more intensely than others.

‘Boredom proneness’ is characterised by difficulties with self-regulation and is akin to a personality trait. For those inclined to it, the story of boredom proneness is not a good one — it’s associated with increased rates of depression and anxiety, problems with drug and alcohol use, higher rates of problem gambling, and even problematic relations with smartphones. It’s as though the highly boredom-prone turn to these things — alcohol, gambling, the rabbit hole of social media — as a pacifier for boredom. Ultimately, when the pacifier is no longer there, boredom remains, relentlessly pushing them to embrace their agency. But this is precisely where the boredom-prone struggle to take their life in hand. […]

The internet abounds with lists of activities for when boredom strikes (one has 150 options!). These lists at best miss the point, and at worst hinder the very self-determination boredom demands we seek. Don’t let anyone tell you what to do. There are no specific activities — baking sourdough, learning a new language — that will always work (for all people) to eliminate boredom and, in fact, that’s the point. The message of boredom is that you need to reclaim your agency. It has to be up to you. While we won’t be telling you specific activities to try out, there are various steps you can follow to help you reclaim your agency in the midst of boredom.”

One of the productive ways to respond to the signal that boredom sends is to change your perspective, the authors say.

“When you’re mired in boredom, it can feel as though there’s no way out. Sometimes a change of perspective can help. For instance, try imagining yourself as a fly on the wall and explore your boredom from the outside, rather than ruminating on it from the inside, and then you can often find the freedom to move. A sense of efficacy and autonomy are key hallmarks of agency. Technically referred to as ‘decentring,’ this fly-on-the-wall technique is known to upregulate the part of the nervous system responsible for activating behaviour — another way to get you moving.

Sometimes it’s even possible to turn a boring situation into an engaging one. For instance, if you’re stuck doing highly repetitive work, you could try to beat your personal best time for the task to help make the time pass more quickly. Or if you’re completing a school project on what you consider a dull topic, you could imagine yourself as a detective working a case. Sure, in each case you find the work monotonous, but reframing it might make it a little less boring and put you back in the driver’s seat.

Simply making the choice to focus on something gives it value, and that’s where agency comes in once again. The artist Andy Warhol supposedly once claimed that you have to let the little things that would ordinarily bore you suddenly thrill you. Channel your curiosity towards something and, all of a sudden, the tiny details become fascinating because you choose to pay close attention to things you previously dismissed. As we’ve said, it’s up to you. You might imagine stamp collecting to be quite boring, but those who passionately pursue philately as a hobby would strenuously disagree. Boredom can’t get a foothold when you commit yourself to a pursuit. When you choose to be curious, you can’t be bored.”

“The academic discipline of psychology was developed largely in North America and Europe. Some would argue it’s been remarkably successful in understanding what drives human behaviour and mental processes, which have long been thought to be universal. But in recent decades some researchers have started questioning this approach, arguing that many psychological phenomena are shaped by the culture we live in,” Nicolas Geeraert writes in How knowledge about different cultures is shaking the foundations of psychology.

“If you were asked to describe yourself, what would you say? Would you describe yourself in terms of personal characteristics — being intelligent or funny — or would you use preferences, such as ‘I love pizza’? Or perhaps you would instead base it on social relationships, such as ‘I am a parent’? Social psychologists have long maintained that people are much more likely to describe themselves and others in terms of stable personal characteristics.

However, the way people describe themselves seems to be culturally bound. Individuals in the western world are indeed more likely to view themselves as free, autonomous and unique individuals, possessing a set of fixed characteristics. But in many other parts of the world, people describe themselves primarily as a part of different social relationships and strongly connected with others. This is more prevalent in Asia, Africa and Latin America. These differences are pervasive, and have been linked to differences in social relationships, motivation and upbringing.

This difference in self-construal has even been demonstrated at the brain level. In a brain-scanning study (fMRI), Chinese and American participants were shown different adjectives and were asked how well these traits represented themselves. They were also asked to think about how well they represented their mother (the mothers were not in the study), while being scanned.

In American participants, there was a clear difference in brain responses between thinking about the self and the mother in the ‘medial prefrontal cortex,’ which is a region of the brain typically associated with self presentations. However, in Chinese participants there was little or no difference between self and mother, suggesting that the self-presentation shared a large overlap with the presentation of the close relative.

[…]

With more research, we may well find that cultural differences pervade into even more areas where human behaviour was previously thought of as universal. But only by knowing about these effects will we ever be able to identify the core foundations of the human mind that we all share.”

“A dizzying retinal riddle of a painting, Las Meninas plays tug of war with our mind. On the one hand, the canvas’s perspective lines converge to a vanishing point within the open doorway, pulling our gaze through the work. On the other hand, the rebounding glare of the mirror bounces our attention back out of the painting to ponder the plausible position of royal spectres whose vague visages haunt the work. We are constantly dragged into and out of the painting as the here-and-now of the shadowy chamber depicted by Velázquez becomes a strangely elastic dimension that is both transient and eternal — a realm at once palpably real and mistily imaginary,” Kelly Grovier writes in Velázquez’s Las Meninas: A detail that decodes a masterpiece.

“In her brilliant biography, The Vanishing Man: In Pursuit of Velázquez, the writer and art critic Laura Cumming reflects on Las Meninas’s remarkable ability to present ‘such a precise vision of reality’ while at the same time remaining ‘so open a mystery.’ ‘The knowledge,’ she writes, ‘that all this is achieved by brushstrokes, that these are only painted figments, does not weaken the illusion so much as deepen the enchantment. The whole surface of Las Meninas feels alive to our presence.’

Cumming’s assessment of the painting’s uncanny power, with its carefully chosen language of ‘mystery,’ ‘illusion,’ and ‘enchantment,’ captures perfectly the almost psychotropic effect Velázquez’s imagery has on us — the trance-like state into which the painting has lured generation after generation. Cumming could almost be describing a hallucination or a mystical vision rather than a painting.”

Maybe she is, because easily overlooked is a small earthenware vase, “which is being offered to the young Infanta (and us) by a supplicating attendant on a silver platter.” Contemporaries would have recognised it “as embodying both mind-and-body-altering properties.”

“To call the complexion of that simple ceramic ‘otherworldly’ is more than mere poetic hyperbole. Known as a ‘búcaro,’ it was among the many covetable crafts brought back to the Old World by Spanish explorers to the New World in the 16th and 17th Centuries,” Grovier writes. [Búcaro] “was known to have served another more surprising function beyond inflecting water with an addictively fragrant flavour. It became something of a fad in 17th-Century Spanish aristocratic circles for girls and young women to nibble at the rims of these porous clay vases and slowly to devour them entirely. A chemical consequence of consuming the foreign clay was a dramatic lightening of the skin to an almost ethereal ghostliness. The urge to change one’s skin tone can be traced back to antiquity and has perennially been driven by a range of cultural motivations. Since the reign of Queen Elizabeth, whose own pale complexion became synonymous with her iconicity, artificially white skin had been established in Europe as a measure of beauty. In warmer climates, it was thought that lighter skin provided proof of affluence and that one’s livelihood was not reliant on labour performed in harsh, skin-darkening sunlight.”

Although less dangerous than other “alternatives to skin lightening, such as smearing one’s face with Venetian ceruse (a topical paste made from lead, vinegar and water) which resulted in blood poisoning, hair loss, and death,” búcaro could trigger hallucinations.

“When we map the physiological and psychotropic effects of búcaro dependency on to the perennial puzzle of Las Meninas, the painting takes on a new and perhaps even eerier complexion. As the epicentre of the canvas’s enigmatic action, the altered and altering consciousness of the Infanta, whose fingers are wrapped around the búcaro […], suddenly expands to the mindset of the painting. Look closely and we can see that Velázquez’s brush is pointing directly at a pigment splotch of the same intense pulsating red on his palette as that from which the búcaro has been magicked into being. As spookily peaky in pallor as a genie conjured from a bottle, the Infanta appears too to levitate from the floor — an effect delicately achieved by the subtle shadow that the artist has subliminally inserted beneath the parachute-like dome of her billowing crinoline dress. Even the Infanta’s parents, whose images hover directly above the lips of the búcaro, begin to appear more like holographic spirits projected from another dimension than mere reflections in a mirror.

Suddenly, we see Las Meninas for what it is — not just a snapshot of a moment in time, but a soulful meditation on the evanescence of the material world and the inevitable evaporation of self. Over the course of his nearly four decades of service to the court, Velázquez witnessed the gradual diminishment of Philip IV’s dominion. The world was slipping away. The crumbly búcaro, a dissoluble trophy of colonial exploits and dwindling imperial power that has the power to reveal realms that lie beyond, is the perfect symbol of that diminuendo and the letting go of the mirage of now. The búcaro ingeniously anchors the woozy scene while at the same time is directly implicated in its wooziness. Simultaneously physical, psychological, and spiritual in its symbolic implications, the búcaro is a keyhole through which the deepest meaning of Velázquez’s masterpiece can be glimpsed and unlocked.”

“The Center of Your Being

Always we hope that someone else has the answer,

some other place will be better,

some other time it will all turn out.

This is it.

No one else has the answer.

No other place will be better.

And it has already turned out.

At the center of your being you have the answers.

And you know what you want.

There is no need to run outside for better seeing,

nor to peer from the window.

Rather abide at the center of your being.

For the more you leave it,

the less you know.

Search your heart and see that

the way to do is to be.”— Lao Tzu