Reading notes (2021, week 6) — On consumer culture, pop futurism and the library of possible futures, and Amazon and the problem of desire

Reading notes is a weekly curation of my tweets. It is, as Michel de Montaigne so beautifully wrote, “a posy of other men’s flowers and nothing but the thread that binds them is mine own.”

In this week’s edition: We need things consumed, burned up, replaced and discarded at an ever-accelerating rate (Victor Lebow, 1955); since the release of Alvin Toffler’s Future Shock 50 years ago, we all want to know what’s coming next; inside the mind of Amazon’s Jeff Bezos; when worldviews collide; Horace’s lyrics of friendship; how should we relate to the work of ‘cancelled’ artists?; against ‘relevance’ in art; and, finally, Apple CEO Tim Cook on misleading users on data exploitation.

A brief history of consumer culture

“Over the course of the 20th century, capitalism preserved its momentum by molding the ordinary person into a consumer with an unquenchable thirst for more stuff,” Kerryn Higgs writes in A Brief History of Consumer Culture, an adaptation of her book Collision Course: Endless Growth on a Finite Planet.

People have always ‘consumed’ the necessities of life, Higgs argues, but before the 20th century, there was little economic motive for increased consumption among the mass of people. Today, however, consumption is frequently seen as our principal role in the world.

“The cardinal features of this culture were acquisition and consumption as the means of achieving happiness; the cult of the new; the democratization of desire; and money value as the predominant measure of all value in society,” the historian William Leach writes in Land of Desire: Merchants, Power, and the Rise of a New American Culture. Significantly, it was individual desire that was democratized, rather than wealth or political and economic power.

“This first wave of consumerism was short-lived. Predicated on debt, it took place in an economy mired in speculation and risky borrowing. U.S. consumer credit rose to $7 billion in the 1920s, with banks engaged in reckless lending of all kinds. Indeed, though a lot less in gross terms than the burden of debt in the United States in late 2008, which Sydney economist Steve Keen has described as ‘the biggest load of unsuccessful gambling in history,’ the debt of the 1920s was very large, over 200 percent of the GDP of the time. In both eras, borrowed money bought unprecedented quantities of material goods on time payment and (these days) credit cards. The 1920s bonanza collapsed suddenly and catastrophically. In 2008, a similar unraveling began; its implications still remain unknown. In the case of the Great Depression of the 1930s, a war economy followed, so it was almost 20 years before mass consumption resumed any role in economic life — or in the way the economy was conceived,” Higgs writes.

“Once World War II was over, consumer culture took off again throughout the developed world, partly fueled by the deprivation of the Great Depression and the rationing of the wartime years and incited with renewed zeal by corporate advertisers using debt facilities and the new medium of television. […]

Though the television sets that carried the advertising into people’s homes after World War II were new, and were far more powerful vehicles of persuasion than radio had been, the theory and methods were the same — perfected in the 1920s by PR experts like [Edward Bernays]. Vance Packard echoes both Bernays and the consumption economists of the 1920s in his description of the role of the advertising men of the 1950s:

‘They want to put some sizzle into their messages by stirring up our status consciousness. … Many of the products they are trying to sell have, in the past, been confined to a «quality market». The products have been the luxuries of the upper classes. The game is to make them the necessities of all classes. This is done by dangling the products before non-upper-class people as status symbols of a higher class. By striving to buy the product — say, wall-to-wall carpeting on instalment — the consumer is made to feel he is upgrading himself socially.’

Though it is status that is being sold, it is endless material objects that are being consumed,” Higgs notes.

“In researching his excellent history of the rise of PR, Stuart Ewen interviewed Bernays himself in 1990, not long before he turned 99. Ewen found Bernays […] to be just as candid about his underlying motivations as he had been in 1928 when he wrote Propaganda:

‘Throughout our conversation, Bernays conveyed his hallucination of democracy: A highly educated class of opinion-molding tacticians is continuously at work … adjusting the mental scenery from which the public mind, with its limited intellect, derives its opinions.… Throughout the interview, he described PR as a response to a transhistoric concern: the requirement, for those people in power, to shape the attitudes of the general population.’

Bernays’s views, like those of several other analysts of the ‘crowd’ and the ‘herd instinct,’ were a product of the panic created among the elite classes by the early 20th-century transition from the limited franchise of propertied men to universal suffrage. ‘On every side of American life, whether political, industrial, social, religious or scientific, the increasing pressure of public judgment has made itself felt,’ Bernays wrote. ‘The great corporation which is in danger of having its profits taxed away or its sales fall off or its freedom impeded by legislative action must have recourse to the public to combat successfully these menaces.’

The opening page of Propaganda discloses his solution:

‘The conscious and intelligent manipulation of the organized habits and opinions of the masses is an important element in democratic society. Those who manipulate this unseen mechanism of society constitute an invisible government which is the true ruling power of our country.… It is they who pull the wires which control the public mind, who harness old social forces and contrive new ways to bind and guide the world.’

“The commodification of reality and the manufacture of demand have had serious implications for the construction of human beings in the late 20th century, where, to quote philosopher Herbert Marcuse, ‘people recognize themselves in their commodities.’ Marcuse’s critique of needs, made more than 50 years ago, was not directed at the issues of scarce resources or ecological waste, although he was aware even at that time that Marx was insufficiently critical of the continuum of progress and that there needed to be ‘a restoration of nature after the horrors of capitalist industrialisation have been done away with.’

Marcuse directed his critique at the way people, in the act of satisfying our aspirations, reproduce dependence on the very exploitive apparatus that perpetuates our servitude. Hours of work in the United States have been growing since 1950, along with a doubling of consumption per capita between 1950 and 1990. Marcuse suggested that this ‘voluntary servitude (voluntary inasmuch as it is introjected into the individual) … can be broken only through a political practice which reaches the roots of containment and contentment in the infrastructure of man, a political practice of methodical disengagement from and refusal of the Establishment, aiming at a radical transvaluation of values.’

[…]

The capitalist system, dependent on a logic of never-ending growth from its earliest inception, confronted the plenty it created in its home states, especially the United States, as a threat to its very existence. It would not do if people were content because they felt they had enough. However, over the course of the 20th century, capitalism preserved its momentum by molding the ordinary person into a consumer with an unquenchable thirst for its ‘wonderful stuff.’”

The library of possible futures

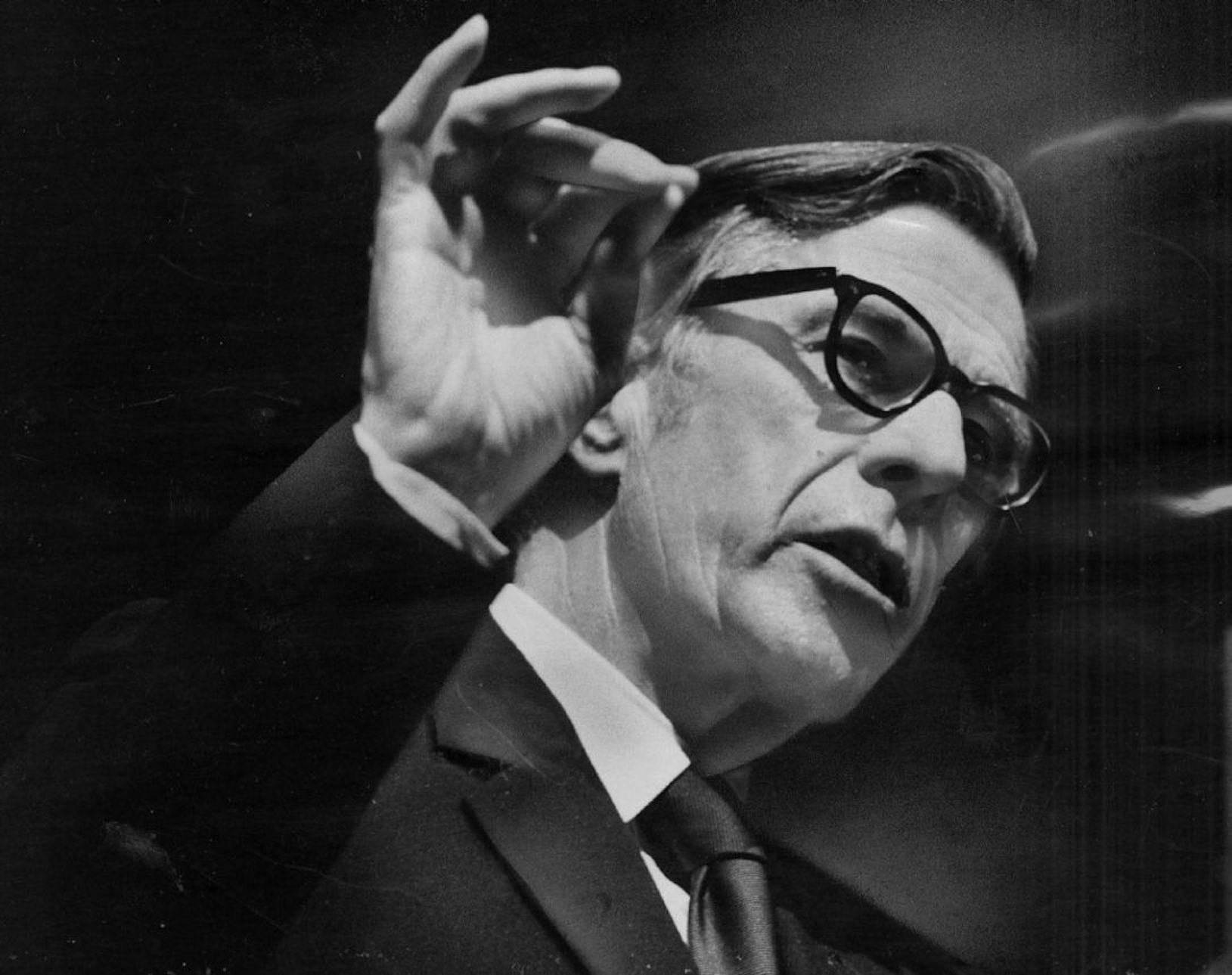

“Since the release of Alvin Toffler’s Future Shock 50 years ago, the allure of speculative nonfiction has remained the same: We all want to know what’s coming next,” Samantha Culp writes in The Library of Possible Futures.

“[T]he most important promise underlying much of the canon inaugurated by Future Shock [a genre we might call ‘pop futurism’] is that with the right foresight, readers can not only prepare for what’s coming, but also profit from it. This whiff of insider trading presents the future as a commodity, an exercise in temporal arbitrage in which knowledge of new developments yields a financial edge. It’s no coincidence that the authors of such works have historically skewed white, male, and capitalist in mindset; many of them work as futurists, consulting in the gray area between business, government, technology, advertising, and science fiction,” Culp writes.

“The book’s central argument may be recognizable, perhaps because Toffler argued it so convincingly that it became a cliché: The world was changing at an exponentially accelerating pace, leaving humans in a state of ‘shock’ and struggling to keep up. At least in the countries of the developed West, society experienced a profound historical shift as the industrial revolution gave way to information economies; that shift was in turn sped up by new technologies of mass communication. Confronted by a tsunami of change, most people were anxious, disoriented, and confused. The book sought to diagnose this novel condition, to show its ‘sources and symptoms,’ and to ponder possible ways to mitigate its effects.

The chief strategy for combatting future shock, according to Toffler, was to double down on the future itself. He called for government committees to fund large-scale future studies, for science-fiction writers to bring more methodical forecasting to their stories, for American schools to teach future-oriented courses (as a counterpoint to history classes). ‘To create such images and thereby soften the impact of future shock,’ he wrote, ‘we must begin by making speculation about the future respectable.’ […]

Beyond its substance, though, Future Shock resonated just as much for its style. Taking a cue from the Canadian media theorist Marshall McLuhan, whose ability to deliver big concepts in snappy sound bites was obviously an inspiration, Toffler made the medium the message. His tone is equal parts alarmed and energized, professorial and breathless. He speaks of a ‘fire storm of change,’ the ‘electric impact’ of new concepts, populations ‘rocketing’; his language emulates the propulsive speed he’s describing. He shares catchy terms like ad-hocracy; he makes the future the most spectacular show on Earth, and you’d be foolish to look away. Even when Toffler depicts the potential dangers of accelerated change — offshore drilling accidents, for example, or decision-making algorithms — and proclaims the necessity of regulation, he believes that solutions must come from a thorough orientation toward future transformations. ‘The power of the technological drive is too great to be stopped by Luddite paroxysms,’ he writes, and the book, despite its occasional cautionary tone, intoxicatingly sets the terms of its own worldview. ‘Is all this exaggerated?’ he famously asks, before answering his own question. ‘I think not.’”

“[A]s the discipline and definition of ‘futures thinking’ transforms, expanding through different voices, geographies, and ideological frameworks, the pop-futurist book is transforming along with it, sometimes challenging Toffler’s footsteps, if not taking a new path altogether. Last year saw the release of After Shock, an official tribute to Future Shock: The anthology featured homages and, notably, a few critiques, by more than 100 contemporary futurists and thinkers. Representing a step in a different direction, How to Future shares strategies about how to manage and create change in various contexts, far beyond just the commercial or technological realm. Written by Scott Smith and the sci-fi writer Madeline Ashby, it admirably attempts to open up the ‘futuring toolkit’ for diverse audiences and goals, illustrating the application of methodologies such as scenario planning […] in fields such as nonprofit management and public health. […]

The oft-cited concept of ‘long-term thinking’ also pops up in several recent books as a crucial tool for addressing a different existential threat: the climate emergency. In The Good Ancestor, the philosopher Roman Krznaric calmly calls for a reorientation toward the future, not to benefit us (as is typically the pitch of the pop-futurist book), but to benefit our far-off descendants. He uses the term cathedral thinking to describe epic projects that will not be completed within our lifetimes, but that are crucial to start now — similar to the work of generations who built medieval cathedrals that only their great-grandchildren would see finished. If Future Shock and its ilk crystallized the image of the future as a great wave roaring toward us, inevitable and crushing, the central metaphor throughout Krznaric’s book […] is the acorn. The point is not just to, say, plant trees (though literal reforestation is indeed described as a vital long-term project), but to emphasize the agency of the present moment and the potential, however imperfect, to influence the future.

This theme is perhaps most inventively explored in pop-futurist projects that go beyond the confines of the book entirely. One is Afro-Rithms From the Future, a card game that challenges players to imagine future scenarios with an explicit focus on issues of social justice and inequality. […] Unlike a linear book, interactive card decks and collective storytelling projects may best embody the strange, mutable, participatory ways the actual future unfolds.”

“[P]erhaps this is the year when we’ll be able to see most clearly that we need an entirely new way of talking about the future if we are to shape it into something equitable and sustainable for all. Now, just as in 1970, the future is made by intricate interactions of people, systems, communities, material and environmental conditions — and by the stories that influence those relationships. This new chapter of pop futurism shows its enduring appeal as a familiar dialect, even if the message it carries is now an urgently different one. It might still have the potential to illustrate new visions of the future for a healthier world, but it needs to feel as vivid and magnetic as Future Shock did 50 years ago. As Toffler and his acolytes once made the accelerated, profit-driven future dazzling, the next generation of thinkers is trying to reconcile this paradox — to conjure slower, more restorative, community-driven futures that are just as irresistible,” Culp concludes.

Amazon and the problem of desire

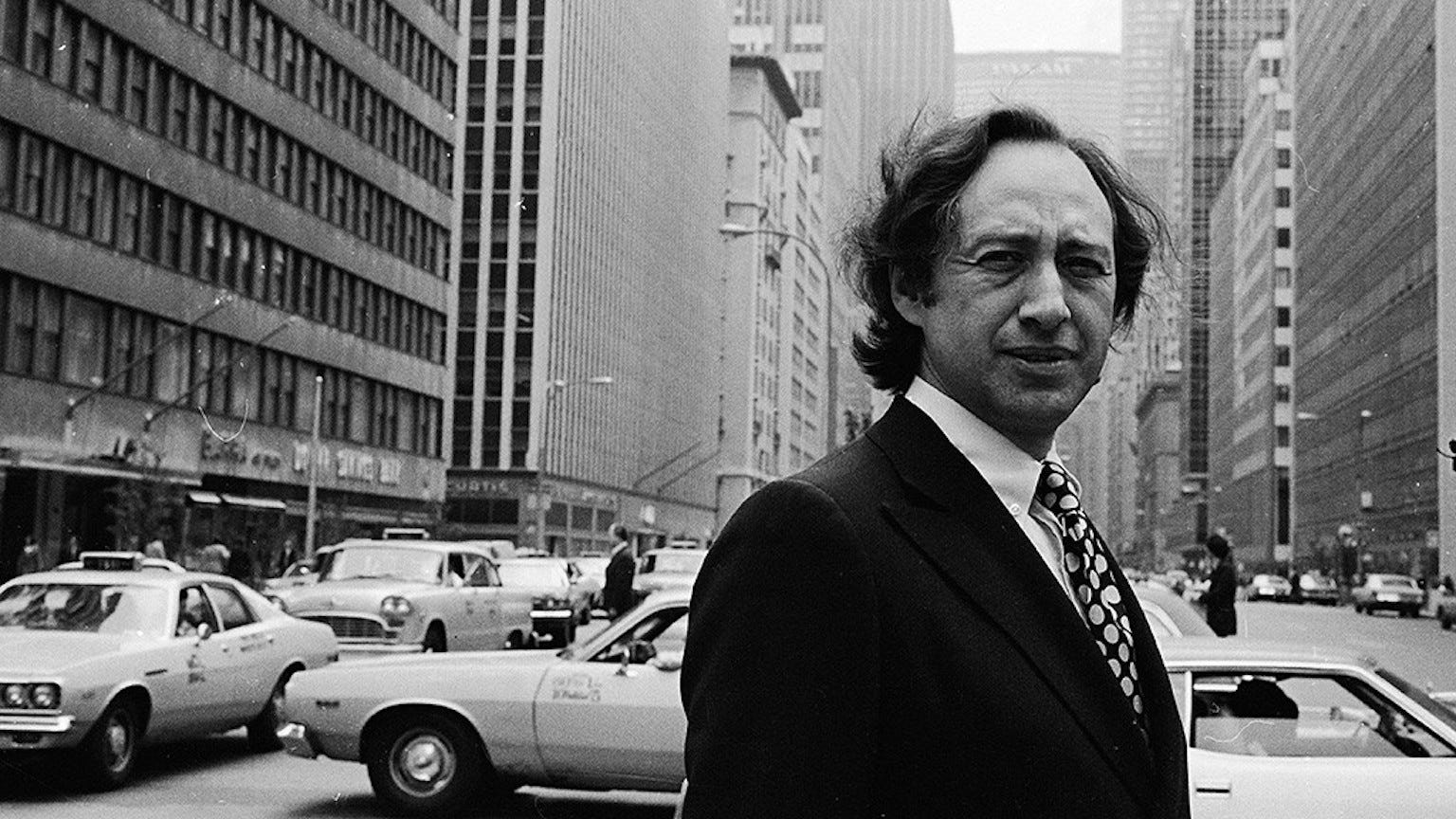

“A world in which consumer goods are rationed is not a world in which Amazon could continue its endless growth. We must follow, in other words, the path of relentlessness, and we must follow it off the face of the Earth. It’s here that ‘relentlessness’ is revealed as an ideological term. Bezos seems less interested in protecting the future of the planet than protecting the future of capitalism,” Mark O’Connell writes in ‘A managerial Mephistopheles’: inside the mind of Jeff Bezos.

But what are we to make of these celestial aspirations, this vision of the good life as one of endless consumption, limitless growth? And what of the man himself?

“Bezos himself, like seemingly all wealthy entrepreneurs, claims not to be motivated by — or even especially interested in — wealth itself, or its mundane trappings. As he puts it in [Invent & Wander], he never sought the title of ‘world’s richest man.’ […] He would much rather be known, he says, as ‘inventor Jeff Bezos’ [emphasis by the author]. In the statement he made to investors earlier this week on stepping down as chief executive, Bezos once more returned to the idea of himself as an inventor: ‘Amazon is what it is,’ he said, ‘because of invention … When you look at our financial results, what you’re actually seeing are the long-run cumulative results of invention.’

The book, in particular its introduction, by the biographer Walter Isaacson, who is best known for his book about Steve Jobs, is intent on framing Bezos as a contemporary renaissance man, a figure on the revolutionary order of your Leonardos, your Einsteins, your Ben Franklins (all of whom Isaacson has written books about). After running through the qualities he considers peculiar to such ‘true innovators’ — passionate curiosity, an equal love of both the arts and the sciences, a Jobs-like ‘reality distortion field’ that inspires people to pursue apparently impossible ends, and a childlike sense of wonder — Isaacson concludes that Bezos embodies all of them, and that he belongs in the pantheon of truly revolutionary thinkers.

If you believe that capitalism is an inherently just and meritocratic system, whereby the most worthy people — the hardest-working, the cleverest, the most innovative — amass the greatest wealth, then it stands to reason that you would have to make some kind of argument for a man who had amassed more than $180bn in personal wealth as a presiding genius of our time. And just as Hegel looked at Napoleon and saw the world-soul on horseback, Isaacson views Bezos in similarly heroic light: the world-soul dispatched by delivery drone. The effort to portray him as such is, though, inevitably beset by bathos. ‘An example of how Bezos innovates and operates,’ he writes, ‘was the launch of Amazon Prime, which transformed the way Americans think about how quickly and cheaply they can be gratified by ordering online.’ There is no question that the introduction of Amazon Prime marked a major moment in the history of buying stuff off the internet, but to present it as the work of an ingenious inventor seems a stretch. For all the vastness of Bezos’s wealth and power, the banality of its foundation is undeniable. (There is, here, an unintended comedy to Isaacson’s hagiography, taking on as it does an almost mock-heroic tone: Bezos fomenting a kind of revolution in consciousness, around how ‘quickly and cheaply’ consumers can get the stuff they order off the internet.)” O’Connell writes.

Although Bezos has not been personally responsible for the introduction of any new technology into the world, “it would clearly be wrong to claim that there is nothing radical about the nature of Bezos’s achievement. Amazon’s vast logistical innovations have made the consumer experience, from order to delivery, as frictionless as possible, and in so doing have changed the nature of consumerism. This is to say that it has changed the texture of the world. It’s not that Bezos is doing any one thing that no one had thought to do before: it’s that he’s doing it faster, more efficiently, and at unprecedented scale. His achievement, in this sense, can be seen as one not of quality but of quantity. But the sheer scale of the quantity, the unprecedented mass and velocity of Amazon’s power, becomes itself qualitative — in the way that getting stung by two bees is quantitatively different from getting stung by one, but getting stung by a billion would be qualitatively different.”

In 2016, Amazon was granted a patent for a Human Transport Device, a cage just large enough to contain one worker. “The patent went unremarked for two years until the academics Kate Crawford and Vladan Joler discovered it, and wrote about it in a document that accompanied a work (part research project, part art installation) entitled Anatomy of an AI System — a sprawling diagram, two metres high and five metres across, mapping the complex nexus of extractive and exploitative processes involved in the functioning of an Amazon Echo. The patent, they write, ‘represents an extraordinary illustration of worker alienation, a stark moment in the relationship between humans and machines … Here, the worker becomes a part of a machinic ballet, held upright in a cage which dictates and constrains their movement.’

Amazon never put the cage into production; when the patent was uncovered, the public reaction was one of horror, and the company acknowledged the whole thing as a terrible idea.” But for O’Connell, “[t]he cage suggests a way of thinking about what distinguishes Amazon as a business, and Bezos as an innovator: the use of technology to push harder and farther than any previous company toward removing, at both ends of the supply chain, the human limitations to capital’s efficiency. Amazon reveals a world, that is, where capital is not something to be acquired in service of human ends, but as an end in itself. If other worlds must be constructed, slowly rotating in space, in order to serve these post-human ends, then so be it. The problem with the cage patent, in other words, was not just that it was dehumanising, but that it illustrated too explicitly the patterns of dehumanisation — of relentlessness in pursuit of customer ecstasy — that had already long been central to Amazon’s operations in the first place.

It’s tempting to argue that Amazon’s true innovation has been the ruthless exploitation of human labour in service of speed and efficiency, but that’s really only part of the picture: the aim is removing humans — with their need for toilet breaks, their stubborn insistence on sleeping, their tendency to unionise — as much as possible from the equation; the grim specifics of the labour conditions are only ever a byproduct of that aim. This has been an aim of capitalism since at least as far back as Henry Ford, and in an obvious sense it’s precisely the dynamic you experience every time you wind up with unexpected items in the bagging area at Tesco. As usual with Amazon, it’s not that something new is happening — it’s that an old thing is happening with unprecedented force, speed and efficiency,” O’Connell writes.

It’s easy to say no ethical consumption is possible under capitalism because “the entire system within which we live is so morally bankrupt that no kind of decent accommodation with it can be reached — which would not be untrue, but also unquestionably something of a cop-out.” According to O’Connell, the core of the problem is that “Amazon works too well. Its success and ubiquity as a consumer phenomenon makes a mockery of my ethical objections to its existence. […] Amazon thrusts my identity as a consumer into open conflict with my other identities — writer of books, holder of vaguely socialist ideals — in such a way that my consumer identity too often prevails.

We live in a world where the satisfaction of quotidian desires is the work of mere moments. Whatever you want (and can afford) can be brought to you, cheaply and with vanishingly minimal effort on your part. We know that this form of satisfaction doesn’t make us any happier, and in fact only damages the world we live in, but even so we continue pursuing it — perhaps because it’s in our nature, or perhaps because almost every element of the culture we exist in is calibrated to make us do so.

And so the problem, as such, is one of desire. It’s not that Amazon gives me what I want; it’s that it gives me what I don’t want to want. I want the convenience and speed and efficiency that Amazon offers, but I’d rather not want it if it entails all the bad things that go with it. To put it in Freudian terms, we are talking about the triumph of the consumerist id over the ethical superego. Bezos is a kind of managerial Mephistopheles for our time, who will guarantee you a life of worldly customer ecstasy as long as you avert your eyes from the iniquities being carried out in your name.”

And also this…

“The more successful science became in describing nature and in facilitating the manipulation of its materials to create technologies and prosperity, the further it placed itself from the complex subjectivities of humans, which became part of the humanities and the arts,” Marcelo Gleiser writes in When worldviews collide: Why science needs to be taught differently.

“Despite much protesting from the early 19th-century Romantics, the agenda set forth by the Enlightenment placed the centrality of reason above all else. Universities, the seats of learning and knowledge creation, were divided into a proliferating number of departments, split from one another by high walls, each discipline with its own methodology and language, goals and essential questions. This fragmentation of knowledge inside and outside of academia is the hallmark of our times, an amplification of the clash of The Two Cultures that physicist and novelist C.P. Snow admonished his Cambridge colleagues for in 1959. Snow would surely be appalled to see that this fragmentation is representative of a much larger tribal fracturing that continues to spread across the world at alarming speeds.”

“[Four hundred] years since Galileo, the time has come to rethink how high the walls separating the sciences from the humanities and the arts should be. This is especially true in education at all levels, both formal and informal. It is not an accident that distrust in science is rampant in this country and others. The teaching of science boasts its separation from our humanity, relegating subjective and existential concerns as secondary. The teaching of the humanities distances itself from the sciences. In the overwhelming majority of cases, a science class is strictly about technical content, the programmatic instructing of the tools and jargon needed to enter the guild. Students don’t learn about the scientists themselves, the cultural context of their times, or the struggles and challenges, often very dramatic, that colored their research path.

Traditional science teaching adopts what could be called the conquering mode: It’s all about the final results, not about the difficulties of the process, the failures and the challenges that humanize science. This dehumanizing approach works as a cleaver, splitting students and the public into two distinct groups: those who embrace a dehumanized science teaching and those who shun it. […]

Science doesn’t exist in a cultural and existential vacuum and its teaching shouldn’t either. I say this after 30 years of classroom experience, both in technical and nontechnical science classes. Although teachers are always pressed for time to cover their assigned syllabi, they will be educating and inspiring better scientists and citizens if they take the time to humanize the science they teach.”

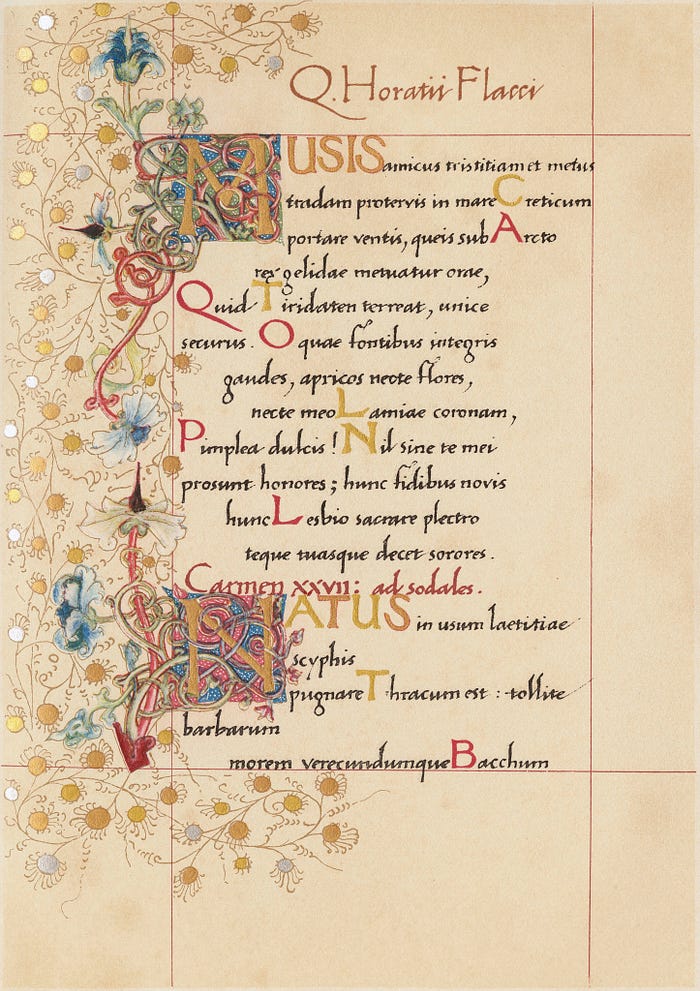

According to Stephanie McCarter, Horace’s lyrics of friendship offer hope to our troubled world.

“It is of course death that’s the greatest source of anxiety for many human beings, and in his lyric poems Horace repeatedly holds out friendship as the best possible antidote to such despair. Instead of shielding us from our mortal condition, he reminds us of it constantly. Each of us will die, and then there will be no wine, no love, no friendship. The finality of death is his most constant refrain. Once we descend to the Underworld, ‘we’re nothing more than dust and shadow’ (Odes 4.7). Such ephemerality demands friendship — without it, our mortal lives are squandered.

Friendship indeed becomes acutely important as one ages. Horace, especially in the Odes, is perhaps the foremost poet of middle-aged friendship. War and love — the stuff that leads to division — are features instead of youth’s impetuosity. In one movingly beautiful poem (Odes 2.11), Horace urges his fellow middle-aged friend Quinctius to put aside his ‘gnawing anxieties’ about the future and to focus instead on the here-and-now within his grasp. Quinctius’ fleeting life, his loss of ‘smooth-cheeked youthfulness and grace,’ makes doing so all the more pressing. Horace depicts a private, idealised landscape, where together he and Quinctius can recline beneath a plane or pine and ‘drink while we can.’

This call to ‘drink while we can’ folds friendship into what is perhaps Horace’s most abiding theme: carpe diem, a tag coined in Odes 1.11. This phrase is commonly misunderstood — it has little to do with the instant gratification traditionally associated with it. Carpe suggests not seizing but plucking or harvesting the day as if it were ripe fruit. To seize the day is to recognise and savour what is at hand for enjoyment in each moment of our mortal lives. Such savouring can best be done in the company of friends.”

Despite his shortcomings — Horatian friendship “frequently defines itself in opposition to outsiders, with Horace contrasting his inner circle with various ‘others,’ such as foreigners” — it is still Horace to whom McCarter turns again and again in her own ephemeral life, “mining his words for ways to forge connections and live well. His lyrics remind me constantly of the precious value of the relationships I’ve built with those I love, no matter how different these relationships might look from those of Horace. Over the years, he himself has become a kind of friend to me, and he continues to guide me through the political anxiety, the unfathomable death, and the seemingly neverending separation that define this specific moment in which I live. I am not naive enough to think that friendship is all that this moment requires, but I do know this moment requires friendship if I’m to face it without despair. And so I let Horace’s refrains run through my mind to keep my eyes focused on what’s in my grasp right now and to give me hope for what comes next.”

“In engaging with the output of compromised figures, we must decipher whether the artist’s misdemeanours have a bearing on the moral questions arising from their work,” Noël Carroll, a professor of philosophy and the author of Art in Three Dimensions and Beyond Aesthetics, argues in How should we relate to the work of “cancelled” artists?

In recent years, many art lovers have asked themselves what their response to dishonoured artists should be. “Some have decided to ‘cancel’ the offending artists,” but “these responses are not responses to the artworks themselves. They are motivated by external reasons pertaining to the artist’s standing and to financial issues,” Carroll writes. These external reasons “can be contrasted with what might be called ‘reasons of art’ — reasons that grow out of our transactions with the artwork itself, reasons internal to the experience of the work. […] Sometimes these reasons involve moral factors that arise from our very engagement with the works of culpable artists. In such cases, we may wonder whether internal reasons alone could count against our having any contact with blemished goods.”

Carroll argues that the art lover should feel free to savour the work if there is no connection between the known moral misbehaviour of the artist and the moral content of the work. For example, “Roald Dahl’s anti-Semitism does not give us an internal reason to forsake his children’s stories which do not traffic in this prejudice. If James and the Giant Peach does not internally prescribe our endorsement of anti-Semitism, why cancel it?”

But why, Carroll asks, put such weight on the artist’s bad behaviour as a clue to their endorsement of evil? Shouldn’t the conscientious art lover feel uncomfortable in the face of the mere portrayal of evil? “No,” says Carroll, “the mere portrayal of evil does not always signal endorsement.”

Take David Chase, the creator of The Sopranos, who “presented the mafioso Tony Soprano as a harried family man, thereby eliciting positive feelings for him from many viewers. But the show did not endorse his criminal activities. Rather, it unmasked the way in which the excuse — ‘I did it all to take care of my family’ — can serve as a rationalisation for the most heinous transgressions. In doing so, it asked the viewers to reflect upon this rationalisation, perhaps even in their own lives.

Similarly, apparent evil is sometimes lionised for the sake of what might be called ‘moral immoralism,’ where the actions in question are designed to challenge the dubious constraints of conventional morality. For example, violations of established sexual mores, as portrayed in DH Lawrence’s Lady Chatterley’s Lover, may be foregrounded in order to subvert the status quo, so that what appears immoral serves a higher or more moral morality.

For these reasons, and others maybe even more obvious, the simple portrayal of evil cannot be read as an endorsement of evil. Yet when an artist has been found guilty in their daily life of the very crimes and misdemeanours that are exhibited in their work, then it seems reasonable to suspect that we are meant to endorse them — to embrace a positive attitude toward them. And this is something a righteous art lover may resist, even to the point of closing the book and putting it aside. Of course, this may not be the final word. There may be reasons to open the book again, possibly to gain a better grasp of the corruption involved. Nevertheless, what I have called ‘reasons of art’ can play a legitimate role in deliberating about what is to be done with respect to disgraced artists. And whether we continue to engage with an artist’s work may depend on weighing our external reasons against our internal ones.”

“I can’t bear the thought that art is a zero-sum game, that we have to choose which kinds of stories are relevant, which lives have value; I can’t bear the thought that works of art exist only at the expense of other works of art, that books are locked in some ferocious competition for space. Maybe there is virtue in rejecting any reality construed along these lines; maybe there are certain choices that so deform our character that no claim of necessity can justify them. Besides, the rhetoric of scarcity often turns out to be exaggerated. Our time and attention might be more like the loaves and fishes than we think. After all, we could always cancel our Netflix subscriptions; we could always delete our Twitter accounts,” Garth Greenwell writes in Making Meaning, an essay in Harper’s Magazine against ‘relevance’ in art.

“Our misunderstanding of relevance may come from a single word in the OED definition: ‘Appropriate or applicable in the (esp. current) context.’ In the age of Twitter feeds and nonstop news, our overwhelming glut of novelty, something has become disordered in our sense of the relationship between art and time. I’m interested in literary projects that attempt to write at the speed of our present moment — projects like Ali Smith’s seasonal cycle of novels, or, somewhat differently, Karl Ove Knausgaard’s My Struggle. But a more profound capacity of art is its ability to speak across time, and not just time, but also across geography, language, culture, class — the very attributes that now determine ‘relevance.’ Literature is an extraordinary technology for the transmission of consciousness, which is what makes it worth devoting a life to. It seems little less than miraculous that I can read Sappho across millennia, Yukio Mishima across languages, Chimamanda Ngozi Adichie across continents, and feel both that I am experiencing worlds alien to me and that I am being shown essential truths about myself,” Greenwell writes.

“One reason subject matter has become central to discussions of art in this era of hot takes and think pieces is that viewing art as subject matter, especially political subject matter, makes it voluble, productive of discourse. When I consider the subject matter of a work of art, or its political or social context, I want to talk; when I consider its form, I want to contemplate. But commentary about art that says nothing about form is nearly useless; it almost always misses the point, because form is the distinctive feature of art, its defining property. Aesthetic form is charged with affective and intellectual significance, with human intention, in a way that non-aesthetic forms are not. This is what makes a poem or a novel different from a newspaper article or an encyclopedia entry.

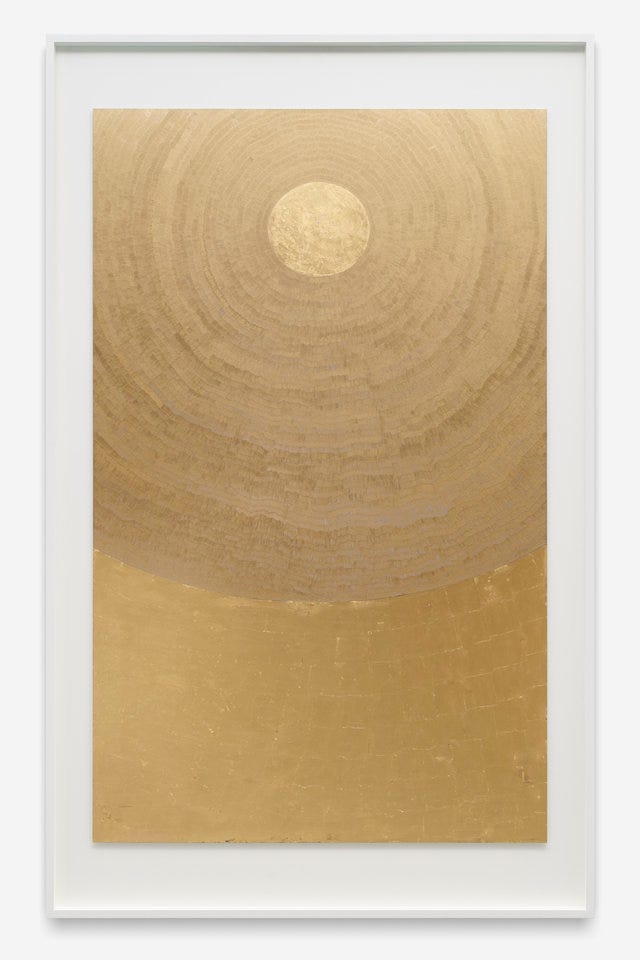

There is a painting on the wall of my studio, a work by a young American artist named Slater Bradley. I’ve learned a few things about Bradley over the past year, but I first encountered this painting in a state of perfect innocence, when I saw it hanging in a gallery in Lisbon, where I was teaching at a writers’ conference. I was accompanying another writer, who wanted to see the work of a photographer she liked, and we were both struck by an exhibition of Bradley’s canvases in the gallery’s main space. The paintings were large, some broken into geometrical shapes, gold and silver and black, some simple fields of a single color. They contained an elaborate symbolism, a mix of astrology and Eastern metaphysics, which immediately aroused my skepticism and still has yet to catch my interest. But the paintings were beautiful, and one of them, the smallest, grabbed hold of me in a way I’ve felt only a few times in my life. It’s a block of blue on a surface mounted on a white mat, the whole enclosed in a brass frame. From across the gallery, a warehouselike space with cement floors and white walls, lit through a strip of high windows by Lisbon’s extraordinary summer sun, it looked like undifferentiated color, a weirdly textured and captivating blue. Then the room darkened dramatically — a cloud passed in front of the sun — and the painting transformed: it brightened and became luminous, an effect I have become familiar with but not accustomed to, and which I have no way of explaining. The painting communicated a sense of stillness infused with vibrancy — a quality I find in much of the art I love, something I’ve characterized elsewhere as being like ‘a flame submerged in glass.’ It’s a stillness that reminds me that stasis was also the Greek word for sedition, for that decidedly unplacid political stalemate that can erupt in civil war.

Up close, the stillness dissolves, or is troubled: the painting consists of thousands of small hatch marks, short vertical strokes made in horizontal bands, applied in what the artist has described as a kind of meditative discipline. The experience I had viewing it was something like love, what the French call a coup de foudre, a thunderbolt, and I knew that I wanted to feel its effect again and again; I knew that it was something that would be, in some way I didn’t fully understand, useful to me. And so, thanks to haggling, a drawn-out schedule of payments generously accepted by the gallery, and the forbearance of my partner, it now hangs behind my desk, where I can feel it almost buzzing as I work.

It would be difficult for me to say anything about the social and political relevance of Bradley’s work, though of course the work is embedded in social and political contexts, the arrangements of the world that made it possible for Bradley to create it and for me to hang it in my writing room. It would be difficult to make the work voluble in ways intelligible to the idea of relevance I find inadequate. And yet, when I think of the real relevance of art, I think of this painting, which reminds me of that oldest sense of relever, the shared parent of relevant and relieve, and of its physical, bodily meaning: to put back into an upright position. That was what I felt at the gallery in Lisbon, and it’s what I feel now, writing these words with the painting at my back: that I am being restored, set upright, reminded of a frequency I need to tune myself to catch. This is the real relevance of art, I think, this lifting up, this challenge to lift myself up. It helps me to do my work, this mystery hanging at my back; it helps me to live my life.”

“If a business is built on misleading users on data exploitation, on choices that are no choices at all, then it does not deserve our praise. It deserves reform.

We should not look away from the bigger picture. At a moment of rampant disinformation and conspiracy theories juiced by algorithms, we can no longer turn a blind eye to a theory of technology that says all engagement is good engagement, the longer the better, and all with the goal of collecting as much data as possible.

Too many are still asking the question, ‘How much can we get away with?’, when they need to be asking, ‘What are the consequences?’” — Apple CEO Tim Cook, from a recent speech in Brussels marking International Data Privacy Day