Reading notes (2021, week 9) — On teaching literature like science, ‘believe science,’ and a ‘new’ interdisciplinary approach to education

Reading notes is a weekly curation of my tweets. It is, as Michel de Montaigne so beautifully wrote, “a posy of other men’s flowers and nothing but the thread that binds them is mine own.”

In this week’s edition: Literature is a machine that accelerates the human brain; science is simply assumed to reign supreme; in the age of Wikipedia, is it better to study everything?; imagining a different kind of platform economy; time, like memory, is fickle; how to speak in public; monument created in memory of the Russian avant-garde artist and art theorist, Kazimir Malevich; and, finally, Bill Gates on climate change.

Teaching literature like science

“[N]arrative and stories are the most powerful thing that humans have. They are what have allowed Elon Musk to hijack our modern conversation about Mars and the future. That’s all narrative. There’s nothing actually new about SpaceX. It’s just NASA. But Musk has been able to tell this story.

So if you want to be successful in business, if you want to be successful in science, if you want to be successful in anything, you need to understand stories and how they work. On a fundamental level, there’s no better way to understand stories than through literature.” — Angus Fletcher

“In the past quarter century, enrollment in college English departments has sunk like the Pequod in Moby Dick. Meanwhile, enrollment in science programs has skyrocketed. It’s understandable. Elon Musk, not Herman Melville, is the role model of the digital economy.” But Angus Fletcher, an English professor at Ohio State University, says it doesn’t have to be that way, Kevin Berger writes in Literature Should Be Taught Like Science.

Fletcher says he is part of a ‘group of renegades’ who are on a mission to plug literature back into the electric heart of contemporary life and culture. He has a plan — ‘apply science and engineering to literature’ — and a syllabus, Wonderworks: The 25 Most Powerful Inventions in the History of Literature, in which he examines how writers — from ancient Mesopotamia to Elena Ferrante — have created technical breakthroughs, rivaling scientific inventions, and engineering enhancements to the human heart and mind.

In a conversation with Nautilus Magazine’s Kevin Berger, Fletcher talks about his provocative view that to save the humanities, literature must be taught as a science.

In the past 20 years, enrollment in English departments has dropped by 25 percent, while enrollment in STEM classes has doubled. Why is that?

[Angus Fletcher] “I can tell you exactly why that is. English literature is not being taught in a way that is connecting with people. We’ve been taught in school to interpret literature, to say what it means, to identify its themes and arguments. But when you do that, you’re working against literature. I’m saying we need to find these technologies, these inventions, and connect them to your head, see what they can do for your brain. Literature isn’t about telling you what’s right or wrong or about giving you ideas. It’s about helping you troubleshoot your own head.”

The chapters in Wonderworks include fun names for the inventions of literature. The names include ‘Almighty Heart,’ ‘Serenity Elevator,’ ‘Sorrow Resolver,’ ‘Virtual Scientist.’ How did literature invent the virtual scientist?

[Angus Fletcher] “A virtual scientist has no ego. Our brains are born scientists. From the moment we’re born, we make hypotheses about what will happen if we do something. Then we test those hypotheses. We might say, ‘If I stick my hand in the fire, it might burn.’ And then we stick our hand in the fire. We’re like, ‘Yes, that did burn.’ We’re constantly running these experiments in our lives. We build stories and narratives as a way of organizing them. But what holds us back as scientists is our egos. That’s because we just don’t like to think we’re wrong. Present someone with a piece of data that contradicts what they think and they will deny your data. They will bend your data to fit their hypothesis. This is an endemic psychological process of the human mind.

So the question is, ‘How do we become better scientists?’ The way to do that is to remove our ego. Literature provides us the space to do that. What is Sherlock Holmes doing? Sherlock Holmes has given us a problem that we need to solve experimentally by positing hypotheses, by testing them. But we’re not Sherlock Holmes and so it’s not embarrassing if we happen to be wrong. Sherlock Holmes and tons of great detective fiction allow us to play virtual scientists by going into a space where our ego doesn’t exist and we can practice our scientific method of making predictions and testing them.”

Wonderworks made me a little anxious. I’m sure you know Auden’s lines from In Memory of W.B. Yeats: ‘For poetry makes nothing happen: it survives/In the valley of its making where executives/Would never want to tamper…’ Reading literature as instructional makes me a little queasy. I read Proust for his mad rhapsodies and not for his ‘therapeutic effect.’ That seems reductive, or worse, like self-help.

[Angus Fletcher] “If I was saying, […] ‘I want to delete all the other works of literary criticism in the world and I want everyone to only read Wonderworks,’ then I would agree with you. But what I’m saying is there are lots of gorgeous, wonderful, important works of literary criticism that celebrate exactly the qualities you’re talking about, but there’s not a single book out there that does what Wonderworks does. I’m not trying to stop people from reading those other books. I’m just trying to say that we have thousands of those books already out there — and the humanities are still collapsing. The reality is if we don’t figure out a way to turn around this slide, there aren’t going to be classes anymore on Proust. There aren’t going to be classes on the writers we love. We have to figure out a way to balance desire for the pure, non-utilitarian nature of literature with the recognition that pragmatism isn’t bad.”

I’ve never heard it put like that before: Pragmatism isn’t bad for literature.

[Angus Fletcher] “It’s not. Pragmatism isn’t the enemy of beauty. Pragmatism allows beauty to happen. If you’re not fed, if you don’t have a museum, if you don’t have paints, if you don’t have ink and printing presses, you don’t have beauty. So let’s acknowledge that pragmatism can work to support beauty and not displace or replace it. In the same way that the body supports the mind, let’s feed the body. Let’s see what literature can do for our physical nature.”

Are you saying we’ve lost the pragmatic value of art?

[Angus Fletcher] “Yes, we’ve lost a crucial part of literature and art — the part that works below our conscious mind on these deep parts of our brain, the parts that give us joy and optimism and hope and healing. By recovering those parts, we can allow all the things that are going on in English literature departments to continue. But if we don’t recover those parts, English departments are going to be gone.”

Do you think a need for literature is baked into our evolutionary nature?

[Angus Fletcher] “What I think is baked into our evolutionary nature is our need for meaning. What’s also baked into our evolutionary nature is our desire to tell stories from a beginning to an end. When we come into this world, we think, ‘Where’s this story going?’ For today to matter to us, we need to be able to see tomorrow and the next day.

In the same way, we’re obsessed with the question, ‘Where did it all come from?’ Other animals aren’t obsessed with this question. But humans are. ‘What was our origin? Where did the universe come from?’ These are intrinsic questions in our brains. And literature from the beginning was the most effective way of answering them by spinning time backward and forward in fictional ways. Literature is very effective at generating a sense of wonder, which is the most basic and primordial spiritual experience.

We need wonder in our days. That’s why most of us, when we read poetry, don’t like to think of it as being utilitarian. We like to think of it as bigger than that, as the purpose beyond. But our brains are only capable of a neurological spiritual experience. That’s why we need literature. Our brains need literature the way that our bodies need food. Literature provides our brains with basic sustenance.”

Believe science

“Believe science has become a comforting social media slogan amid the past year’s chaos, but this platitude has an undertone that runs contrary to the true spirit of scientific inquiry. All too often believe science means obey authority and is used as a way to shut down debate. Science is simply assumed to reign supreme,” John McLaughlin writes in Do You Believe Science?

“As science popularizers typically describe it, the scientific method involves observing the world around us, formulating hypotheses to explain our observations and testing those hypotheses by experiment. But this simple formula has a long and complex history,” which begins with Aristotle in the fourth century BCE: “unlike his mentor Plato, Aristotle believed that the universal principles, or forms, of nature were best understood through a careful investigation of the natural world,” McLaughlin writes.

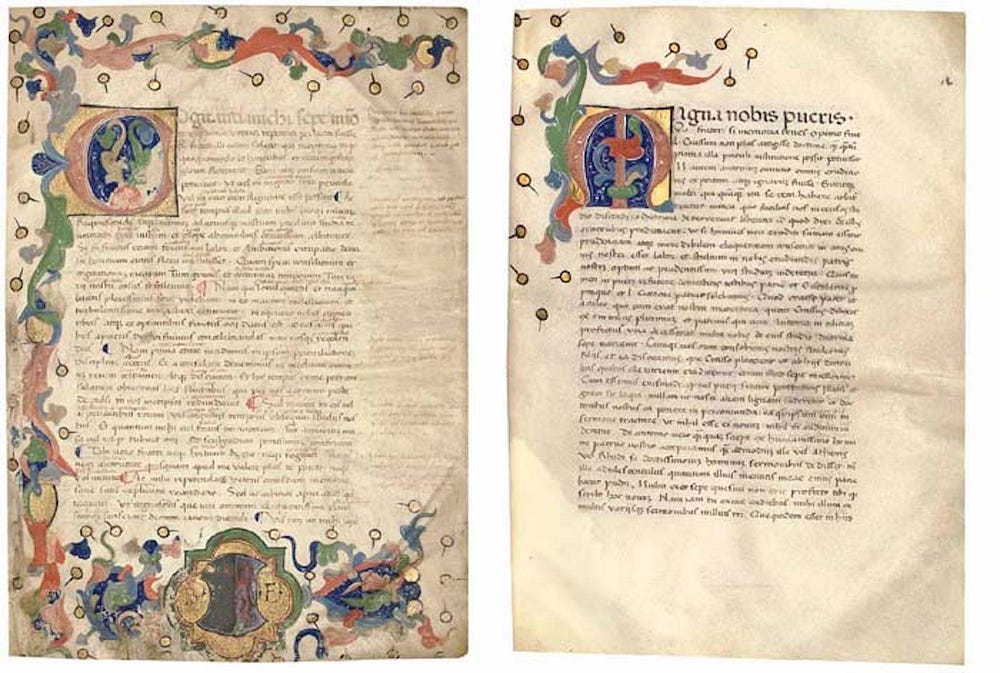

It wasn’t until the 17th century that the English philosopher and statesman Francis Bacon “laid down a firmer grounding for the scientific method. New technical instruments had begun to give people the ability to peer deeper and farther into nature and Bacon was eager to explore the wealth of experimental evidence they could provide. Finding Aristotle’s syllogistic method inadequate to describe the complexity of the natural world, Bacon undertook his ‘great renewal,’ a grand project to endow the sciences with a new, more rigorous methodology. Bacon’s 1620 work the New Organon, which was designed as an overhaul of Aristotle’s eponymous text on logic, is widely considered to provide the first systematic description of a scientific method.”

According to Bacon, “the causal principle underlying each phenomenon could be uncovered,” but about a century later, David Hume notes in A Treatise of Human Nature that, “every time we draw an inductive inference, our chain of reasoning conceals the unstated, unproven premise that nature is uniform across space and time: ‘If Reason determin’d us, it would proceed upon that principle that instances, of which we have had no experience, must resemble those, of which we have had experience, and that the course of nature continues always uniformly the same.’

Hume’s ‘problem of induction’ dogs philosophers to this day. It doesn’t much bother scientists, however. Induction is the firm foundation of the scientific method and researchers carry on making empirical observations and inductive inferences unperturbed by philosophical dilemmas.”

During the last few centuries, “natural philosophy, which encompassed all scientific endeavor, was gradually transformed into the highly technical and specialized disciplines we recognize today. The twentieth century brought perhaps the most dramatic changes, as government and industry began to invest heavily in scientific research, having recognized its value to public health, technology, national security and prestige.

At the same time, there was heated debate as to how science itself makes progress. Karl Popper believed that the defining quality of a scientific theory is its ability to be falsified: a solid theory should contain hypotheses that can be decisively ruled out by experiment. It is primarily by falsifying erroneous theories, not by accumulating supporting evidence for true ones, that science makes progress. […]

Thomas Kuhn’s theory of how science advances is more radical. Instead of obeying a linear process of verification and falsification, he thought that science as a whole often advanced in unpredictable jumps. In The Structure of Scientific Revolutions, Kuhn writes that scientists often work productively on the basis of a given paradigm or foundational research model for long stretches of time. Then there is a trickle of contradictory and/or puzzling experimental results. Eventually, these problematic findings grow so numerous that they provoke a crisis in the discipline, which is resolved when a new paradigm is adopted. Competing scientific paradigms are often incommensurable, meaning that their methodologies and languages cannot be directly compared. But, although there are no permanent, objective rules that govern the choice between competing paradigms, Kuhn did not believe that the choice was arbitrary. A scientific paradigm should be simple, internally coherent, compatible with other accepted theories and able to generate new avenues of research and explain a wide range of phenomena.”

“As Kuhn points out, most day-to-day science consists of the modest, incremental progress of thousands of scientists, woven into a practical canon of knowledge. Researchers working anywhere in the world should be able to review the published findings of their peers and build upon them: the ability to reproduce experimental results is a cornerstone of the scientific method. Recently, however, there has been growing alarm over a replication crisis, particularly in the biomedical and social sciences.

One high-profile example came to light when pharmaceutical firm Amgen attempted to reproduce the findings of 53 landmark papers in cancer biology — and failed to do so for all but six. This raises serious questions. Are resources being invested in technology and therapeutics on the basis of flawed data? Is this problem endemic to just one corner of scientific research or is it more widespread? A few factors may be playing a role here: flawed research methodology and abuse of statistics, such as p-hacking; the need to publish or perish, which invariably leads to rushed and shoddy research; the gradual drift toward a Big Science in which the majority of scientific R&D is funded by corporations, instead of government. Science is a human institution, limited by the fallibility of its individual human practitioners.

Passionate debate over the proper limits and role of science in our societies will surely continue. But a careful study of the history and philosophy of science could have a salutary effect on the discipline. It would help us better communicate science to the public, suggest fruitful paths forward as we learn from the challenges of the past, and bring this venerable institution back down to earth where it belongs.”

A ‘new’ interdisciplinary approach to education

The one-track mind of the current education system is flawed. The London Interdisciplinary School (LIS), the first new university to open in more than 50 years, aims to put it right.

“Your life is assumed to be linear. Even before you know what you really want to do with your life, you have to specialise and commit to becoming an airline pilot or a plumber or studying humanities or going into the sciences. […] And then you come off the tracks at a certain point when you realise everything else you are automatically excluding. A higher education is only a higher form of ignorance. Increasingly, we teach more and more about less and less,” Andy Martin writes in In the age of Wikipedia, is it better to study everything?

“We used to produce polymaths, who would have a decent shot at anything and everything. Aristotle, as Peter Burke points out in his recent book The Polymath, ‘is most often remembered as a philosopher concerned with logic, ethics and metaphysics, but he also wrote on mathematics, rhetoric, poetry, political theory, physics, cosmology, anatomy, physiology, natural history and zoology.’ Or take Blaise Pascal, for example, in the 17th century: not only a theologian and philosopher (and author of the Pensées) but a mathematician who came up with an early form of calculus and one of the first computers to boot (and he died at 39).

The 17th-century Jesuit scholar, Athanasius Kircher, has been called ‘the last man to know everything.’ By the time of the Encyclopédie (‘A Systematic Dictionary of the Sciences, Arts, and Crafts’ — derived from the concept of enkuklios paideia, or ‘rounded education’) in the 18th century, in which writers like Rousseau and Diderot heroically bestrode the Enlightenment, we were already waving farewell to the ideal of knowing it all and to the figure of the ‘philosophe’ — who was a generalist, not a specialist, before the industrialisation of philosophy. Noble exceptions apart, from the 19th century on, we became humble workers on an infinite assembly line of which we could only ever see a small part.”

No wonder then that modern-day students end up feeling “alienated,” as the LIS’s Academic Lead and Director of Teaching & Learning, and one of the forces behind the new institution, Carl Gombrich, says. It was the word Karl Marx used about how the proletariat must feel, disconnected from their own work.

“Gombrich speaks of a ‘new Renaissance,’ uniting teaching and cutting-edge research, and producing a generation of polymaths. ‘There’s a value — a certain cognitive effort — in learning anything,’ he says. ‘But what we really want is wide-achievers as well as high-achievers.’ The LIS ethos was inspired in part by the philosopher Karl Popper’s theory of [objective] knowledge as ‘conjecture and refutation.’ ‘You start with a real problem and then bring to bear whatever you need to know. It could be maths or it could be writing. But it also leaves open the possibility that you go in different directions.’”

“One topic that will provide an initial focus is the question of happiness. It can be approached as a health issue, or in terms of genetics or cognitive science or linguistics or anthropology. ‘And there is a whole philosophy of melancholy,’ says Gombrich. ‘And anyway what’s wrong with unhappiness?’ Other ‘problems’ that are on the agenda are climate change, AI and ethics, public health, and education itself. ‘They are big chunky problems, and they are all interdisciplinary. You can’t ignore maths or science in the age of Covid.’

He stresses that this is not a ‘pick’n’mix’ approach — a criticism sometimes levelled at US colleges. But there is a degree of convergence with the US. Professor Joy Connolly, formerly provost of the Graduate Centre at the City University of New York, now president of the American Council of Learned Societies, says that ‘across the country it’s interdisciplinary programmes that are working best: food studies, environmental justice, peace studies, political economics, translation, public health studies/policy.’ And there is another common denominator with the American business school. LIS is looking for students who are capable and motivated, but also entrepreneurial-minded. They offer internships across all three years. Students are encouraged to get out of the ivory tower. And their business partners are invited in too. ‘These are real world problems,’ says Gombrich. ‘There must be a bit of ivory tower — a degree of seclusion — but whatever they learn in an academic context is important outside too.’”

And also this…

In Let’s imagine a different kind of platform economy, Mariana Mazzucato, the economist who, according to Pope Francis, “forces us to confront long-held beliefs about how economies work,” has a few suggestions for US and global policymakers to address the question of how best to govern platforms.

“First, when do the relationships among the platform, their users and their merchants establish a healthy ecosystem of services and innovation? What is the cost of being excluded from a given platform?

Second, are the business models and payment relationships among platforms, users, merchants and advertisers effectively contributing to the productive capacity of the market? Are they encumbered by digital economic and algorithmic rents? How transparent are the decisions by which value is allocated among users, suppliers, advertisers and the platform itself?

Third, what ecosystem and institutional capabilities are missing which can be created to drive investment, standards, enforcement and coordination capacities to transition towards privacy and public value empowering internet and economy? What are the kinds of digital public values which are demanded and feasible through new institution creation, yet under-provided by the current system?

Fourth, who gets the value from the creation and assessment of data, and what other kinds of governance institutions could mediate these relationships? Changing the value of data means changing the fundamental structure and direction of the digital economy, changing what kind of data is worth collecting and whether to collect it at all.

When the means of shaping a democracy are built on the ability to fine tune placement on a page, when the competitive advantage of a firm is dependent on non-transparent allocative algorithms, the concern is not simply whether there are anti-competitive practices, but whether, even if policymakers solve them, are they addressing the fundamental problem. Policymakers who merely focus on efficiency and the rate of innovation will fundamentally miss the opportunity, and rising demand, to improve the direction.”

“At this time, what the Greeks termed Kairos [καιρός], designating the correct or auspicious moment (as opposed to Chronos [χρόνος], which refers to sequential time, or Aion [Αἰών], which denotes the ages or cyclical time) feels very out of reach,” Grace Linden writes in Time, like memory, is fickle: days wrap back on themselves.

“Even so, there is no singular clock, no one ‘time organ,’ as the psychologist Robert E. Ornstein put it, to which we all adhere. Instead, our temporal perspectives are cultural (one friend tells me that she sees a year in the shape of a sea cucumber, and a lifetime as vast and unknowable as the ocean floor), and physiological. As neurobiologists have shown, how quickly or how slowly we feel time passing fluctuates wildly for each of us, its pace, according to researchers at the Weizmann Institute of Science in Israel, linked to the formation or lack of new memories and the flow of new experiences. Time is ruled entirely by one’s own sense of being, by hunger and humiliation, by lovesickness and dread and delight. The metrics cannot be severed from the rhythms of a life.”

The titular character of W. G. Sebald’s novel Austerlitz “finds the cultural segmentation of time almost incomprehensible:

‘And is not human life in many parts of the earth governed to this day less by time than by the weather, and thus by an unquantifiable dimension which disregards linear regularity, does not progress constantly forward but moves in eddies, is marked by episodes of congestion and irruption, recurs in ever-changing form, and evolves in no one knows what direction?’

Time, as he sees it, is always in flux. It is as uncontrollable as the wind. For Austerlitz, who allows himself to learn the fate of his family during the Holocaust only as an adult, this awareness is a crippling, debilitating force that bears down upon his body: this temporal vortex is entirely autobiographical, and serves as a reminder of all that he has lost.”

“For [Heidi Julavits in The Folded Clock (2015)], all time is autobiographical. Like Marcel Proust’s madeleine, one bite of which plunges the narrator back to his childhood, her sense of time seems as much governed by the sun rising and setting as it is by a series of objects. Regularly, Julavits is struck by the transformative potential of certain items: an enamel tap handle, a second-hand necklace, a Rolodex fished out of a trashcan at JFK. She holds on to these until their true purpose is unveiled. Part of her captivation comes from her failure to fully ‘possess’ these objects. Their everydayness renders them magical. Their purpose is simply to endure.”

“Given the importance of clear, effective speech, you’d think we’d spend lots of time learning to do it in school. Yet for most of us, at least in the West, education consists of 12 to 20 years’ reading, writing and solving mathematics problems — on paper. As our society has become increasingly knowledge- and information-based, rhetoric and speech instruction have fallen almost entirely out of favour,” John Bowe, a speech consultant and the author of I Have Something to Say: Mastering the Art of Public Speaking in an Age of Disconnection, writes in How to speak in public.

In his essay, Bowe explores what we could learn from the ancient Greeks, for whom “public speaking, like writing or, for that matter, military prowess, was considered an art form — teachable, learnable, and utterly unrelated to issues of innate character or emotional makeup.”

One piece of Bowe’s advice is to think about your audience’s happiness.

“Aristotle was seldom accused of being a warm, fuzzy type of guy, but one of his biggest insights into language theory was that people listen for one reason alone: for their own wellbeing.

[…]

In his Art of Rhetoric, Aristotle enumerates the things that make people happy: health, family, wealth, status and so on. It seems like a bizarre tangent for a book on public speaking, until you grasp his point. Your success as a speaker, regardless of your subject, depends on demonstrating to your audience that you’re paying attention to the one thing they care about most. You see them, you get them, you’re paying attention to their needs.

In other words, whether you’re talking about tax policy or ways to reduce your carbon footprint, your audience cares less about the rightness or logic of your points than they do about how your ideas will improve their lives.

Making an audience happy has little to do with adopting an inauthentically ‘fun’ or peppy manner of speaking so much as demonstrating on every level that you’re speaking for their benefit, not your own. If you’re forced to give a boring sales report, for example, you can demonstrate your attentiveness by being mercifully brief and clear. The point, in the end, is to show respect for your audience’s time and attention.”

Gregory Orekhov’s Black Square in Malevich Park, located in the Moscow region, is the first large-scale monument created in memory of the Russian avant-garde artist and art theorist, Kazimir Malevich, and a unique example of a contemporary Russian sculpture.

The sculpture acts as a passageway into the park. The object itself is quite utilitarian in its nature. According to the artist’s idea, once we enter the “square,” we find ourselves at a crossroads — to either enter the park or enter infinity, a crossing between what occurs in real life and hundreds of its unfulfilled variations.

“There isn’t a moment when all of a sudden the world catches on fire and is gone. It’s just a matter of how many people will die and how many ecosystems will disappear. At some point the Amazon will dry up and become a savannah. Eventually, you’re not going to have Arctic ice or polar bears or coral reefs. You’re not going to be growing crops. People who talk about climate change often say there’s some magic breaking point, but we don’t know that. We just know that if you ignore climate change, these environmental and human tragedies eventually will happen. One sad fact is that there are lags in this system such that even once you get emissions to zero, temperatures won’t get cooler for about two decades. So I’m not likely to be alive in a year that’s cooler than the previous one.” — Bill Gates, from a conversation with the editor-in-chief of Harvard Business Review, Adi Ignatius